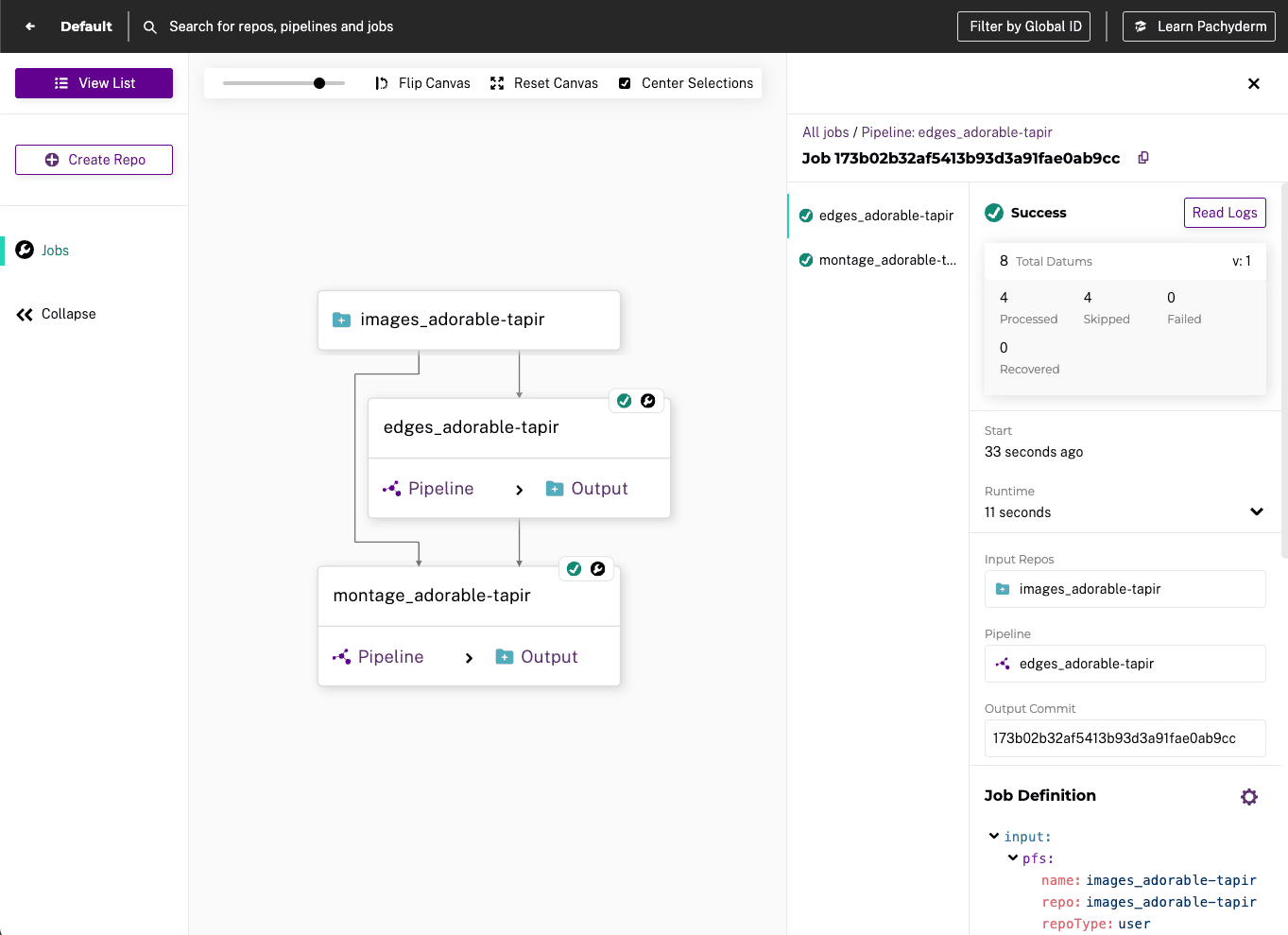

Reproducibility

Ensure reproducibility and compliance via immutable data lineage and data versioning for any type of data.

Increase team efficiency and collaboration through a Git-like structure of commits, branches, and data repositories.

Immutable Data Lineage

All data and pipeline code are versioned, providing an immutable record of every activity and asset.

Track any result all the way back to its raw input.

Full versioning for metadata, including all analyses, parameters, artifacts, models, and intermediate results.
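
To make the lineage idea concrete, here is a minimal sketch of how an immutable record can link a result back to its raw inputs. It is an illustration under stated assumptions, not the product's implementation: the `Commit` class and the `record` and `trace_to_raw` helpers are hypothetical names, and the in-memory `store` dictionary stands in for a real versioned repository.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Commit:
    """Hypothetical immutable record of one versioned asset."""
    name: str
    content_hash: str
    inputs: tuple = ()  # IDs of the commits this one was derived from

    @property
    def commit_id(self) -> str:
        # The ID depends on the content and on the input IDs, so changing
        # any upstream asset produces a different ID downstream.
        payload = json.dumps([self.name, self.content_hash, list(self.inputs)])
        return hashlib.sha256(payload.encode()).hexdigest()[:12]


def record(store: dict, name: str, data: bytes, inputs=()) -> Commit:
    """Hash the data, link it to its input commits, and store the record."""
    commit = Commit(
        name,
        hashlib.sha256(data).hexdigest(),
        tuple(c.commit_id for c in inputs),
    )
    store[commit.commit_id] = commit
    return commit


def trace_to_raw(store: dict, commit: Commit) -> set:
    """Return the names of the raw inputs (commits with no inputs) behind a result."""
    if not commit.inputs:
        return {commit.name}
    raw = set()
    for parent_id in commit.inputs:
        raw |= trace_to_raw(store, store[parent_id])
    return raw


store = {}  # stand-in for a versioned repository
raw_images = record(store, "raw/images", b"pixels")
raw_labels = record(store, "raw/labels", b"labels")
features = record(store, "features", b"feature-matrix", inputs=[raw_images])
model = record(store, "model", b"weights", inputs=[features, raw_labels])

print(sorted(trace_to_raw(store, model)))  # ['raw/images', 'raw/labels']
```

Because each ID is derived from the content hash and the IDs of its inputs, a record cannot be altered without changing every ID downstream of it, which is what makes the history tamper-evident in this sketch.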

Data Version Control

Automatic and intelligent versioning of even the largest data sets, whether structured or unstructured.

A Git-like structure enables effective team collaboration.

Diff two data commits to debug data, code, or model failures more efficiently.
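
As a rough illustration of what a diff between two data commits can report, the sketch below compares two snapshots by content hash. It assumes each commit can be represented as a mapping from file path to hash; the `snapshot` and `diff_commits` functions and the sample file names are hypothetical and are not taken from any specific tool.

```python
import hashlib


def snapshot(files: dict) -> dict:
    """Map each file path to the SHA-256 hash of its contents."""
    return {path: hashlib.sha256(data).hexdigest() for path, data in files.items()}


def diff_commits(old: dict, new: dict) -> dict:
    """Compare two snapshots and list added, removed, and modified paths."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }


# Two hypothetical commits of the same data repository.
commit_a = snapshot({"train.csv": b"a,b\n1,2\n", "labels.csv": b"x\n"})
commit_b = snapshot({"train.csv": b"a,b\n1,3\n", "test.csv": b"a,b\n"})

changes = diff_commits(commit_a, commit_b)
print(changes)
# {'added': ['test.csv'], 'removed': ['labels.csv'], 'modified': ['train.csv']}
```

Comparing hashes rather than raw bytes keeps the comparison cheap for large files, and the resulting list of changed paths narrows down where to look when a model's behavior shifts between two commits.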