This post describes a retired beta. We have updated our product to use the JupyterLab Pachyderm Mount Extension for data scientists. Learn more about the current functionality here.

Pachyderm has always been focused on delivering a robust platform for data engineers. And by doing this, we have enabled engineers from many different industries to create data-driven applications that can reliably transform and manage their data at scale.

But that’s not where data scientists spend most of their time. Jupyter Notebooks are the lingua franca for data science. The ability to quickly prototype code alongside explanations, immediately visualize the results, and document the process all in one place gives notebooks a leg up on almost all development environments.

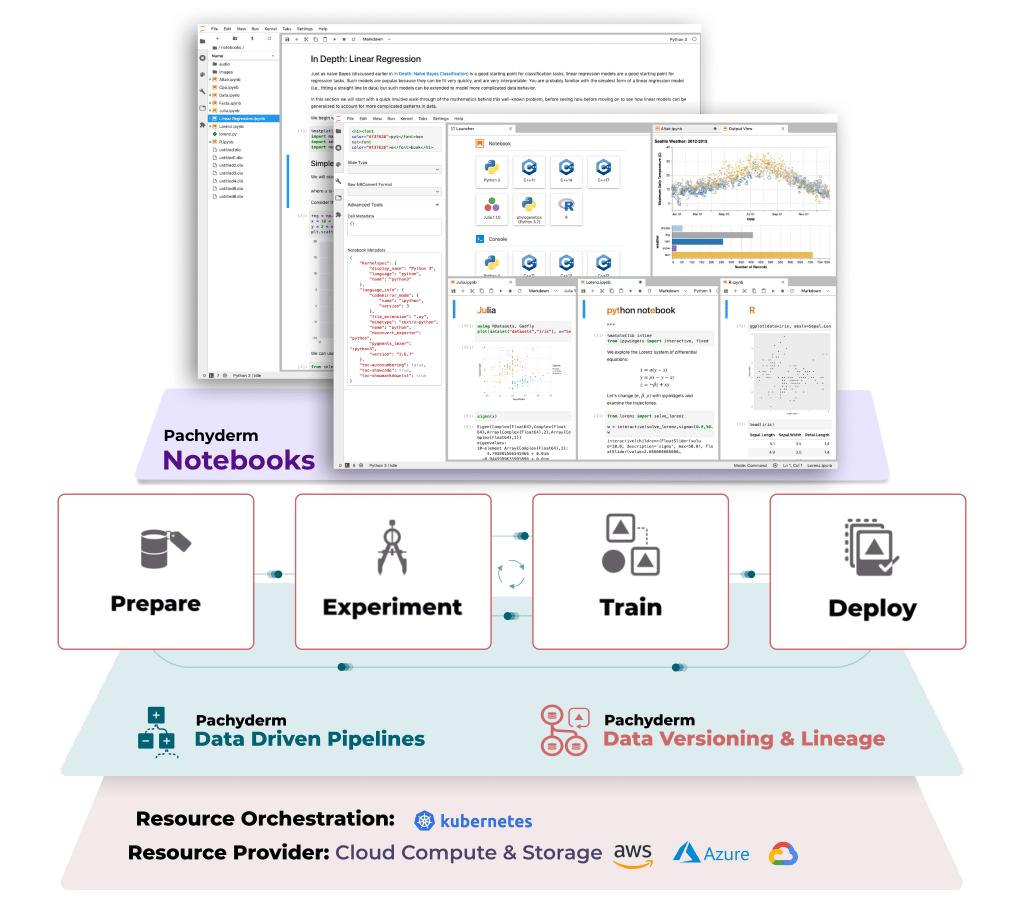

We wanted a way to unite the two pillars of data science, bringing together our powerful data engineering capabilities with a familiar interface that data scientists understand and work with every day. That’s why we’re introducing the beta version of Pachyderm Notebooks in Pachyderm 2.0 with the goal of bringing you one step closer to the ideal ML development experience.

- Data-centered development

- Striking the right balance

- Pachyderm Notebooks

- This is just the beginning!

Data-centered development

At Pachyderm, we believe a data-centric mentality is fundamental to data science. Data-centric means that data is just as valuable and dynamic as the software we use. You can’t treat data as an afterthought and hope to build a model that powers a production app. That’s because in machine learning, any model is nothing but a snapshot. It contains our understanding of the problem, the learning techniques used, and the data available, but it’s still a frozen point in time.

Just as you’d never expect to have a software release with zero bugs, you can’t expect your model to have zero bugs. Even bias or gaps in your data can and should be considered bugs. And to fix them and continually improve our models, we must make iterative updates to our understanding, our techniques, and our data. Those iterations are the key to a cleaner, more powerful dataset and, subsequently, a better model.

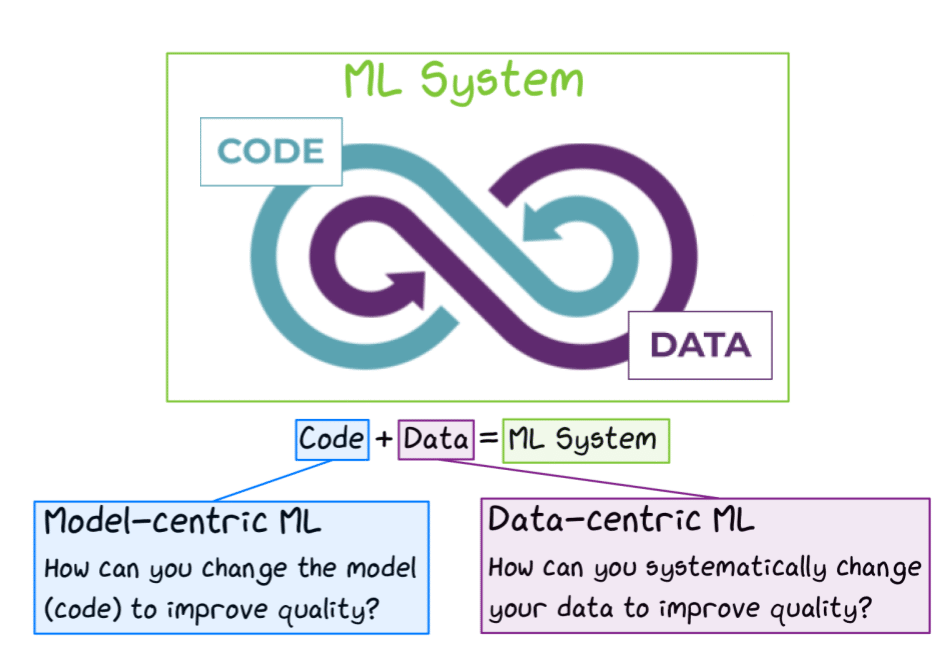

Figure: Model-centric vs. Data-centric development. Adapted from Andrew Ng on MLOps and Completing the Machine Learning Loop (Image by Author)

Ultimately, a machine learning system incorporates both model-centric and data-centric development lifecycles. At Pachyderm, we don’t believe that these are at odds with each other; implemented correctly, they are complementary. We need to be able to iterate on both code and data without introducing overhead or losing anything in the process.

While Pachyderm provides the platform to enable both lifecycles, the key to iteration is notebooks. They’re the convergence point for data engineering and data science. Notebooks are also where we tell the story of that data and that model. Why was the data transformed in this way? What conclusions can be drawn from this experiment? What should we try next?

Notebooks enable us to develop freely with many benefits:

- Code gets segmented into cells for selective execution and modification

- Documentation can live alongside our code as we write it

- Multiple languages are supported in one place

- Visualizations are easy and crucial to helping us understand data and models

- Notebooks can run on cloud resources and connect to them from anywhere

- It’s easy to export and share notebooks and results with others on our team

Together, these features (and many more) make notebooks one of the most efficient ways to work with data and adapt as needed. And since a data scientist can spend more than 60% of their time performing exploratory data analysis (EDA), notebooks are a game changer.

Striking the right balance

But what’s good for exploration is not always good for production. As popular as notebooks are with data scientists, they are not highly respected in the software engineering community, and some practitioners avoid them at all costs. The big problem is that notebooks are easy to work with but hard to manage.

The freeform nature of notebooks makes it easy to produce something messy and unreliable. Many traditional software programming IDEs enforce specific coding practices, but with notebooks it’s left up to you to practice proper coding hygiene.

The debate over the pros and cons of notebooks versus IDEs is hardly new. This culture war has been raging between engineers, data scientists, and machine learning experts for some time now (even getting personal on occasion), a machine learning counterpart to the Editor War.

But this struggle goes both ways. Data and code are two variables in the equation we need to balance – with data being a relatively new variable. If we focus our efforts on deployable code, our policies on data must be quite rigid. That makes our ability to iterate, explore and understand our data limited, which makes it a lot harder to produce useful models.

We need to navigate the space between the needs of data engineers and data scientists.

Pachyderm Notebooks

Introducing notebooks to data-centric development requires striking the right balance between reliability and agility. We want to use notebooks for all the benefits that they provide while also reducing bad habits, easing our path to production. In its first iteration, Notebooks should make it easy to get started and facilitate easy interaction with Pachyderm.

Let’s first take a look at some of the features that are already incorporated into this release of Pachyderm Notebooks.

No Setup

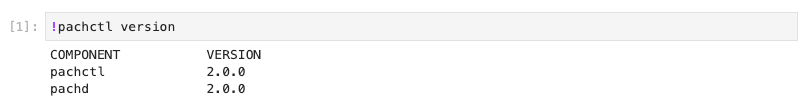

The Pachyderm client (pachctl) and python-pachyderm are installed and configured by default along with our examples and starter notebooks, so you can begin learning and experimenting with Pachyderm right away.

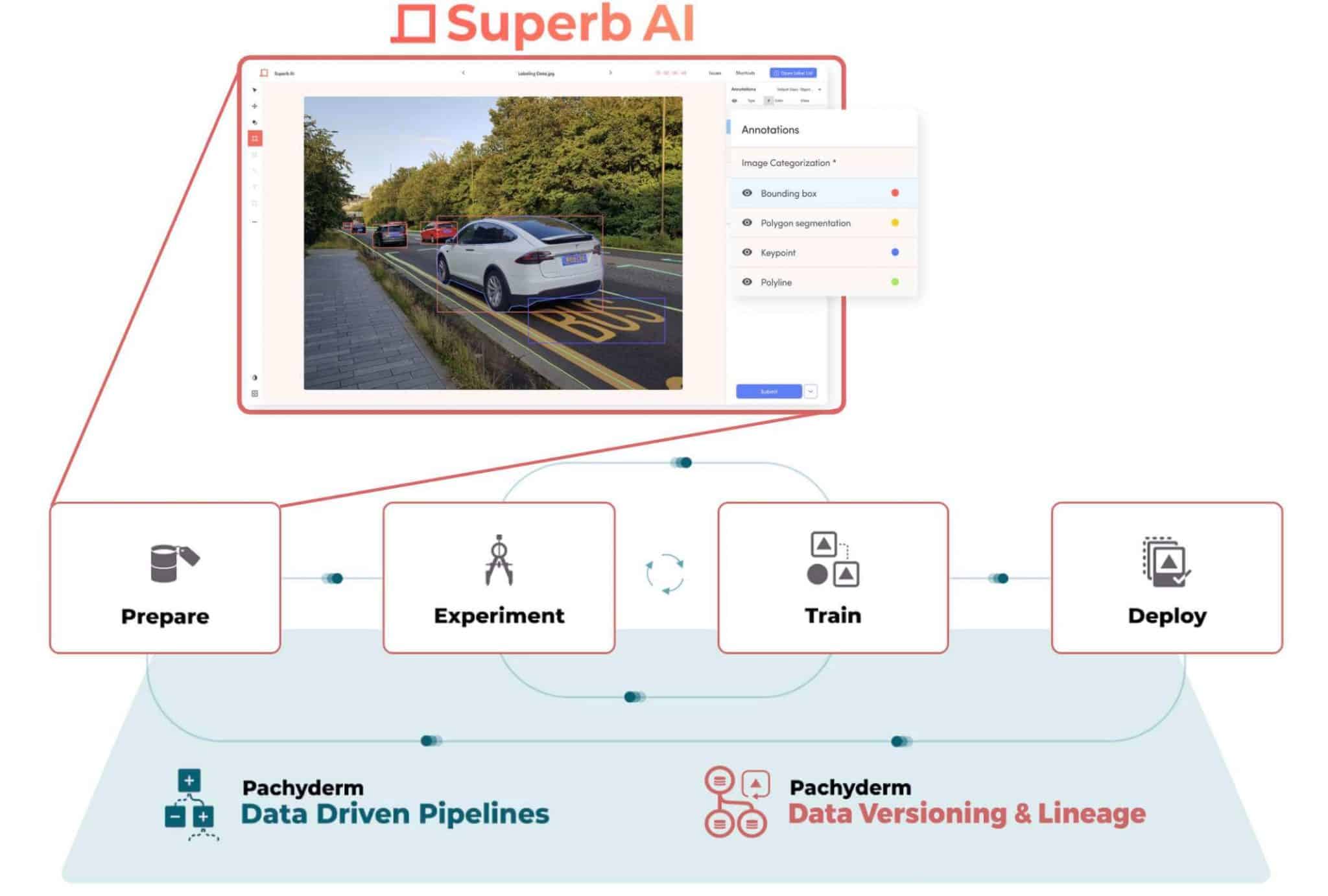

Unify Data Engineers and Data Scientists

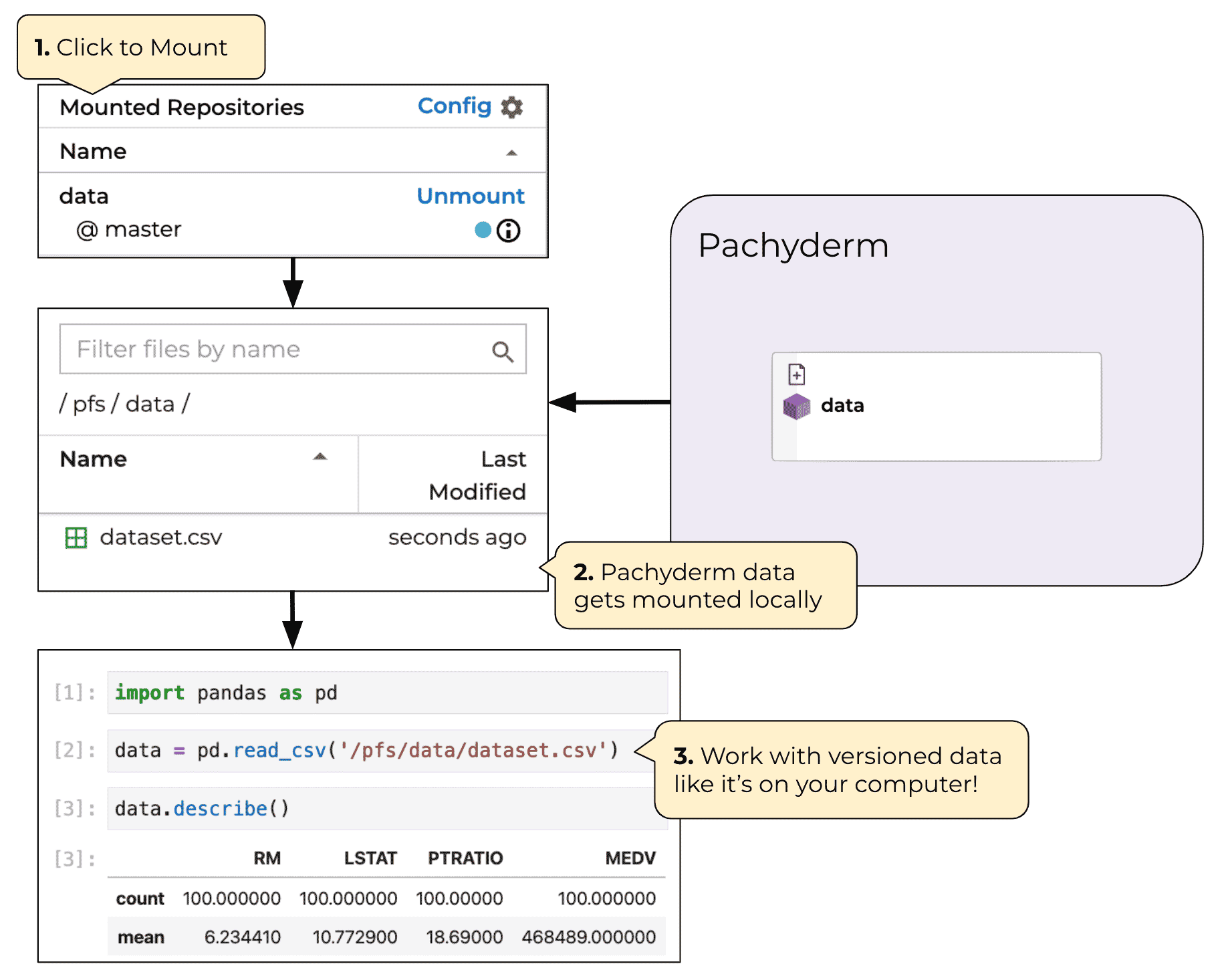

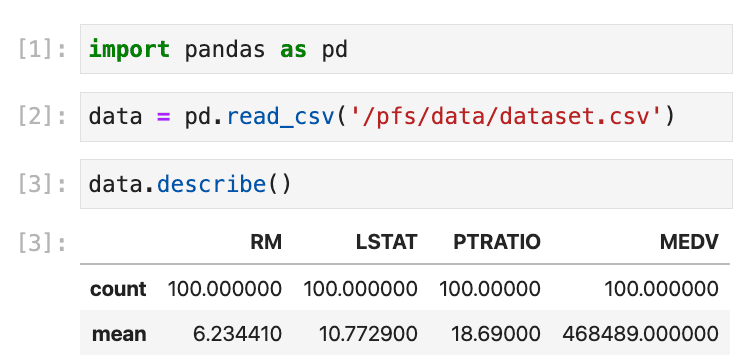

Having Notebooks deployed right alongside a production environment allows data scientists to safely connect to the most current data for their experiments. You can mount commits directly from Pachyderm’s versioned data repositories right into your Notebook environment as if they were local files. This enables a data scientist to experiment with one version of a dataset while data engineers or data labelers are curating the next version.

For now, you can mount data directly into the notebook environment by using a FUSE mount.

    $ pachctl mount -r data@v0.1 /pfs/
    $ tree /pfs
    /pfs
    └── data
        └── dataset.csv

Mounting data into Notebooks becomes even more powerful when you begin to build pipelines. Pachyderm pipelines apply code to files from versioned data repos. This means that the code you write in your notebook will function exactly the same when it’s packaged into a Pachyderm pipeline! Prototype your code against the same Pachyderm data it will see at scale.
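To make the "same code in the notebook and in the pipeline" idea concrete, here is a minimal sketch. It assumes the mounted repo contains the `dataset.csv` file shown above; the function name `summarize_csv` and the `summary.txt` output file are hypothetical and chosen only for illustration. The function takes its input and output directories as arguments, so nothing in it depends on where it runs.

```python
import csv
from pathlib import Path


def summarize_csv(input_dir: str, output_dir: str, filename: str = "dataset.csv") -> Path:
    """Count rows and columns of a CSV in input_dir and write a summary to output_dir.

    Hypothetical helper for illustration; it only uses ordinary file I/O,
    so it behaves the same on a mounted repo and on a local directory.
    """
    source = Path(input_dir) / filename
    with source.open(newline="") as f:
        reader = csv.reader(f)
        header = next(reader, None)  # first row is treated as the header
        row_count = sum(1 for _ in reader)  # remaining rows are data rows

    out_dir = Path(output_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    result = out_dir / "summary.txt"
    columns = len(header) if header else 0
    result.write_text(f"rows={row_count} columns={columns}\n")
    return result
```

In a notebook you might call `summarize_csv("/pfs/data", "/tmp/out")` against the mounted commit. Inside a pipeline, where Pachyderm exposes the input repo under `/pfs/<repo>` and collects results from `/pfs/out`, the same function would be called as `summarize_csv("/pfs/data", "/pfs/out")`.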

Low cost

Notebook users often forget to shut down their servers when they finish their experiments, so it is usually a good idea to shut down idle servers automatically. Pachyderm Notebooks use JupyterHub’s default culling pattern to determine when a notebook server is idle.

When a user returns to Notebooks after the server has shut down, they will have to start their server again. Work in open notebooks is regularly saved in a .ipynb_checkpoints directory, but the in-memory state of the previous session will be inaccessible.
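The idea behind idle culling can be sketched in a few lines. This is not JupyterHub’s implementation and not how Pachyderm Notebooks is configured; the function `find_idle_servers`, the `last_activity` mapping, and the timeout value are all hypothetical. It only illustrates the decision rule: a server counts as idle once its last recorded activity is older than a chosen timeout.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def find_idle_servers(
    last_activity: Dict[str, datetime],
    timeout: timedelta,
    now: Optional[datetime] = None,
) -> List[str]:
    """Return the names of servers whose last activity is older than timeout.

    Illustrative only: last_activity maps a (hypothetical) server name to the
    timezone-aware time it was last seen doing work.
    """
    now = now or datetime.now(timezone.utc)
    return sorted(
        name for name, seen in last_activity.items() if now - seen > timeout
    )
```

A culling service would run a check like this on a schedule and stop the servers it returns, which is why unsaved in-memory state does not survive a long break.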

Secure

This is just the beginning!

At its core, Pachyderm provides all the rigor necessary to be the foundation for data. With the release of Pachyderm Notebooks, we want to build on this foundation. We want to give users the freedom to experiment, explore, and interact with this foundation: to work with data, to understand it, to solve problems, and to scale at the click of a button. No platform to date has fully accomplished this vision, but it is our aspiration. And Pachyderm Notebooks marks the beginning of our journey to bring that vision to reality.

This beta release of Notebooks is just the start. We’ve been hiring and working on some really exciting features to take your development experience to the next level. Stay tuned for even more features soon.

Interested in working with us on Pachyderm Notebooks? Reach out to us on Slack and find out how you can get involved.