Sentiment Analysis

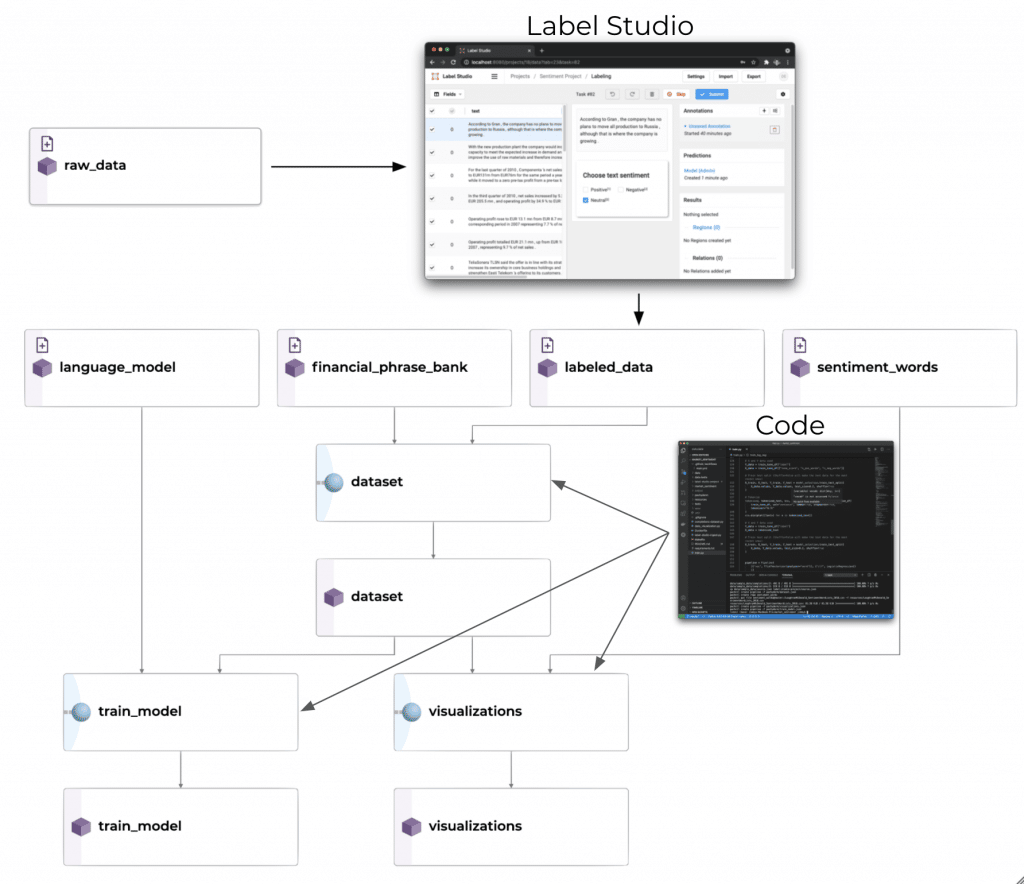

In the example below, we show a market sentiment NLP implementation in Pachyderm. It uses transfer learning to fine-tune a BERT language model that classifies text by financial sentiment.

The example shows how to combine inputs from separate sources, and it incorporates data labeling, model training, and data visualization.
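To show concretely what "classify text for financial sentiment" produces, here is a minimal sketch of the final step of a fine-tuned classifier: the model emits one score (logit) per class, and a softmax turns those scores into probabilities from which the top label is picked. The three-class label set, its order, and the function names are assumptions made for illustration. They are not taken from this example's code, so check the real model's configuration before relying on a particular label order.

```python
import math

# Hypothetical label order for a three-class sentiment head (an assumption;
# the actual order depends on how the model was fine-tuned).
LABELS = ("positive", "negative", "neutral")


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]  # subtract max to avoid overflow
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits, labels=LABELS):
    """Return (label, probability) for the highest-scoring class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Example: made-up logits for a single sentence.
label, prob = classify([2.1, -0.3, 0.4])
print(label, round(prob, 3))
```

The softmax leaves the ranking of the logits unchanged, so the predicted label is simply the argmax. The probability is still useful downstream, for example when plotting confidence in a visualization step.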

FinBERT is a pre-trained NLP model adapted to analyze the sentiment of financial text. The original BERT-based language model was trained with a large corpus of Reuters financial news, and training code