Optimizing datasets provides bigger benefits for most teams

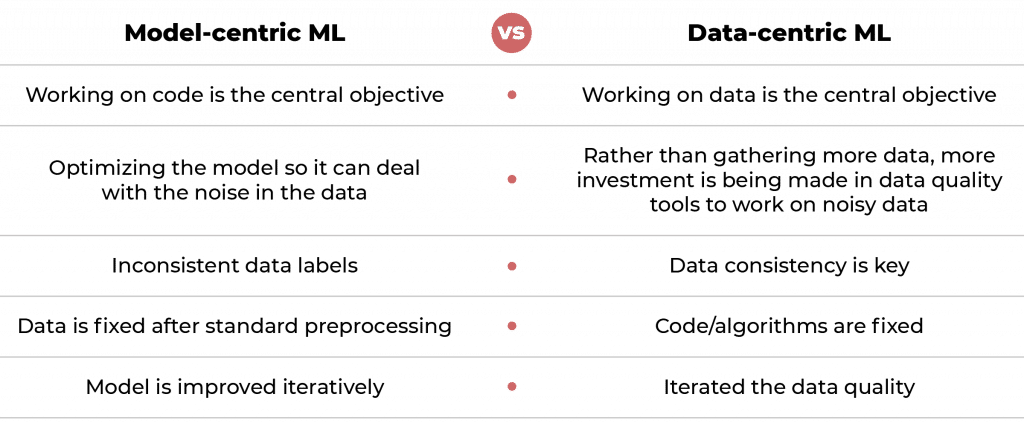

Over the last decade, AI/ML researchers have focused on code and algorithms first and foremost.

The data was usually imported only once and then left fixed or frozen. If there were problems with noisy data or bad labels, researchers would usually try to overcome them in the code.

Because so much time was spent on the algorithms, they're now largely a solved problem for many use cases, such as image recognition and text translation.

Swapping one algorithm out for a newer one often makes little to no difference.

Data-Centric AI flips that on its head and says we should go back and fix the data itself. Clean up the noise. Augment the dataset to deal with the noise that remains. Re-label so it’s more consistent.
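As a rough illustration of what "re-label so it's more consistent" can mean in practice, here is a minimal sketch that flags identical inputs carrying conflicting labels. The function names (`normalize_label`, `find_label_conflicts`) and the toy data are made up for this example; a real pipeline would likely need fuzzier matching and a human review step.

```python
from collections import Counter, defaultdict


def normalize_label(label: str) -> str:
    """Collapse trivial differences such as case and stray whitespace."""
    return label.strip().lower()


def find_label_conflicts(examples):
    """Return inputs that appear with more than one distinct label.

    `examples` is an iterable of (text, label) pairs. The result maps each
    conflicting text to a dict of {normalized label: count}.
    """
    labels_by_text = defaultdict(Counter)
    for text, label in examples:
        labels_by_text[text][normalize_label(label)] += 1
    return {
        text: dict(counts)
        for text, counts in labels_by_text.items()
        if len(counts) > 1
    }


# Toy data, invented purely for illustration.
examples = [
    ("great product", "Positive"),
    ("great product", "positive "),  # same label once normalized
    ("arrived broken", "negative"),
    ("arrived broken", "positive"),  # genuine disagreement
    ("arrived broken", "negative"),
]

conflicts = find_label_conflicts(examples)
print(conflicts)
```

Here only "arrived broken" is flagged, because the two "great product" labels differ only in case and whitespace. Flagged items could then go back to annotators rather than being papered over in model code.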

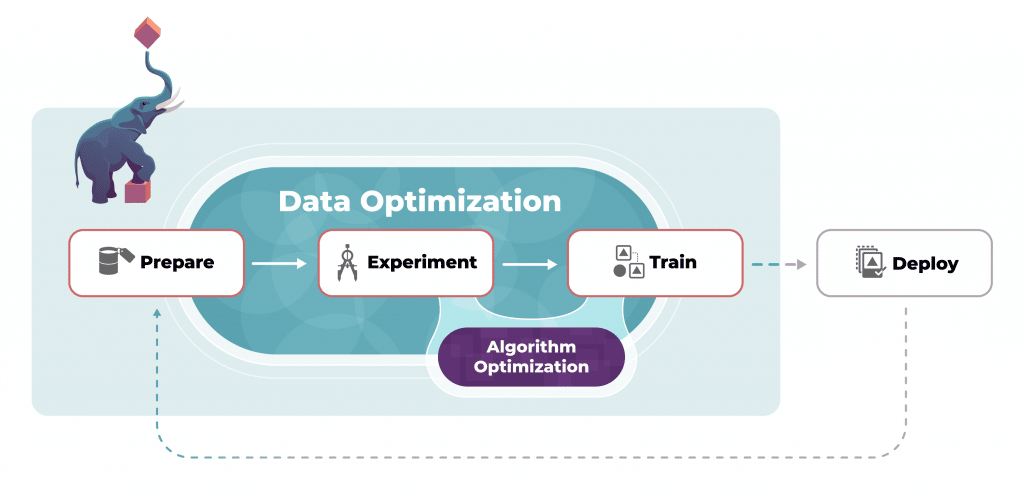

There are six essential ingredients for putting Data-Centric AI into practice in your organization: