This article was originally published on Medium and is reposted here with permission.

It’s a well-established truth that naming and caching are the hardest problems in computer science. So are off-by-one errors, but we will only support you emotionally with those 🍫.

To solve these problems for data science at scale, Pachyderm exposes an elegant naming system to access the lineage of your data, and a clever caching model that forms the basis of incremental data processing.

Pachyderm is an unstructured data storage and workflow engine that scales complex data workflows. It does so while versioning all input, intermediate and output data, along with the lineage relationships between said data. This means that you can easily audit and debug your data workflows by exploring each step’s inputs and output. All this translates to the Holy Trinity of Data Engineering: scalable, debuggable, and reproducible data processing.

Today we explore the naming system. Let’s rumble 🤙.

Let’s start with the basics. Pachyderm exposes the data primitives: repos, branches, commits, and files. For those familiar with git, these should ring a bell. Let’s look at a practical example to see how this git-like model mixes with data processing.

Okay, so say you grew up obsessed with watching cooking shows. Not all your friends approved. Anyway, life flies by. You’re all grown up now and all the best recipes are published across the internet. Naturally, you start by writing a web crawler to collect recipes and dump them into a Pachyderm repo called recipes. Each day your web crawler runs and creates a new commit containing all the goodies it found. Now you can go back and inspect the web crawler’s results from any given day. To get the most recent recipe dump, you run pachctl list file recipes@master. To get yesterday’s, you run pachctl list file recipes@master^ (the trailing ^ means “the parent of this commit”, just like in git). So far we have git for data. Groovy, right?
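If the ^ notation is new to you, here is a minimal sketch of how a name like master^ could be resolved by walking parent pointers. This is a toy model for illustration, not Pachyderm’s code: the Commit class, the resolve_ref function, and the commit IDs a1 and b2 are all made up.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Commit:
    id: str
    parent: Optional["Commit"] = None  # the previous commit on the branch


def resolve_ref(branches: Dict[str, Commit], ref: str) -> Commit:
    """Resolve 'master', 'master^', 'master^^', ... to a commit."""
    name = ref.rstrip("^")
    steps = len(ref) - len(name)  # one step back per trailing ^
    commit = branches[name]
    for _ in range(steps):
        if commit.parent is None:
            raise LookupError(f"{ref} has no such ancestor")
        commit = commit.parent
    return commit


# Hypothetical history: yesterday's crawl, then today's crawl on top of it.
yesterday = Commit("a1")
today = Commit("b2", parent=yesterday)
branches = {"master": today}

latest = resolve_ref(branches, "master")     # today's commit
previous = resolve_ref(branches, "master^")  # yesterday's commit
```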

In case you’re curious, Pachyderm de-duplicates data between commits. So if you get a lot of the same recipes each day, you won’t be paying the cost of storing a new copy in each commit. But that’s a story for another day.
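For the impatient, one common way to get this kind of de-duplication is content-addressed storage: store each blob under the hash of its bytes, so identical files collapse into one copy. The sketch below shows that general idea only. It is an assumption for illustration, not a description of Pachyderm’s storage layer, and the BlobStore class and the file names are hypothetical.

```python
import hashlib
from typing import Dict


class BlobStore:
    """Toy content-addressed store: identical bytes are kept only once."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        digest = hashlib.sha256(data).hexdigest()
        # Only store the bytes if this content has never been seen before.
        self.blobs.setdefault(digest, data)
        return digest


store = BlobStore()

# Each commit maps file paths to content hashes rather than to raw bytes.
day1 = {"/pancakes.txt": store.put(b"flour, eggs, milk")}
day2 = {
    "/pancakes.txt": store.put(b"flour, eggs, milk"),  # same recipe again
    "/ramen.txt": store.put(b"noodles, broth"),
}
```

Two commits reference three files in total, but the store only holds two blobs because the pancake recipe is shared.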

At this point it only makes sense to aggregate recipes by chef and cuisine so that you can easily skim a report of the hottest new recipes while sipping your morning joe ☕.

To bridge data versioning into the world of data processing, you read up on Pachyderm pipelines. As it turns out, a pipeline consumes data from repos, transforms it, and dumps the results into a brand new repo. Exactly what you were looking for! So when you want to aggregate your recipes, you define a pipeline that is fed data about recipes and authors, and processes it using some code you whipped up 🍦.

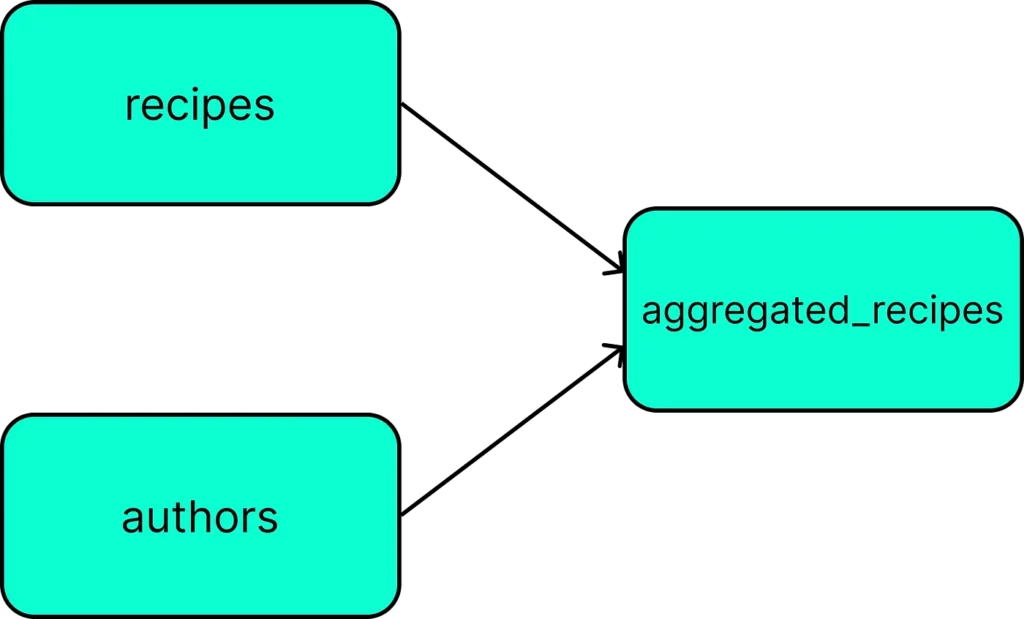

Putting it all together, you create a second repo called authors containing all of your favorite creators. To aggregate the recipes by author, you define a Pachyderm pipeline called aggregated_recipes that runs some code you wrote, which simply takes the recipe files and the author files and aggregates them. Each time data is updated in either the recipes or authors repo, i.e. the repo gets a new commit, Pachyderm executes your code in the aggregated_recipes pipeline and saves the result in a backing repo called… you guessed it… aggregated_recipes. Sweet, we have our first directed acyclic graph (DAG). DAG is common jargon for a full workflow containing one or more pipelines.
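What might “some code you wrote” look like? Here is a rough sketch. The file formats are assumptions made up for this example: each recipe is a JSON file with author and title fields, and each authors file lists one favorite author per line. The aggregate function and its parameters are hypothetical too.

```python
import json
from collections import defaultdict
from pathlib import Path


def aggregate(recipes_dir: Path, authors_dir: Path, out_dir: Path) -> None:
    """Write one file per favorite author listing that author's recipe titles."""
    # Assumed format: one author name per line in every authors file.
    favorites = set()
    for path in authors_dir.iterdir():
        if path.is_file():
            favorites.update(
                line.strip() for line in path.read_text().splitlines() if line.strip()
            )

    # Assumed format: each recipe is JSON with "author" and "title" keys.
    by_author = defaultdict(list)
    for path in sorted(recipes_dir.glob("*.json")):
        recipe = json.loads(path.read_text())
        if recipe["author"] in favorites:
            by_author[recipe["author"]].append(recipe["title"])

    out_dir.mkdir(parents=True, exist_ok=True)
    for author, titles in by_author.items():
        (out_dir / f"{author}.txt").write_text("\n".join(titles) + "\n")
```

Inside a pipeline container, Pachyderm conventionally exposes each input repo as a directory under /pfs and collects whatever is written to /pfs/out, so the call would look something like aggregate(Path("/pfs/recipes"), Path("/pfs/authors"), Path("/pfs/out")). Treat those paths as an assumption to check against the docs for your Pachyderm version.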

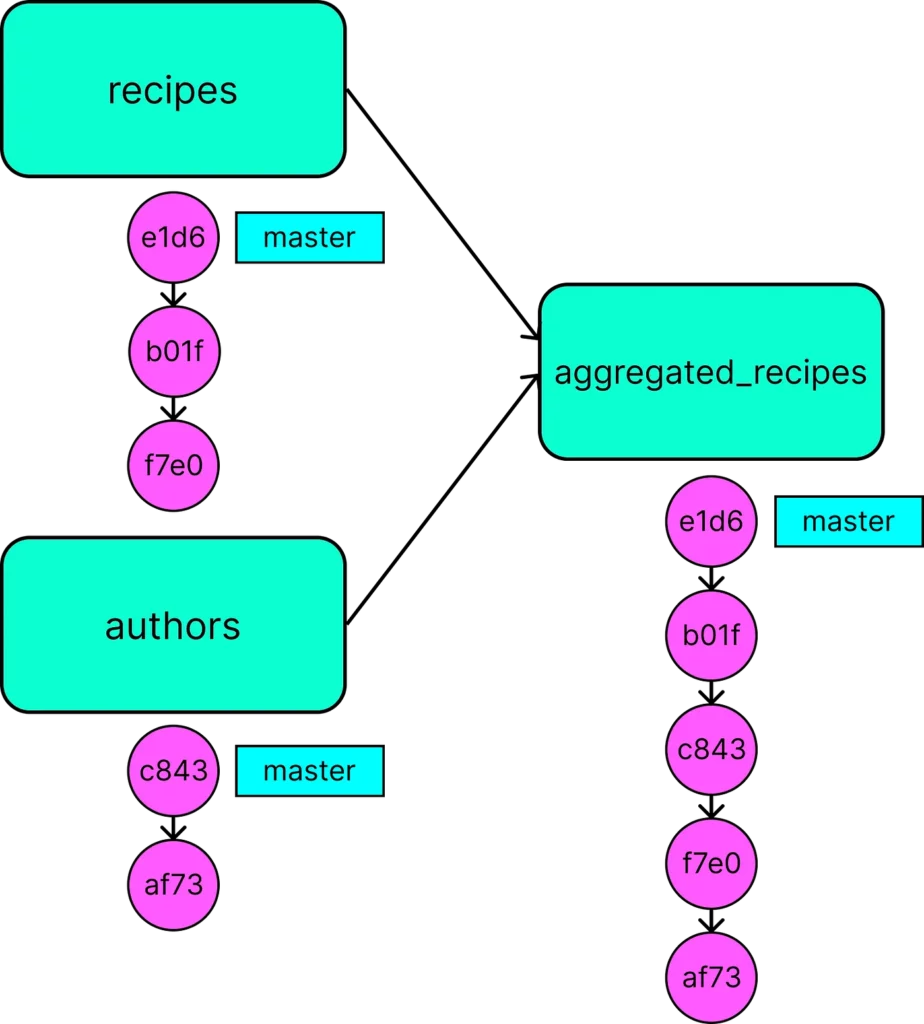

Now imagine that you’re being served up your daily recipe scrape today. Pachyderm creates a new commit on recipes@master that captures today’s recipe data and identifies it with a UUID (shortened here for readability). So today the recipes@master branch may resolve to the commit recipes@e1d6. Pachyderm then executes the aggregated_recipes pipeline and dumps its results into a new commit aggregated_recipes@e1d6. Here we call e1d6 a Global ID because the same ID will be used to name the commit on each repo participating in the DAG.
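To make the Global ID idea concrete, here is a toy model of what happens when a new commit lands: generate one ID, then stamp it on the changed repo and on every repo downstream of it. The PIPELINES table, the function names, and the starting commit ID a9f1 are hypothetical. Only the repo names and c843 come from the example.

```python
import uuid
from typing import Dict, List, Tuple

# Hypothetical DAG description: output repo -> the input repos it reads from.
PIPELINES: Dict[str, List[str]] = {
    "aggregated_recipes": ["recipes", "authors"],
}


def downstream_of(repo: str) -> List[str]:
    """Return every output repo reachable from `repo` through pipelines."""
    found: List[str] = []
    frontier = [repo]
    while frontier:
        current = frontier.pop()
        for output, inputs in PIPELINES.items():
            if current in inputs and output not in found:
                found.append(output)
                frontier.append(output)
    return found


def new_commit(repo: str, heads: Dict[str, str]) -> Tuple[str, Dict[str, str]]:
    """Commit to `repo` and give all downstream repos a commit with the same ID."""
    global_id = uuid.uuid4().hex  # a full-length ID; the article abbreviates to 4 chars
    updated = dict(heads)
    for name in [repo] + downstream_of(repo):
        updated[name] = global_id
    return global_id, updated


# Branch heads before today's crawl (a9f1 is a made-up ID).
heads = {"recipes": "a9f1", "authors": "c843", "aggregated_recipes": "c843"}
global_id, heads = new_commit("recipes", heads)
```

After the call, recipes and aggregated_recipes share the new ID, while authors still points at c843 because nothing changed there.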

After several data updates to our repos, our DAG might have the following commit structure:

If ye hath a name, ye shall be called.

So how does this relate to data lineage? When we talk lineage, we are really trying to answer questions like “what data and code produced these results?” If, for instance, you saw something that didn’t look right in today’s aggregated_recipes results, you might want to inspect the data used to produce those results. Pachyderm’s naming system supports these queries naturally.

To inspect the lineage, just call the upstream input by the Global ID, i.e. recipes@e1d6. It feels natural (even obvious) for aggregated_recipes@e1d6 to take recipes@e1d6 as its input.

Brilliant.

The entire lineage of your workflow, no matter how complicated, can be accessed simply by taking the ID of a commit you’re interested in and referencing any repo with that ID. Let that sink in.

You may have noticed that the authors repo doesn’t have an e1d6 commit. You might also know that elephants never forget. Pachyderm remembers all the commits that fed into aggregated_recipes@e1d6 and can resolve authors@e1d6 to authors@c843, the real authors commit that was used to produce aggregated_recipes@e1d6. Pretty slick, huh?

If you made it this far, raise your trunk and sound your trumpet! 🐘 🎺

So how does Pachyderm represent all this under the hood? Each time a commit is submitted to the system, Pachyderm uses the current DAG definition (materialized internally by all of the individual pipeline definitions) to add new commits to the output repos of all pipelines that are downstream of the repo with the new commit. As Pachyderm orchestrates the processing of the workflow, it populates these commits and closes them. When initially creating each of these “downstream” commits, Pachyderm stores pointers to all of the input commits, which can later be used to trace back a commit’s lineage.

How does this work in the context of our example? When the recipes@e1d6 commit is created, Pachyderm detects all “downstream” repos, in this case just aggregated_recipes, and creates the commit aggregated_recipes@e1d6. When this “downstream” commit is created, Pachyderm internally records all of its input commits, i.e. recipes@e1d6 and authors@c843. When a user later requests to access authors@e1d6, Pachyderm first looks to see if there’s a real commit by that name. Since such a commit doesn’t exist, Pachyderm performs a graph traversal up the lineage links to find whether a commit from authors can be found along some path. To resolve authors@e1d6, Pachyderm traverses the lineage edges starting with the real e1d6 commits and discovers the commit authors@c843 is an input to aggregated_recipes@e1d6.
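The lookup described above can be sketched as a small breadth-first search over the recorded input pointers. This is a toy reconstruction from the description in this article, not Pachyderm’s actual implementation. The PROVENANCE table and the resolve_commit function are hypothetical names.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Commit = Tuple[str, str]  # (repo, commit ID)

# Every real commit, mapped to the input commits recorded when it was created.
PROVENANCE: Dict[Commit, List[Commit]] = {
    ("recipes", "e1d6"): [],
    ("authors", "c843"): [],
    ("aggregated_recipes", "e1d6"): [("recipes", "e1d6"), ("authors", "c843")],
}


def resolve_commit(repo: str, global_id: str) -> Optional[Commit]:
    """Resolve repo@global_id to a real commit, following lineage if needed."""
    # 1. Is there a real commit by that name?
    if (repo, global_id) in PROVENANCE:
        return (repo, global_id)

    # 2. Otherwise start from the real commits carrying this ID and walk
    #    up the input pointers until a commit from `repo` turns up.
    queue = deque(c for c in PROVENANCE if c[1] == global_id)
    seen = set(queue)
    while queue:
        commit = queue.popleft()
        for parent in PROVENANCE.get(commit, []):
            if parent[0] == repo:
                return parent
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return None  # nothing in this lineage came from `repo`


resolved = resolve_commit("authors", "e1d6")
```

Here resolve_commit("authors", "e1d6") finds no real authors@e1d6, starts from recipes@e1d6 and aggregated_recipes@e1d6, and lands on authors@c843 through the inputs recorded for aggregated_recipes@e1d6.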

Knowledge is power, and so are names

As Pachyderm demonstrates, when complicated lookup problems can be reframed in terms of naming, an otherwise convoluted system becomes elegant.

Thanks for reading ❤️