When you think of machine learning’s biggest breakthroughs in the last decade, what comes to mind?

- AlexNet dominating ImageNet in 2012 and unleashing the era of deep learning?

- Self-driving cars navigating the complex and chaotic streets of a big city?

- Massive transformers with a trillion parameters?

- Scanners recognizing your face when you go through the airport?

- GANs generating photorealistic people and faces?

- A bot recognizing what you said when you call a help line?

What do all of those breakthroughs have in common?

They’re all built on the back of unstructured data.

Unstructured data is the unsung hero of machine learning.

What’s unstructured data? Think video, audio, images, and big chunks of text.

It’s anything that doesn’t fit neatly into the rows and columns of a database or a spreadsheet. It’s the messy kind of data that humans generate as easily as we breathe. It’s Instagram posts, the music on Spotify, satellite images of chaotic weather systems, Wikipedia articles, your voice as you ask your phone for directions, movies and TV streams, all those doctor’s notes and CT scans, emails and PDFs and more.

No doubt we’ve had some amazing breakthroughs with structured and semi-structured data too, like AlphaGo beating one of the world’s best Go players 4-1. A board game can be represented simply as structured data, a series of positions and moves. But more complex games, like Dota 2, the real-time strategy game mastered by OpenAI Five, are whirlwinds of unstructured data coming fast and furious.

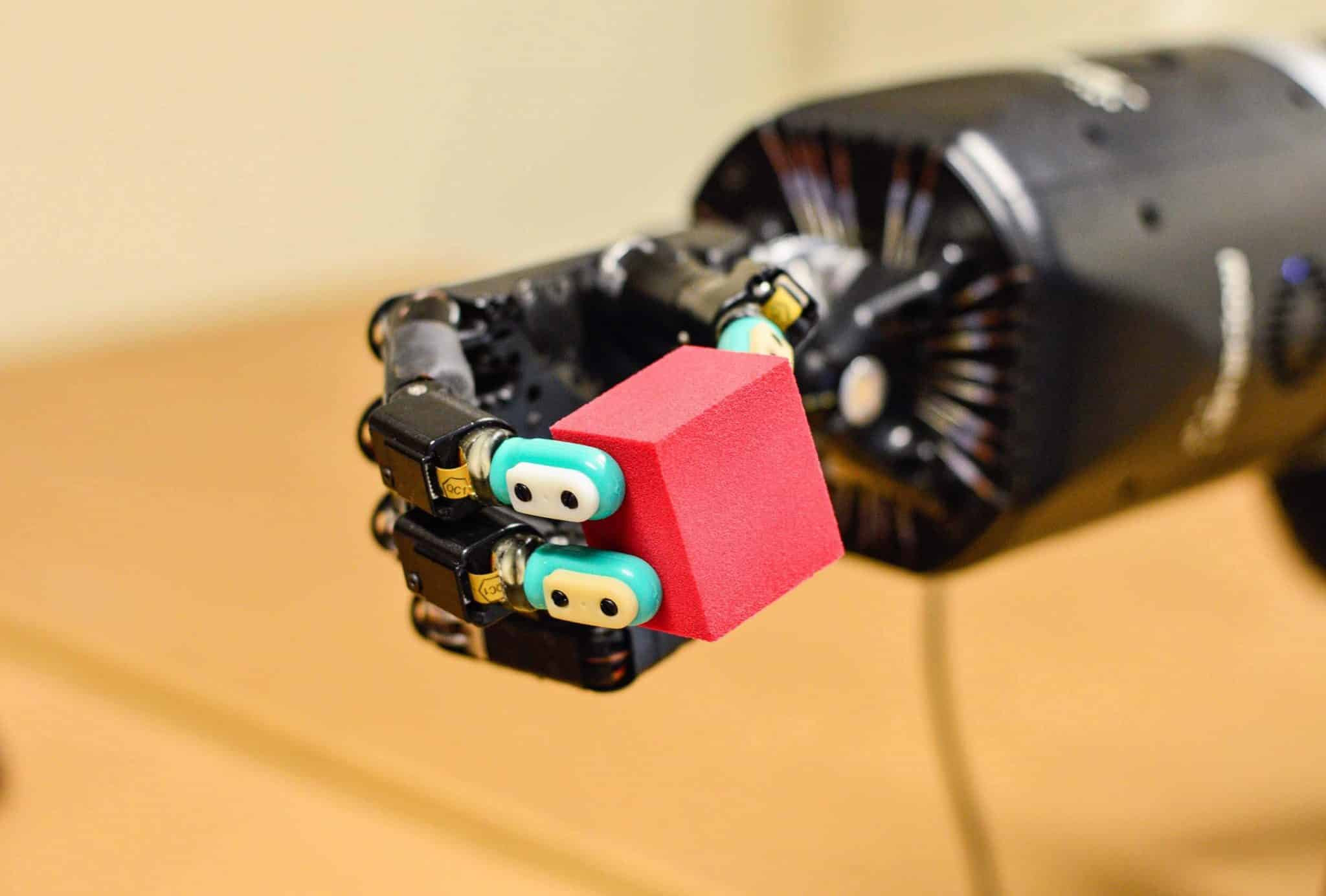

The biggest breakthroughs of machine learning in the past decade, from computer vision to NLP, came from understanding the world through that messy data of real life. It’s unstructured data that’s powering IBM’s autonomous boat across the high seas or MIT’s boat through the challenging canals of Amsterdam. It’s computer vision that’s recognizing you in Google Photos, powering that Tesla along a highway hands-free and guiding robotaxis through the streets of an urban village in China, packed with people. It’s speech recognition and NLP that understand what you said when you call a help line.

Structured data drove the explosive growth of the web, along with the hand-coded logic of brilliant programmers. But it’s unstructured data and the auto-generated logic of machine learning algorithms that are powering the next generation of the web, apps and beyond.

It’s just in time too, because an estimated 80% of the data in enterprises is unstructured and we’ve left it untapped for decades.

Why has unstructured data been so challenging to work with?

Because it took the rise of machine learning for us to learn how to tap into that data in the first place.

We could take pictures of the Earth from space with satellites but we couldn’t write hand-coded logic to recognize roads and buildings in that image. We could record a call, but we couldn’t understand what someone was saying when they called into a help line.

That’s because there are limitations to what hand-coded logic can do. When a programmer writes a program, they’re coming up with all the possibilities of what can happen when you click and drag or type a command. They plan out all the steps and write the code to make it possible. If this happens, then do this step, else do that step. That got us to where we are today because we can write hand-coded logic for so many tasks, like running operating systems, drawing text on your screen and uploading photos to Instagram.

But there are some tasks where we just can’t figure out the hand-written logic. You can’t tell a computer how to recognize a cat in an image. You can say “well it has fur and whiskers and paws” but what are whiskers and paws and fur to a computer?

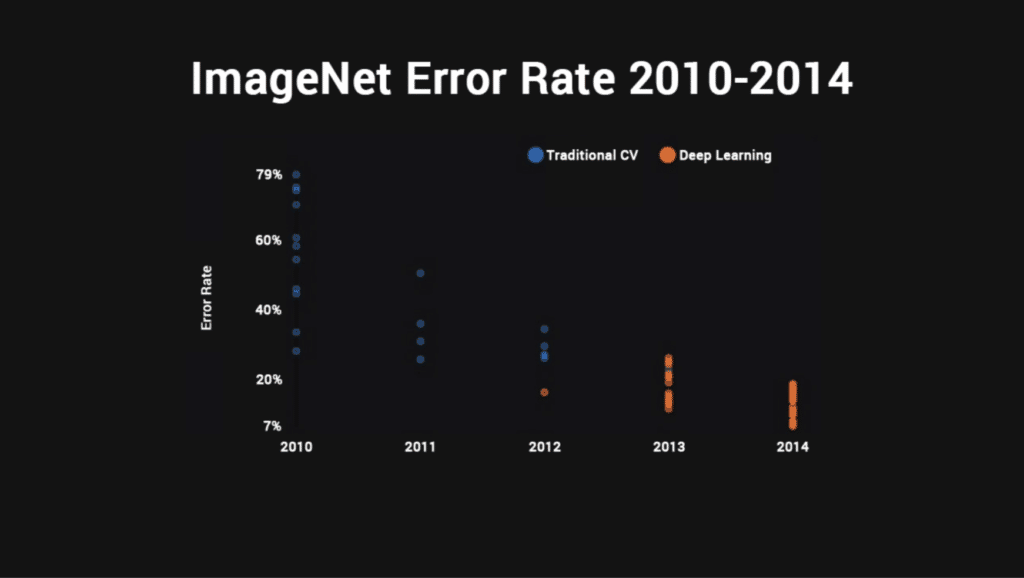

Luckily, we can let the computer learn its own rules for recognizing a cat in images. AlexNet was a breakthrough because it was the first time a neural network put human-level object recognition within reach on a large set of images. In 2011, the best error rate was a pathetic 26%, with the worst error rate a horrific 79%!

AlexNet dropped it to 15%.

Within two years, nearly everyone was using neural nets and the error rate had more than halved again, to about 7%.

A year after that, the algorithms had surpassed human-level performance, and by 2017 the competition had run its final round.

Since then we’ve seen a rapid rise in AI capabilities, as the big tech companies and research institutes around the world pushed the boundaries of what’s possible again and again.

But we’re still struggling to deal with unstructured data. Most of the tools for machine learning are built to deal with structured data.

Why has machine learning focused on structured data, when unstructured data is so powerful?

If 80% of the data is unstructured, why are 80% of the tools built to handle only structured data?

Because it’s easier.

We’ve been dealing with structured data for a long time. We know how to handle it. It fits neatly into the rows and columns of a spreadsheet or the tables of a classical database. Databases have formed the backend of software for decades and they’ve helped us grow to our current level of technological advancement. We’ve learned how to scale them and distribute them across clusters. We’ve gone from scale-up, single-machine databases, like DB2 and Oracle, to clustered databases, to distributed scale-out databases like Snowflake and Amazon Redshift.

Tools like Apache Spark, the engine Databricks is built around, were created before the AlexNet breakthrough to deal with structured data at scale. And clearly, there are a lot of machine learning use cases that use structured data, like churn prediction in shopping carts and predicting what movie you want to watch next on Netflix or even stock trading.

But tapping into the other 80% of data is where tomorrow’s breakthroughs will come from, whether that’s drones spotting crops under attack from pests, automatically syncing lips in video to dubbed voiceovers in movies, translating language in real time when you’re in France and nobody speaks a word of English, or generating photorealistic models for catalogs when hiring enough models to show every piece of clothing would be prohibitively expensive.

We’re just learning how to learn from that data. It’s an entirely new branch on the tree of software development. We don’t have 40 years of history to draw from and that makes it harder.

Historically, we’ve stored unstructured data in shared file systems like NFS or more recently in object stores like AWS S3. We’ve come to call object stores data lakes. That’s where companies store most of their unstructured data in its native format, whether it’s an MPEG or a JPEG or a text file with a novel or a financial report. So it seems natural that we should just store it in a data lake and call it a day.

But building machine learning pipelines that pull from data lakes can be problematic. Databases have the advantage of giving us lightning fast lookups. If you put ten million little JPEGs in a filesystem, performance can degrade fast as you try to query them or look them up. The more files you throw at it, the bigger the problem gets as the file system tries to index them and find them. If you’re trying to train your machine learning model on 50 million images you’ve got to be able to move through them quickly and the lookup limitations of a traditional file system just won’t cut it. If it takes 5ms to stat a file and you have a billion files in your file system, it will take about 58 days just to get an understanding of what you have.
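The stat arithmetic is easy to sanity check. Here’s a minimal sketch, assuming the files are scanned one at a time; the per-call latencies are illustrative assumptions, not measurements. At 5ms per call, a billion files works out to about 58 days, and at 55ms per call it’s closer to 637 days.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def days_to_stat(num_files: int, latency_ms: float) -> float:
    """Days needed to stat every file once, one call at a time."""
    total_seconds = num_files * latency_ms / 1000
    return total_seconds / SECONDS_PER_DAY


# The per-call latencies below are illustrative assumptions, not measurements.
for latency_ms in (0.05, 5, 55):
    days = days_to_stat(1_000_000_000, latency_ms)
    print(f"{latency_ms} ms per stat -> {days:,.1f} days for a billion files")
```

Either way the lesson is the same: a serial walk over a billion small files is measured in weeks or months, not minutes.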

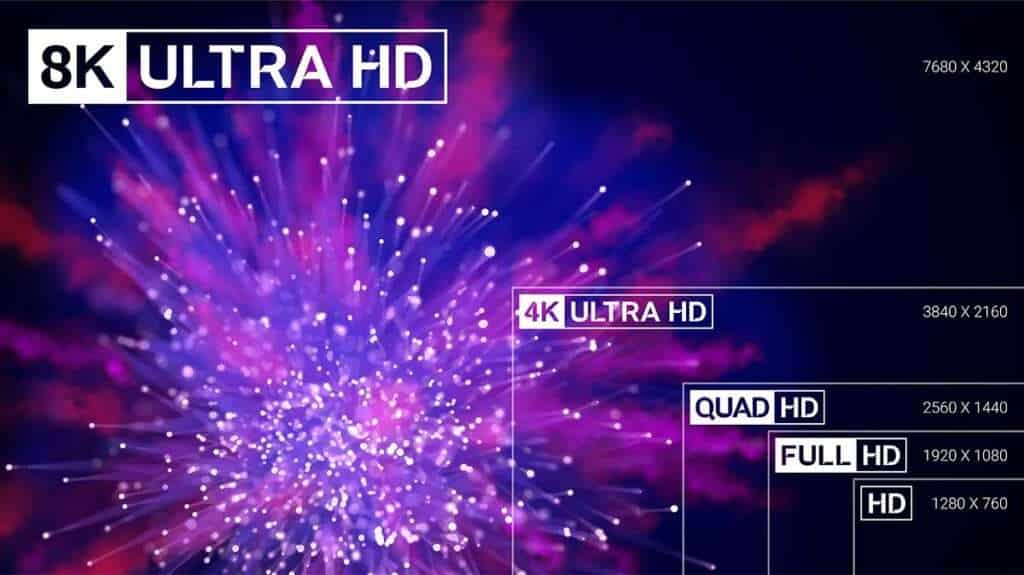

Some engineering teams tried to solve that problem by shoving those files into a database. That’s the approach Databricks took with their Lakehouse architecture. It puts unstructured data into Parquet files. But if you look closely at their examples, you see they’re about 90% structured and semi-structured. There are no high-resolution images or video files among them. That’s because putting unstructured files into a database can work if they’re small and manageable, like semi-structured JSON files or Python code, but it starts to fall apart quickly when you’re pushing 500GB high-resolution satellite images into a database, or MP4 audio files, or 4K video or anything else that’s big and unwieldy. Writes get terribly slow and you have to slice those images up into smaller files just to put them into the DB in the first place. Reads are slow too as they try to fetch that data back and reassemble it in a format not designed to handle that kind of data.

We need a new architecture to handle the explosion of unstructured data and turn it into brilliant new insights with machine learning.

And it requires a fundamental rethink of how we store and access that data.

How to Build Machine Learning Infrastructure for Unstructured Data from the Ground Up

If you’re still struggling with unstructured data, it’s probably because you’ve got a platform built for structured data. Unstructured data was an afterthought.

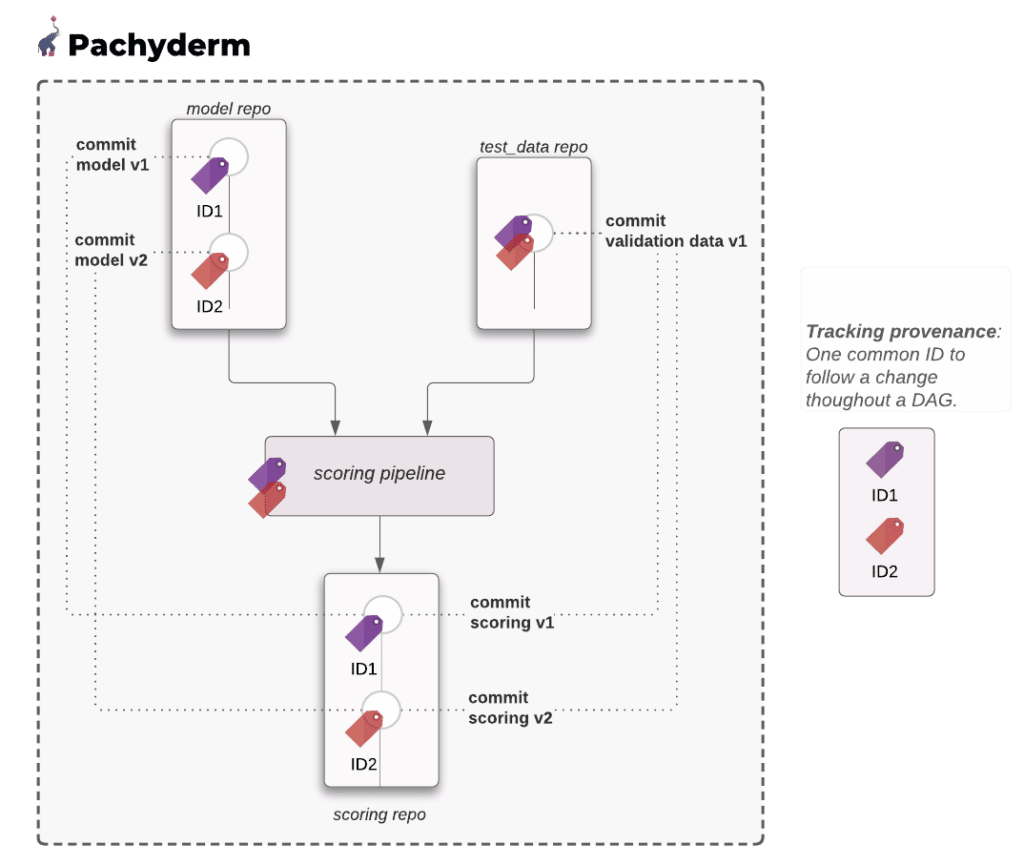

At Pachyderm we solved the problem by using a hybrid approach that combines object storage, a copy-on-write style filesystem for snapshotting and a Git style database that indexes everything.

That hybrid approach takes advantage of the nearly unlimited scalability of cloud object stores, like S3, that deliver wire speed performance to any app, no matter where it lives on that cloud. We use the object store but we abstract it away from the user with the Pachyderm filesystem.

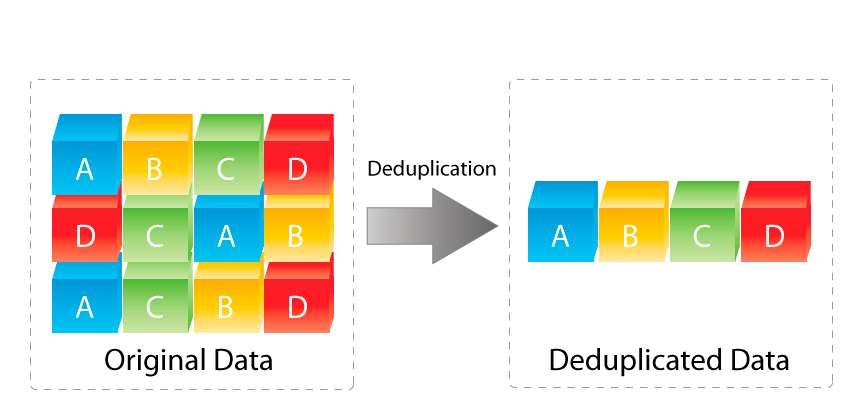

Why add our own filesystem? Simple, because it deduplicates the data, intelligently chunks it and reshuffles it as needed. That allows for lightning fast versioning and snapshots.
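To make the deduplication idea concrete, here’s a toy sketch of a content-addressed chunk store. It is not Pachyderm’s implementation: the `ChunkStore` class, the fixed-size chunking and the choice of SHA-256 are all assumptions made for illustration. The point is only that when two versions of a file share most of their chunks, the shared chunks are stored once.

```python
import hashlib


class ChunkStore:
    """Toy content-addressed store: identical chunks are kept only once."""

    def __init__(self, chunk_size: int = 4) -> None:
        self.chunk_size = chunk_size
        self.chunks: dict[str, bytes] = {}  # sha256 hex digest -> chunk bytes

    def put(self, data: bytes) -> list[str]:
        """Split data into fixed-size chunks and return their hashes in order."""
        hashes = []
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(digest, chunk)  # store only if unseen
            hashes.append(digest)
        return hashes

    def get(self, hashes: list[str]) -> bytes:
        """Reassemble a file from its ordered chunk hashes."""
        return b"".join(self.chunks[h] for h in hashes)


store = ChunkStore(chunk_size=4)
v1 = store.put(b"AAAABBBBCCCC")
v2 = store.put(b"AAAABBBBDDDD")  # a new version that changes only the last chunk
print(len(store.chunks))  # 4 unique chunks stored, not 6
```

A new version costs only the chunks that actually changed, which is what makes keeping many versions cheap.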

The database backend, running in Postgres, knows where everything lives and it understands how the code, data and model are all connected in that snapshot. It’s a super-index, which takes advantage of what databases do best, fast lookups, while leaving unstructured data where it works best, in an object store. You don’t have to change the format of the files or alter them in any way or come up with a structured wrapper of metadata to hold it.
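Here’s a rough sketch of that split between index and storage. It uses Python’s built-in `sqlite3` as a stand-in for Postgres, and the `file_index` table, its columns and the object keys are all hypothetical, not Pachyderm’s schema. The database only records where each file lives at each commit; the bytes themselves never enter it.

```python
import sqlite3

# In-memory database standing in for the metadata index.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE file_index (
        commit_id  TEXT NOT NULL,
        path       TEXT NOT NULL,
        object_key TEXT NOT NULL,   -- where the bytes live in the object store
        size_bytes INTEGER NOT NULL,
        PRIMARY KEY (commit_id, path)
    )
    """
)

# Hypothetical rows: the files themselves stay in the object store untouched.
conn.executemany(
    "INSERT INTO file_index VALUES (?, ?, ?, ?)",
    [
        ("c1", "/scans/ct-001.dcm", "objects/ab/12cd", 52_428_800),
        ("c1", "/scans/ct-002.dcm", "objects/9f/77e0", 48_234_496),
        ("c2", "/scans/ct-001.dcm", "objects/ab/12cd", 52_428_800),  # unchanged
        ("c2", "/scans/ct-002.dcm", "objects/31/c4aa", 48_300_032),  # updated
    ],
)


def locate(commit_id: str, path: str):
    """Return the object-store key for a path at a given commit, or None."""
    row = conn.execute(
        "SELECT object_key FROM file_index WHERE commit_id = ? AND path = ?",
        (commit_id, path),
    ).fetchone()
    return row[0] if row else None


print(locate("c2", "/scans/ct-002.dcm"))  # objects/31/c4aa
```

The lookup is a primary-key query, so it stays fast no matter how large the files it points to are.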

Of course, it’s one thing to take a snapshot, but if you don’t know what’s in that snapshot it’s not very helpful. Pachyderm uses a Git-like approach to track the lineage of what lives in those snapshots, how the data changed and how it relates to everything else as you move from ingestion to production model.
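If you’ve never looked under the hood of Git, the core idea is simple: every snapshot records the snapshot it came from. The sketch below shows that idea in miniature. The `Commit` class and `lineage` function are hypothetical names for illustration, not Pachyderm’s API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commit:
    """A snapshot record that points back at the snapshot it came from."""
    commit_id: str
    message: str
    parent: Optional["Commit"] = None


def lineage(commit: Commit) -> list[str]:
    """Walk parent pointers from a commit back to the first snapshot."""
    history = []
    current: Optional[Commit] = commit
    while current is not None:
        history.append(f"{current.commit_id}: {current.message}")
        current = current.parent
    return history


raw = Commit("c1", "ingest raw videos")
cleaned = Commit("c2", "drop corrupted files", parent=raw)
model = Commit("c3", "train detector on cleaned data", parent=cleaned)

for entry in lineage(model):
    print(entry)
```

Starting from the production model, you can walk all the way back to the raw data it was built from.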

The biggest advantage of today’s cloud storage is that, for all practical purposes, it’s infinite. In old copy-on-write filesystems, like ZFS, you were limited by how much storage you had and it was all very manual. If you were an administrator, you took the snapshot before you did something important, just in case anything went wrong. You knew what was in that snapshot because you made it and it was transient. You did the updates and if everything went well, you merged or deleted the snapshot. But in a system that can keep thousands of versions of your data, models and code, you can’t expect to remember what’s in all of them days or months later.

In Pachyderm, versioning and snapshotting happen automatically, and lineage tracking happens automatically too. That means you have your snapshots and you know what’s in them.

Too many systems force a developer or data scientist to manually take snapshots. That’s a losing strategy. Anyone who’s ever managed a backup system knows you forget to take manual backups consistently and bad things have a tendency to happen when you least expect them. Suddenly, you need a backup, but your last one was days or weeks ago and you just lost all the changes in between. You want that backup system running automatically, so it’s always there when you need it.

Why Versioning is Essential with Unstructured Data

Machine learning also demands long-lived versioning and snapshots. You don’t go back and delete old versions of code in Git. So why would you delete old versions of your data, other than to save on space? With today’s cheap and abundant storage, you can keep thousands of deduped versions of the data for years and never run up a big bill.

Yet too many platforms still see versioning of data as a short lived proposition.

By default, Databricks keeps old versions for seven days and then deletes them altogether after 30 days. They see versioning as something you only need while you’re experimenting, but it’s so much more than that.

Versioning Machine Learning Models for Unstructured Data

Once a model goes into production, you may discover anomalies and edge cases months later and then you need to go back and retrain on the exact data you had, with the exact set of code and libraries you used. You may also have auditing requirements that require you to go back in time and remove some data from a dataset and then retrain from that point forward, with only the incremental changes instead of having to start all over.

Even worse, datasets have a way of changing on you and that can spell disaster when you want to train a new version of a model. One team member sorts it differently, or updates a header, or deletes a bunch of videos or changes their format. Even small changes in the input can have big effects on the output. It’s the butterfly-starts-a-typhoon-in-China problem.

Imagine an administrator changed the encoding on videos. You already have a model trained on the original versions of those videos and now you need to update it. The new training may produce radically different results in object detection because one lossy format may not look very different to a human eye but it can look very different to a machine’s “eyes.”

There’s also the problem that when humans try to keep track of versions, things can get ugly fast. If you make too many copies of the data, which dataset did you use to train the model and what version of Anaconda was that when you first ran it? Is it untitled-8 or untitled-10? Without lineage tracking the whole way through, you may produce a very different model when all you wanted was to make a few simple changes.
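One simple way to take humans out of that loop is to fingerprint the dataset itself. The sketch below is a generic illustration, not how Pachyderm does it: the `fingerprint` function and the in-memory file dictionaries are hypothetical. It hashes every path and its contents into a single digest, so a re-encoded video changes the fingerprint even when the file names stay the same.

```python
import hashlib


def fingerprint(files: dict[str, bytes]) -> str:
    """Hash every path and its contents into one dataset-level digest."""
    digest = hashlib.sha256()
    for path in sorted(files):  # sorted so listing order never changes the result
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")
        digest.update(hashlib.sha256(files[path]).digest())
    return digest.hexdigest()


# Hypothetical in-memory dataset; real files would be read from storage.
original = {"clips/a.mp4": b"h264-bytes", "clips/b.mp4": b"h264-more"}
reencoded = {"clips/a.mp4": b"h265-bytes", "clips/b.mp4": b"h264-more"}
reordered = dict(reversed(list(original.items())))

print(fingerprint(original) == fingerprint(reordered))   # True: same data
print(fingerprint(original) == fingerprint(reencoded))   # False: contents changed
```

Store that digest next to the trained model and you never have to guess between untitled-8 and untitled-10 again.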

Data-Centric ML with Unstructured Data

Then again, you may want to change your data. Changing your data may be a perfect solution to making your model smarter.

Let’s take an example from machine learning pioneer Andrew Ng. As Ng says in his talk “From Model Centric to Data Centric,” you can throw a number of different algorithms at a dataset and get largely the same results. The problem is more likely the data. If you had a distributed team label that data, those people may interpret your instructions very, very differently. If you refine your instructions and have them go back and fix the bounding boxes on 30% of the files you may get a 20% performance improvement because the boxes are now more standard and uniform. But to make those changes you want to go back to the exact dataset you used and change only those files and then incrementally retrain. To do that you have to know exactly what changed and only retrain on what’s new. In a big dataset that can make all the difference in the world because you don’t want to retrain on a petabyte of data when only 30% of it changed.
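Knowing exactly what changed boils down to comparing two versions of the dataset. Here’s a minimal sketch, assuming each version is summarized as a manifest that maps a file path to a content hash. The `changed_paths` function, the paths and the hash values are all made up for illustration.

```python
def changed_paths(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """Compare two manifests (path -> content hash) and report what differs."""
    return {
        "added": sorted(p for p in new if p not in old),
        "removed": sorted(p for p in old if p not in new),
        "modified": sorted(p for p in new if p in old and new[p] != old[p]),
    }


# Hypothetical manifests for a labeled image dataset before and after relabeling.
before = {"img/001.json": "h1", "img/002.json": "h2", "img/003.json": "h3"}
after = {"img/001.json": "h1", "img/002.json": "h2-fixed", "img/004.json": "h4"}

diff = changed_paths(before, after)
to_reprocess = diff["added"] + diff["modified"]
print(to_reprocess)  # ['img/004.json', 'img/002.json']
```

Only the files in `to_reprocess` need to flow back through labeling checks and training, while everything else can be reused as is.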

But it’s not just the data management and versioning that matters. When you have a lot of images or video or audio you can’t try to cram them all into a single computer or container on the cloud. You need a system that can intelligently scale your workload and slice up the processing into easily digestible chunks, without you having to write a lot of boilerplate code to make it happen. Pachyderm does that for you too and that’s one of the reasons so many companies turn to us to speed up the Herculean task of crunching through petabytes of unstructured data.

It’s not unusual for companies to try to work through all those files linearly, stuffing them all into the biggest container they can buy on Azure, Amazon or Google, only to have the processing take seven weeks. That’s what LogMeIn did as they processed audio data from their call centers. Pachyderm automatically chopped up the data and processed it in parallel, which took them from seven weeks to seven hours.

Pachyderm leverages Kubernetes on the backend to scale the processing without you having to write a lot of filler code. It just does it automatically and can cut your processing time by an order of magnitude or more.
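To see why chopping up the work helps, here’s the general pattern in miniature. This is a generic sketch that uses threads inside one Python process, not Pachyderm’s API and not Kubernetes; the `shard` and `process_shard` functions and the file names are hypothetical. It is also exactly the kind of boilerplate you’d otherwise end up writing and maintaining yourself.

```python
from concurrent.futures import ThreadPoolExecutor


def shard(items: list[str], num_shards: int) -> list[list[str]]:
    """Deal items round-robin into num_shards roughly equal groups."""
    return [items[i::num_shards] for i in range(num_shards)]


def process_shard(paths: list[str]) -> int:
    """Placeholder for real work such as transcribing audio; counts files here."""
    return len(paths)


# Hypothetical file names standing in for call-center recordings.
recordings = [f"calls/{n:04d}.wav" for n in range(1000)]
shards = shard(recordings, num_shards=8)

# Each shard is handled by its own worker instead of one long serial loop.
with ThreadPoolExecutor(max_workers=8) as pool:
    processed = sum(pool.map(process_shard, shards))

print(processed)  # 1000
```

Swap the threads for containers spread across a cluster and the same split-and-process idea carries over to petabytes.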

But no matter how you cut it, unstructured data in machine learning demands a different architecture, not a retrofitted architecture of the past.

Putting massive video files and imagery and audio into a database is a square peg in a round hole. It doesn’t work and it won’t scale. You need something new.

The Key to Tomorrow’s Machine Learning Powerhouses

Once you have that new architecture in place, you have the keys to unlocking a treasure trove of new insights.

As companies dig deeper into all those emails and audio files and PDFs and images and videos, they discover new AI-driven business models that simply couldn’t exist without machine learning at their core.

When we talk about AI-driven businesses, a great example is one we mentioned earlier: generating photorealistic models for online clothing catalogs. When you hire fashion models today, you may have 5,000 pieces of clothing to show, so you hire a range of models with different ethnicities and body shapes to show the broadest range of possible customers what they would look like in those fabulous clothes. But most companies won’t have the capital to show all the permutations of all those clothes on every model. With machine learning you can do exactly that: generate a perfectly realistic model for every kind of person who might visit the site and dynamically show any outfit on that model.

That business model simply can’t exist without AI and without the unstructured data it learned from to generate those photorealistic fashion icons.