Accelerate productivity by processing data 40% faster

It’s no secret that ML workloads are getting larger and larger, primarily because datasets are growing to petabytes and beyond. The driver behind this is unstructured data, which, according to Datamation, makes up 80% of all data and is growing at 55 to 65% a year.

All of this means that working with large unstructured datasets for machine learning or data operations can create performance and budgetary hurdles because of the enormous time and compute required. In fact, research indicates that nearly half (48%) of all ML practitioners struggle with data processing.

Is it possible to process data faster and reduce costs?

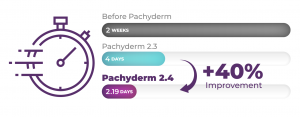

With the release of Pachyderm 2.4, you can do just that! Our tests indicate that customers can realize a performance improvement of up to 40% over our prior release, with the potential to save tens of thousands of dollars over the course of the next year.

Here’s a look at the performance gains projected with one of our health insurance customers. Note that by using Pachyderm, this particular customer saw a reduction in processing times from two weeks to roughly two days.

Our latest release leverages advanced compaction and operational improvements, enabling data engineers to tackle a wider range of data processing and ML use cases. You can process data quickly regardless of shape or size, whether it be small volumes of enormous files (1 GB or more) or small files (100 MB or smaller) in high volume (billions of records).

Accelerated processing for large quantities of small files

Pachyderm has always been good at processing large files. To speed up workloads made of many small files, we implemented distributed metadata operations for pre-processing and post-processing a job so that the entire pipeline or DAG completes faster. Multiple workers now process chunks of metadata in parallel, whereas previously a single worker did this work. Metadata pre-processing determines which files are new or changed, so Pachyderm can quickly assess the workload and process only the new or changed data. Metadata post-processing handles compaction and file validation.
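To make the pre-processing idea concrete, here is a minimal Python sketch of finding new or changed files by comparing content hashes, with the listing split across several workers. It is an illustration only and not Pachyderm's implementation: the names `fingerprint`, `diff_chunk`, and `changed_files`, the use of SHA-256, and the in-memory dictionaries standing in for stored metadata are all assumptions.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor


def fingerprint(content: bytes) -> str:
    """Return a content hash; stands in for stored per-file metadata (assumption)."""
    return hashlib.sha256(content).hexdigest()


def diff_chunk(paths, previous, current):
    """Return the paths in this chunk that are new or whose hash changed."""
    return [p for p in paths if previous.get(p) != current[p]]


def changed_files(previous, current, workers=4):
    """Split the current file listing into chunks and diff the chunks in parallel."""
    paths = sorted(current)
    # Round-robin split so each worker gets a similar share of the metadata.
    chunks = [paths[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(
            diff_chunk, chunks, [previous] * workers, [current] * workers
        )
        return sorted(p for chunk in results for p in chunk)


# Hypothetical metadata for two successive versions of a repo.
previous = {"a.csv": fingerprint(b"1"), "b.csv": fingerprint(b"2")}
current = {
    "a.csv": fingerprint(b"1"),
    "b.csv": fingerprint(b"2 edited"),
    "c.csv": fingerprint(b"3"),
}
print(changed_files(previous, current))
```

Only `b.csv` (changed) and `c.csv` (new) would be handed to the processing step; `a.csv` is skipped because its hash is unchanged.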

In 2.4, Pachyderm adds a data chunk pre-fetcher that parallelizes the downloading (ingress) of data into a pipeline step. Because data is chunked in object storage, the pre-fetcher improves file download times for small and medium files. In addition, compaction, a process that reorganizes files for optimal read access in downstream pipeline steps, was further optimized using a leveled compaction algorithm, which speeds up access to files in object storage.

Pachyderm 2.4 also includes other improvements that we think will benefit all our customers, such as a redesigned Console and the general availability (GA) of the JupyterLab Mount Extension.
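The general idea behind a parallel pre-fetcher can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Pachyderm's code: `fetch_chunk` is a hypothetical stand-in that simulates an object-storage read with a short sleep, and `prefetch` and `max_parallel` are names invented for the example.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_chunk(chunk_id: int) -> bytes:
    """Simulated object-storage read; the sleep stands in for network latency."""
    time.sleep(0.05)
    return f"chunk-{chunk_id};".encode()


def prefetch(chunk_ids, max_parallel=8):
    """Download chunks concurrently and return them in the requested order."""
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map yields results in input order, so chunks can be
        # reassembled into files without extra bookkeeping.
        return list(pool.map(fetch_chunk, chunk_ids))


chunks = prefetch(range(16))
data = b"".join(chunks)
print(len(chunks), len(data))
```

Because each simulated read spends its time waiting rather than computing, issuing several reads at once shortens the total wait compared with fetching the chunks one after another, which is why this pattern helps most when there are many small objects.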

Improved Console Experience

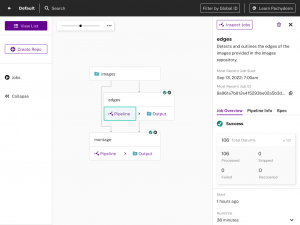

This release includes improvements to our GUI (Console), such as an improved DAG visualization for complex pipelines and a redesigned side panel that improves the debugging experience.

New combo node and redesigned side panel in Pachyderm’s Console

Combo nodes in Pachyderm’s Console enable users to more easily visualize long, complex DAGs. These nodes combine both a pipeline step and an output repo into one object, reducing the number of objects in a DAG and making it easier for you to traverse all aspects of a DAG.

New screens within Console provide more granular insight into data processing status, making it easier to access the relevant logs and statistics to facilitate debugging. Both DAGs and logs are visually easier to comprehend and navigate because they surface the specific location where an error occurred, so you don't have to go through multiple screens or parse extraneous information.

Finally, a side panel info redesign presents Pachyderm metrics in a logical manner and enables easier navigation to further drill down into the system’s performance.

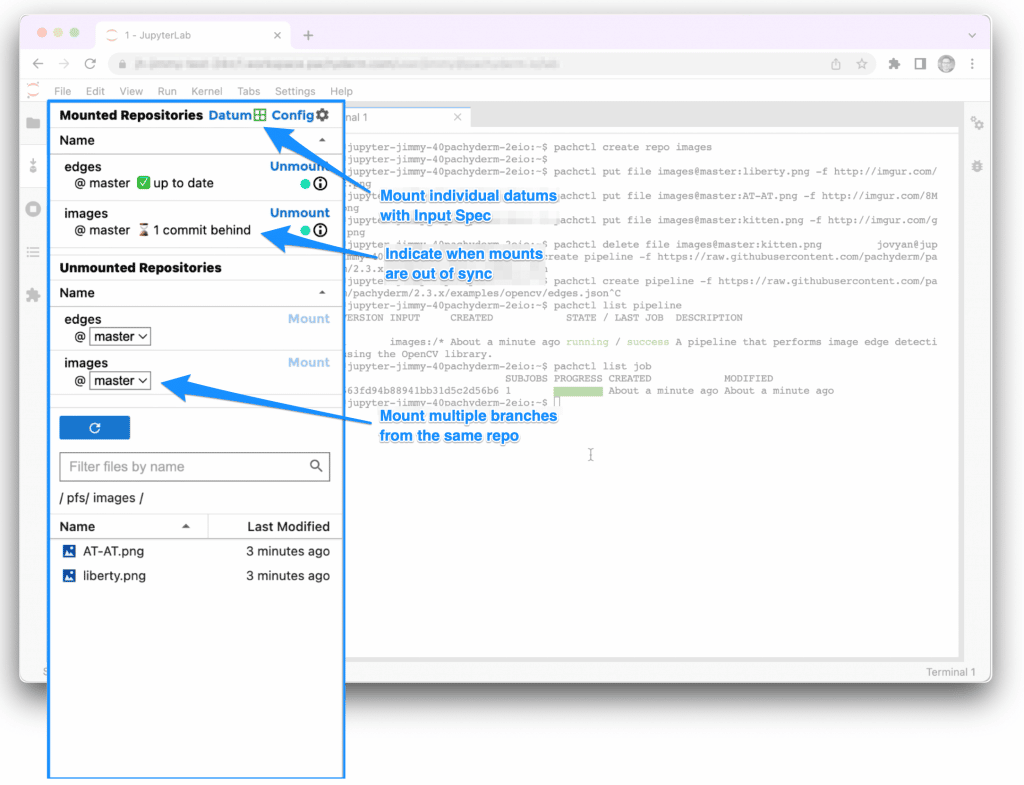

General Availability of JupyterLab Mount Extension

Back in March, we announced the beta launch of our JupyterLab Pachyderm Mount Extension. The goal of the extension was simple – make it feel like versioned data in Pachyderm is on your computer. This feature has been hugely useful for experimenting with and understanding your data in a Jupyter notebook.

In the newest release, we take the data experience one step further. We want to simulate Pachyderm’s pipeline environment in JupyterLab. Prototyping against the data your pipeline will see simplifies the development process immensely. And this is what we’ve done by adding support for mounting datums.

This integration saves you time and allows less technical users to access versioned datasets in notebooks. Read more in our docs.

Summary

We constantly endeavor to increase the value we provide to customers, and release 2.4 is our most performant and scalable release yet. Customers can reduce their data processing time by up to 40%, which in turn can directly reduce cloud spend. The bigger and more complex the workload, the better the savings.

The features in release 2.4 enable use cases that were previously cost-prohibitive and reduce the time spent debugging pipelines, resulting in increased productivity. Integration with JupyterLab simplifies access to data and development. For a comprehensive list of features, read the release notes or register for our release webinar.

Are you new to Pachyderm and searching for a data pipelining or MLOps solution? Take the next step and schedule a demo tailored to your environment.