You may have discovered that machine learning (ML) models are never really ‘done’. Instead, the ML lifecycle is a continuous process of adding more data, tweaking the model, and evaluating the results. Two requirements drive this cycle. First, you need a significant amount of data, sometimes petabytes, to train the model properly. Second, you can only identify which algorithms work best through repeated experimentation.

Ingesting large datasets and running complex data transformations both take time. This is especially true with unstructured data such as video and images because the datasets are often enormous. The time required to run and re-run these projects can be prohibitive, which often means less experimentation and smaller datasets. That’s an antipattern that goes against the iterative nature of the ML lifecycle. The answer to this conundrum is parallel or distributed processing, which lets you process very large datasets, even at petabyte scale, far more quickly.

With parallelization, you can ingest and process datasets, and run entire pipelines, more quickly. More importantly, you can increase experimentation and train your model on all of your data rather than just a subset.

We can explore this further by diving into some key concepts:

What is Parallel Processing or Parallelization?

Let’s start by examining serial processing, the default approach. Typically, a workload consists of a set of jobs. These jobs are executed in sequence, one after the other, to complete the workload. When a single worker completes these jobs in sequence, that is serial processing.

The challenge with serial processing is that there’s only so much you can do on one machine, even the largest, most powerful machine available. Given the enormous amount of data used in machine learning, running through all the jobs in a workload sequentially could easily take weeks.

With parallel processing, a job itself is broken up into multiple, smaller chunks of work, each of which can be processed independently. Multiple workers can then process those chunks at the same time, starting in sync and ending in sync.
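To make the idea concrete, here is a minimal Python sketch of one job being split into chunks, handed to several workers, and recombined. The function names (`split_into_chunks`, `process_chunk`, `run_parallel`) and the sum-of-squares workload are illustrative assumptions, not taken from any particular tool.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, num_chunks):
    """Split a list into num_chunks contiguous, roughly equal parts."""
    size, extra = divmod(len(items), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `extra` chunks each take one additional item.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def process_chunk(chunk):
    """Stand-in for real work: sum the squares of one chunk."""
    return sum(x * x for x in chunk)


def run_serial(items):
    """One worker processes the whole job."""
    return process_chunk(items)


def run_parallel(items, num_workers=4):
    """Several workers each process one chunk; partial results are combined."""
    chunks = split_into_chunks(items, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partial_results = list(pool.map(process_chunk, chunks))
    return sum(partial_results)


data = list(range(1, 11))
print(run_serial(data), run_parallel(data))  # both totals should match
```

Threads keep this sketch simple. For CPU-bound work in CPython, `ProcessPoolExecutor` offers the same `map` interface and is usually the better fit, and across machines the same split, process, and combine pattern is handled by a distributed framework.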

Think about an F1 race. In a pit stop, you could have one person change all four wheels on the car, one after the other. But it’s much more efficient to use parallel processing and have four people, one for each wheel, so the job finishes much faster.

It can take a significant amount of time and effort to curate the datasets you want to use in a data science project. If a job takes 100 hours with one worker, then in the ideal case, where the work divides evenly and coordination overhead is negligible, it will take about 50 hours with 2 workers, 33 hours with 3 workers, and so on. The time saved enables you to iterate faster and more often. Given that data scientists are expensive resources, time saved is money in the bank because they’re not waiting for pipelines to finish. Better ML models allow your organization to be more competitive, deliver beloved customer experiences, or optimize clinical outcomes.
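The arithmetic behind those numbers can be sketched in a few lines of Python. The first function reproduces the ideal case from the paragraph above. The second is an added refinement based on Amdahl's law, which is not part of the original discussion: it assumes some fraction of the job cannot be split, and `parallel_fraction` is a hypothetical parameter you would have to estimate for your own workload.

```python
def ideal_hours(serial_hours, workers):
    """Ideal case: the work divides evenly and there is no overhead."""
    return serial_hours / workers


def amdahl_hours(serial_hours, workers, parallel_fraction):
    """Amdahl's law: only `parallel_fraction` of the job benefits from workers."""
    sequential_part = serial_hours * (1 - parallel_fraction)
    parallel_part = serial_hours * parallel_fraction / workers
    return sequential_part + parallel_part


for workers in (1, 2, 3, 4):
    print(workers, round(ideal_hours(100, workers)))

# With only half of the job parallelizable, 4 workers take 62.5 hours, not 25.
print(amdahl_hours(100, 4, 0.5))
```

In practice the real figure usually lands somewhere between the two estimates, which is why measuring a pipeline at a few different worker counts is more reliable than extrapolating.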

Doesn’t Kubernetes automatically support parallelization?

The short answer is no! Kubernetes is the most popular container orchestration tool and offers many features such as autoscaling, but autoscaling is not the same as parallelization. Autoscaling allows Kubernetes to spin up multiple copies of the user’s code, but it has no mechanism to split up the data processing.

Jobs that have no dependency on each other can be executed concurrently. This means multiple jobs can be executed simultaneously, with each job assigned to a worker. Autoscaling in Kubernetes enables concurrency: it can scale the number of pods or workers that are processing concurrent jobs. Concurrent processing is similar to real-life multitasking. Jobs don’t need to start or finish at the same time; they just overlap with each other.

Let’s examine concurrency from the worker angle. Kubernetes can spin up workers to manage concurrent pods or jobs, but each worker handles only one whole job at a time. With parallel processing, each worker can work on a portion of the same job, which is what makes it possible to scale a single large job across many workers.
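A small back-of-the-envelope model in Python shows why the distinction matters. It is a sketch under simplifying assumptions: job durations are known in advance, whole jobs are assigned greedily (longest first) to whichever worker frees up earliest, and splitting a job across workers is perfectly even with no overhead. The function names and the job durations are hypothetical.

```python
def concurrent_hours(job_hours, workers):
    """Concurrency only: each job runs whole on a single worker."""
    finish_times = [0.0] * workers
    # Greedy assignment: longest job first, to the worker that is free earliest.
    for hours in sorted(job_hours, reverse=True):
        idx = finish_times.index(min(finish_times))
        finish_times[idx] += hours
    return max(finish_times)


def parallel_hours(job_hours, workers):
    """Parallelism: every job is split evenly across all workers (ideal case)."""
    return sum(job_hours) / workers


jobs = [90, 10, 20]  # hours per job on a single worker
print(concurrent_hours(jobs, 3))  # 90.0: bounded by the longest single job
print(parallel_hours(jobs, 3))    # 40.0: the 90-hour job is shared too
```

With concurrency alone, adding workers never brings the total below the duration of the longest job. Splitting the job itself is what removes that floor.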

How Pachyderm delivers scalability

Normally, you need to change your code to enable parallel processing because most code is written to run serially on a single thread. Parallel processing typically requires hard-coding how the data is chunked, processed, and reassembled. Pachyderm is a data pipelining and ML operations solution that natively supports parallel and concurrent processing.

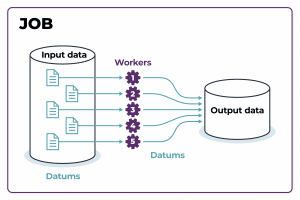

As the image illustrates, Pachyderm shards, or chunks up, the data into datums. Because a datum is a single unit of work, it can be processed in isolation. A datum is user defined and can be a single database record, an image, or one or more files. This has a powerful scalability benefit because datums break up a job. When any datum can be processed in parallel with the others, the work can be allocated to any available worker.

In parallel processing, a job is distributed amongst multiple workers
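The datum idea described above can be illustrated with a short, purely conceptual Python sketch. It does not use Pachyderm's API or reflect its implementation. The grouping rule (files sharing the same leading path components form one datum), the function names, and the file paths are all assumptions made for illustration.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from pathlib import PurePosixPath


def group_into_datums(paths, depth=1):
    """Group relative file paths into datums by their first `depth` components."""
    datums = defaultdict(list)
    for path in paths:
        parts = PurePosixPath(path).parts
        datums["/".join(parts[:depth])].append(path)
    return dict(datums)


def process_datum(item):
    """Stand-in for user code: count the files in one datum."""
    key, files = item
    return key, len(files)


def run(paths, workers=3, depth=1):
    """Hand each datum to any available worker and collect the results."""
    datums = group_into_datums(paths, depth)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(process_datum, datums.items()))


paths = [
    "patient-a/scan1.png",
    "patient-a/scan2.png",
    "patient-b/scan1.png",
    "patient-c/scan1.png",
]
print(run(paths))           # one datum per patient directory
print(run(paths, depth=2))  # one datum per file
```

The point of the sketch is that the user decides what one unit of work looks like, and the processing code itself only ever sees a single datum. It never needs to know how many workers exist.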

Pachyderm leverages the underlying Kubernetes layer to orchestrate your jobs concurrently while processing the data within each job in parallel. As a result, users get the scale of both parallel and concurrent processing without having to add complex logic to their codebase.

Conclusion

Parallel processing isn’t required for every ML workload. You won’t see much of a performance gain with smaller datasets and simple transformations. However, if you’re doing intense data processing or running complex machine learning workflows, parallelism offers powerful scalability. With the ever-growing size of datasets, and the desire to use as much data as possible for training, parallel processing has become a must-have for today’s organizations. Pachyderm natively supports parallel and concurrent processing, reducing the time required to run pipelines and iterate on ML models, so you can identify and optimize your champion model faster.