The term “big data,” once used to describe data of large volume, variety, and velocity, is no longer in vogue because everyone has a “big data” problem. Today we just refer to it as data.

Enterprise companies are built and run on a foundation of collected and self-generated data that is siloed, duplicated, and fragmented. For data teams looking to build analytics and machine learning applications leveraging this data, implementing a working machine learning model is often less daunting than the complexity of managing, cleaning and accessing the data necessary for their projects.

In many cases, the challenges built into working with enterprise data are related to the fragility of data: once it’s been removed from its context, duplicated, moved, or revised, valuable datasets can become buggy and unreliable.

There are many paths to resilient data operations. At Pachyderm, we’re focused on building an automated data pipeline layer that provides immutable versioning and lineage for critical production data. Access to the full transformation history of the data and code used in your data pipelines for ETL, analytics, or machine learning helps eliminate the barriers to data access that can lead big data projects to fail. This article describes some industries where terabytes of data are being generated every second, how that data can be used, and some of the barriers that can jeopardize the success of data operations teams.

Autonomous Vehicles Combining Sensor, Streaming and Computer Vision Data

Anyone with a long commute has daydreamed about a car that could get them to work on autopilot. As the technology and hardware for self-driving vehicles have matured, autonomous models are being pursued by automakers around the world. This is a highly technical and precise challenge, requiring accurate and high-quality data processing in order to safely drive cars on real roads shared by human- and machine-driven vehicles.

What Data Does a Self-Driving Car Need?

Human drivers process visual and auditory input and draw on memory to make decisions. To perform at the same level as a human operator on the roads, autonomous vehicles must process a staggering amount of unstructured data. Safety and accuracy are critical when operating a vehicle at highway speeds.

Data collected by autonomous vehicles includes:

Radar and LiDAR generate a three-dimensional map of the car’s immediate surroundings, helping drivers avoid collisions in poor weather conditions or heavy traffic.

Camera and Video devices on a vehicle are used for navigation, driver assistance, and collision detection. Any photographer can tell you video data adds up fast – for autonomous vehicles, cameras can generate 500-3500 Mbit/s of images and video.

Ultrasonic sensors and transmitters can be used to aid in automated parking functions and object detection, and are also being explored for vehicle-to-vehicle communication that can improve road safety for vehicles and pedestrians.

GPS: With so many challenges to successfully navigating the roads, accurate data is precious. Machine learning and massive datasets drive accurate mapping and navigation, with companies like Toyota’s Woven Planet building maps from satellite and aerial images.

All of this is on top of the regular telemetry and diagnostic data processed by modern cars. A single car can generate 25GB in just one hour of driving.
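
As a rough back-of-the-envelope check on the camera figures quoted above, the sketch below converts a sustained bitrate into hourly volume. It assumes continuous capture at the quoted rate and decimal units (1 GB = 10^9 bytes). The function name is illustrative, and compression, sampling, or on-vehicle filtering would change what is actually stored or transmitted.

```python
def mbit_per_s_to_gb_per_hour(mbit_per_s: float) -> float:
    """Convert a sustained bitrate in Mbit/s to decimal gigabytes per hour."""
    bits_per_hour = mbit_per_s * 1_000_000 * 3600  # bits produced in one hour
    bytes_per_hour = bits_per_hour / 8             # 8 bits per byte
    return bytes_per_hour / 1_000_000_000          # bytes -> GB (10^9 bytes)


# The 500-3500 Mbit/s range quoted for cameras, treated as raw output.
low = mbit_per_s_to_gb_per_hour(500)
high = mbit_per_s_to_gb_per_hour(3500)
print(f"{low:.0f} to {high:.0f} GB per hour of raw camera output")
```

Even at the low end of that range, raw camera output alone reaches hundreds of gigabytes per hour under these assumptions, which is why test fleets need to be deliberate about what they keep and reprocess.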

Where Does that Data Go?

Test fleets for autonomous vehicles are collecting this data for product and safety improvement and regulatory approval. In order to test and develop an autonomous vehicle and bring it to market, real world testing has to be accurate. With the market heating up, the teams developing these technologies need fast, stable data processing infrastructure to power the machine learning applications that make these vehicles possible.

Once transmitted back to their servers, vehicle data must be standardized and cleaned before being sent to a model, meaning that data transits multiple DAGs before it is ready to be used to retrain the latest version of the object detection model.
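
To make the idea of a DAG concrete, here is a minimal sketch of one such graph using Python’s standard-library `graphlib` module. The step names are hypothetical and stand in for whatever a real team would run. The point is only that each step declares what it depends on, and a valid run order falls out of those declarations.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps. Each key lists the steps it depends on.
dag = {
    "standardize": {"ingest"},
    "clean": {"standardize"},
    "label": {"clean"},
    "retrain_object_detection": {"clean", "label"},
}


def run_order(graph):
    """Return an order in which every step runs after its dependencies."""
    return list(TopologicalSorter(graph).static_order())


order = run_order(dag)
print(order)
```

Because retraining depends on both cleaned and labeled data, it can only be scheduled last, and adding a new upstream step changes the order without touching the downstream code.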

With so many challenges to successfully navigating the roads, accuracy is mission-critical. Machine learning and massive datasets are complex. Automated version control that helps these teams balance and minimize processing workloads makes it easier to process and deliver the right data at the right time, and to understand the full lineage of the data that influenced their production models. That’s why powerful diff-based processing and automatic version control are must-haves in this growing sector: they provide a more complete understanding of how data is being transformed, resulting in a reproducible foundation for continued innovation.
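
One common way to approximate diff-based processing is to fingerprint each input and reprocess only what is new or changed since the last run. The sketch below illustrates that general idea with content hashes. It is not a description of how Pachyderm implements it, and the file names and helper functions are hypothetical.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Content hash used to detect whether a file has changed."""
    return hashlib.sha256(data).hexdigest()


def changed_files(previous_index, current_files):
    """Return names that are new or changed, plus the updated hash index."""
    new_index = {name: fingerprint(data) for name, data in current_files.items()}
    to_process = sorted(
        name for name, digest in new_index.items()
        if previous_index.get(name) != digest
    )
    return to_process, new_index


# First run: nothing has been seen before, so everything is processed.
files_v1 = {"drive_001.bin": b"frame-a", "drive_002.bin": b"frame-b"}
first_run, index = changed_files({}, files_v1)

# Second run: one file changed and one was added; the untouched file is skipped.
files_v2 = {
    "drive_001.bin": b"frame-a",
    "drive_002.bin": b"frame-b2",
    "drive_003.bin": b"frame-c",
}
second_run, index = changed_files(index, files_v2)

print(first_run)
print(second_run)
```

Keeping each run’s hash index alongside its outputs also gives a simple record of exactly which inputs a given result was built from.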

Building Better Operations and Outcomes for Healthcare

In healthcare, life sciences and bioinformatics, there is a long history of combining rich data from many sources to assist individual patients, understand patient populations, and develop new treatment options. Healthcare is also a sector with legacy technologies running critical functions, and institutions struggle to update legacy data infrastructure in a way that is effective, without compromising patient care.

Moving the Right Data at the Right Time in Healthcare

Within hospitals and similar healthcare institutions, maintaining patient records while complying with state and federal privacy regulations can mean many things: some systems rely on paper and on-premises software, while others use software like Epic to help manage patient records, scheduling, and communication.

These institutions face a new challenge: there is a regulatory need to modernize data infrastructure in healthcare, as legislation requires interoperability for patient record data across organizations. Those organizations use highly specialized software to track care reports, imaging data, prescription history, specialized medication protocols like insulin dosing, and more. As the healthcare software landscape grows, the need to access and blend data from many sources will only increase.

With interoperability, healthcare organizations can also look at this data in the aggregate: software and technology are being used to improve the effectiveness of medical imaging with computer vision for detecting cancers, and providers are analyzing trends in patient care data in order to build more effective treatment plans for the future.

When working with massive quantities of sensitive data, security and information control are key – this is one reason why healthcare organizations are reluctant to migrate their data operations to the cloud. Instead, they want complete autonomy of their data running in their data centers or virtual private clouds.

In addition, regulatory control is forcing organizations to keep complete audit trails of what data was accessed, when, and by whom, and to be able to show how a particular ML model was created. Tooling that creates a full version history back to the provenance of your data means that results can be audited and reproduced without unnecessary duplication.

Lineage Matters for Machine Learning in Healthcare

Health and medicine are professions rooted in science. As sciences, these practices rest on making decisions from data and evidence. When working with massive datasets, organizations need a strong reason to trust the data if it is going to be used in making decisions about treatment and facility management.

Building Trustworthy Datasets for Healthcare and Life Sciences

There are many opportunities to improve the quality and integrity of data used in healthcare systems in ways that will reduce costs and improve patient outcomes:

- Breaking Down Data Silos. Siloed data is a top-ranking obstacle for most enterprise data operations teams. In healthcare, combining data across silos helps to diversify the populations sampled and builds a stronger basis for the value of predictive analytics and facility monitoring applications.

- Improving Data Delivery & Experiences. Data operations is often a balancing act when it comes to speed and accuracy, but there are infrastructure gains that can be made within systems to improve speed without compromising accuracy, like incrementality and parallel processing.

- Tracing the Lineage of Your Data. Having a data operations platform that can track the chain of custody of your data as it moves through the systems used in your institution makes your data auditable, and your reporting and analytics repeatable.
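
To illustrate the lineage point in the list above, here is a minimal sketch of a chain of custody. It assumes each dataset or model version records the code that produced it and the versions it was built from. The class, field names, and example versions are all hypothetical, not a real platform’s data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Version:
    name: str
    code: str                 # identifier of the code that produced this version
    parents: tuple = ()       # names of the versions it was built from


def trace_lineage(versions, target):
    """Walk back from a target version to every ancestor, listing each once."""
    seen = []
    stack = [target]
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.append(name)
        stack.extend(versions[name].parents)
    return seen


# Hypothetical history for one model.
history = {
    v.name: v
    for v in [
        Version("raw_records", code="export_job"),
        Version("raw_imaging", code="scanner_upload"),
        Version("deidentified", code="deidentify.py", parents=("raw_records",)),
        Version("features", code="features.py", parents=("deidentified", "raw_imaging")),
        Version("model_v1", code="train.py", parents=("features",)),
    ]
}

lineage = trace_lineage(history, "model_v1")
print(lineage)
```

With a record like this, an auditor can start from a model and list every dataset and piece of code that contributed to it, without copying any of the underlying data.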

When investing the time and budget required to process massive amounts of healthcare data, trust is paramount. Reducing silos, improving speed and maintaining confidence with a data-versioned pipeline tool like Pachyderm helps teams build great machine learning applications in healthcare.

Delivering the Right Data at the Right Time for Finance

Financial data has a steep value curve: in stock markets, currency, and valuations, the older your data is, the less value it brings you. The ability to ingest and process streaming data at lightning speed is essential for investors, banks, and finance managers. These data workloads can also be broad in scope, requiring hundreds of pipelines to access and deliver critical financial data to analytics and reporting dashboards.

Speed of Delivery is Critical for Finance Data Pipelines

Monitoring markets and facilitating customer requests means financial firms are ingesting and sorting streaming data on a consistent basis. When one stock market closes, the market in another country opens for trading. Following the transactions and values for hundreds of stocks throughout the day takes a lot of compute – and it needs to be done fast. These data sources are put to work for various purposes when it comes to managing investments, tracking stocks, and monitoring accounts.

- Optimizing Portfolios and Algorithmic Trading: Predictive analytics and machine learning can be used to facilitate trades and portfolio investment spreads by managing market trend data combined with a set of trading parameters for a given investment style, industry focus, and any other data an investor wants to measure and control with automation and machine learning.

- Fraud Detection: Financial systems are sensitive to fraud, and it is a frequent threat thanks to the ease of automation, the prevalence of hacking, and the risk of security breaches. Comparing transactions and behavior to detect anomalies is a frequent application of machine learning in finance, and helps protect investors and management firms by alerting security teams to suspicious activity by processing high volumes of information.

- Risk Analysis: Machine learning is being applied to help financial institutions navigate regulatory changes. Compliance tasks can be automated with machine learning, including stress testing to ensure banks can withstand market fluctuations and checks that fair lending practices are followed. By introducing data lineage to risk analysis ML workflows, these compliance functions can be automated while keeping data transformations fully auditable.
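
As a small illustration of the anomaly detection mentioned under fraud detection above, the sketch below flags transactions that sit far from an account’s typical amount. It uses a modified z-score based on the median and median absolute deviation, which is one simple statistical baseline rather than a production fraud model. The threshold of 3.5 and the sample amounts are assumptions for the example.

```python
from statistics import median


def flag_anomalies(amounts, threshold=3.5):
    """Return indexes of amounts whose modified z-score exceeds the threshold."""
    center = median(amounts)
    # Median absolute deviation: a spread estimate that outliers barely affect.
    mad = median(abs(a - center) for a in amounts)
    if mad == 0:
        return []  # no spread to measure against
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - center) / mad > threshold
    ]


# Hypothetical card transactions for one account, in dollars.
transactions = [42.0, 38.5, 45.2, 40.1, 39.9, 41.3, 43.7, 2500.0, 44.0, 37.8]
flagged = flag_anomalies(transactions)
print(flagged)
```

A median-based score is used here because a single extreme value inflates the mean and standard deviation enough to hide itself in small samples.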

The streaming data used for these functions doesn’t all need to be saved, but that data does need to be transformed fast. Cleaning and standardizing incoming data, and capturing the right historical data can drive better insight tools for analysts and investors. Financial services teams have two critical needs due to the unique nature of the business and its regulatory foundation:

- Real-time data transformation. Standardizing structured and unstructured data as it comes in from outside sources is one domain where time efficiency can drive big rewards. Being able to make data-driven transformation pipelines to add new information to dashboards and internal tools at a rapid pace drives better decision-making.

- Automated lineage. Tracking the full history of data transformation back to its origin is critical for building reproducible and fully auditable data pipelines in fintech. Without lineage, your data lacks the historical context needed to verify its accuracy.
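
The standardization step described above can be sketched as a function that maps records from differently shaped feeds onto one schema. The two input formats, field names, and output schema below are hypothetical and chosen only to show the pattern.

```python
from datetime import datetime, timezone


def first_present(record, *keys):
    """Return the value of the first key that exists in the record."""
    for key in keys:
        if key in record:
            return record[key]
    raise KeyError(f"none of {keys} found in record")


def standardize(record):
    """Normalize one quote record to a common symbol/price/time_utc schema."""
    symbol = str(first_present(record, "symbol", "ticker")).strip().upper()
    price = float(str(first_present(record, "price", "last")).replace(",", ""))
    ts = first_present(record, "ts", "timestamp")
    if isinstance(ts, (int, float)):
        when = datetime.fromtimestamp(ts, tz=timezone.utc)  # epoch seconds
    else:
        # ISO 8601 string that carries its own UTC offset
        when = datetime.fromisoformat(ts).astimezone(timezone.utc)
    return {"symbol": symbol, "price": price, "time_utc": when.isoformat()}


# Two hypothetical feeds describing the same quote in different shapes.
feed_a = {"ticker": " abc ", "last": "1,234.50", "timestamp": "2024-01-02T15:30:00+00:00"}
feed_b = {"symbol": "ABC", "price": 1234.5, "ts": 1704209400}

clean_a = standardize(feed_a)
clean_b = standardize(feed_b)
print(clean_a)
```

Once both feeds produce identical records, downstream dashboards and models only need to understand one format, and a versioned record of this function documents exactly how each value was derived.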

Analysts, forecasters, reporting teams, and regulators also need to understand how money has been managed and how decisions about that money were made. Having a complete history of a financial data pipeline makes a finance organization more trustworthy, more effective, and, ideally, more successful.

Scaling Positive Customer Experiences

Customer service and great brand experiences are hard to scale, and the mistakes brought on by misfiring automations or poorly trained teams can go viral and hurt your brand, fast. Consumer-focused brands are finding a growing number of ways to improve customer experience with big data analytics and machine learning.

How Customer-Focused Brands Use Big Data

- Manufacturing and Quality Control: Building great customer experiences starts with building a high-quality product. Consumer brands can use computer vision, synthetic data, and augmented datasets to identify quality defects and cosmetic issues earlier in the manufacturing process, with better accuracy.

- Streamlining Communications: The post-purchase process is data-driven, too. Communication about returns, product issues, and ongoing support is being automated with conversational AI based on natural language processing, which helps companies scale their customer communication and automate repetitive tasks like returns and exchanges. NLP is an especially complex field: customers use slang, regional language variations, misspellings, and unusual sentence structures, so conversational language models have to be flexible and accurate.

As long as these functions work for your customers – great! But there can be times when recommendations and responses aren’t appropriate for the situation. In that case, datasets and models need to be reassessed and adjusted by data scientists and engineers. In these situations, a comprehensive version history for your data helps machine learning teams pinpoint issues, build new experiments, and publish a retrained model in less time, with less overhead.

Modern Enterprise Lives and Dies by Data

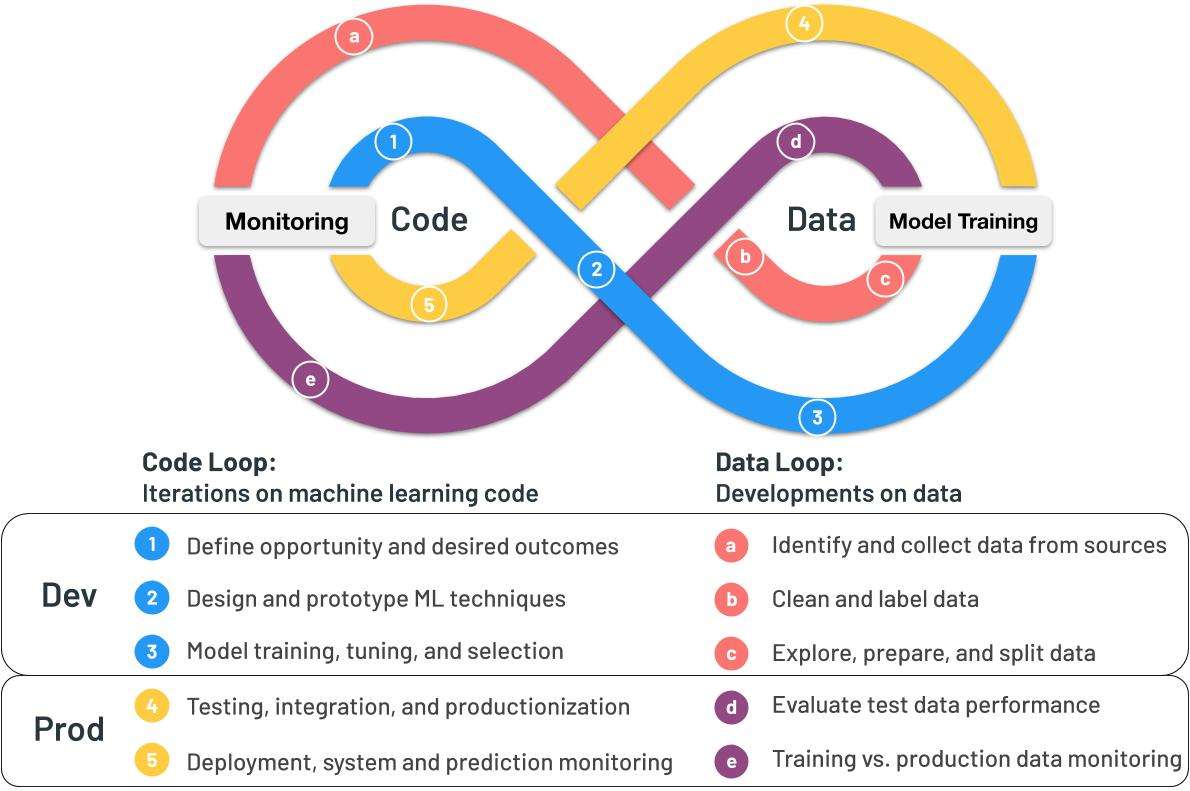

New machine learning use cases are emerging for industries at a rapid pace, but early implementation of machine learning can include hard lessons about the operational challenges of the code-data loop, the confusing outcomes of problems like data drift, and the troubles teams face when publishing models to production.

With engineering-focused tools built for complex data transformation, Pachyderm supports resilient MLOps with automated version control and data-driven pipelines that can transform any data with any code.

Ready to tackle some big data challenges? Contact the Pachyderm team to book a custom demo.