Data science is a complex, iterative process that relies on large datasets to produce accurate predictions. Even if you are taking a data-centric AI approach, your “right-size” dataset still needs a significant amount of data, sometimes terabytes, to train your model properly. Between iterating on your dataset and training a model to prepare it for production, the cost of re-running these processes on your entire dataset every time can add up, unless you have the right tools on your side. That’s where parallel processing comes into play.

Experimentation and iteration are at the heart of building and testing machine learning applications. Between tweaking ML models, supplementing datasets, labeling data, and any other actions you have to take, you will constantly be making small changes to your data pipelines. All of these actions require a large amount of compute and storage.

By using parallelization, you can gather and process information within datasets and entire pipelines more quickly, and save money by using fewer resources. More importantly, you can use all your data to train your model rather than a subset.

In this article, we’re going to take a closer look at parallelization for machine learning workflows. Specifically, we’ll go over:

- Why Parallelize Your Data Processing?

- Parallelization in Practice

- Digging Deeper: How Datum-Aware Architecture Impacts MLOps

Why Parallelize Your Data Processing?

Modern enterprise companies are creating and storing new data at staggering rates – gigabytes per day and up. Many of these files can provide value to your company, but only if your teams are able to deliver actionable reporting and predictions while the data is fresh and relevant.

Parallelization is a way to shorten one of the most time-consuming stages of the data pipeline workflow: the raw computation needed to do the job at hand.

There was a time when parallelization represented a new paradigm in computing. Before file sizes reached their current scale, when the quantities of data to be handled at a given time were more manageable, data was processed sequentially: processing resources could work together, but only on one job at a time.

If the bees in this photo were sequential workers tasked with pollinating a meadow, they would need to pollinate this flower together, then fly to the next one, moving from flower to flower until the whole meadow was done.

2 workers processing 1 job

If you think about the scale of a garden, that could soon become a problem: the jobs themselves are easy, but you cannot speed up the job by recruiting more bees to do the work if they’re limited to only working on one flower at a time – you’ll start to see diminishing returns pretty fast.

Parallelizing the work, on the other hand, allows the workers to distribute the work among themselves. These bees are able to work on multiple jobs at the same time:

2 workers processing 2 jobs in parallel

When the work can be divided among the available workers, each job gets the right number of workers assigned to it, and all of the jobs are processed at the same time.

Within a data pipeline, the principles stay the same. Sequential jobs that append tables, standardize files, and transform data can be sped up by adding processing power, but only to the extent that the active job finishes sooner; the remaining pipeline jobs are still waiting in line. This type of processing becomes cumbersome when, like the meadow of flowers, you have hundreds or thousands of small jobs moving in single file.

When your pipeline is able to process jobs in parallel, each time the pipeline is activated there is a planning phase that analyzes the requested tasks and assigns them to workers that can process them concurrently. If larger datasets are introduced to your pipeline, processing time can stay stable as long as additional computing resources are assigned to the workload.
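The contrast between single-file and concurrent processing can be sketched with Python's standard library. This is a minimal illustration, not how any particular pipeline tool schedules work: `standardize`, `run_sequential`, and `run_parallel` are hypothetical names, and the `time.sleep` call stands in for the I/O a real job would do.

```python
from concurrent.futures import ThreadPoolExecutor
import time


def standardize(record: str) -> str:
    """Hypothetical small job: simulate I/O latency, then normalize a record."""
    time.sleep(0.01)  # stand-in for reading or writing a file
    return record.strip().lower()


def run_sequential(records):
    # One job at a time: total time grows with the number of jobs.
    return [standardize(r) for r in records]


def run_parallel(records, workers=4):
    # The pool hands jobs to idle workers; map() returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(standardize, records))


records = ["  Alpha ", "BETA", " Gamma  ", "delta"]
print(run_parallel(records))  # ['alpha', 'beta', 'gamma', 'delta']
```

Both functions return the same results; only the waiting time differs. Threads suit I/O-bound jobs like this one, while CPU-bound transformations would typically use `ProcessPoolExecutor` or separate machines instead.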

The other side of parallelization is the granularity with which jobs can be defined and assigned: at small scales, manually assigning parallel workers is time-consuming but possible. Once you’re working with large or consistently growing datasets, however, this allocation needs to be automated to stay sustainable. Similarly, the finer the detail you have on jobs and their allocation, the more control you have over distributing the workload evenly across your resources.
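To see why granularity matters for even distribution, consider a small sketch of automated job assignment. It uses a simple greedy heuristic (largest job first, always to the least-loaded worker), chosen here for illustration only; `assign_jobs` and `loads` are hypothetical helpers, and the job sizes are made-up units of work.

```python
import heapq


def assign_jobs(job_sizes, n_workers):
    """Greedy balancing: give the largest remaining job to the least-loaded worker."""
    # Heap entries are (current_load, worker_index).
    heap = [(0, w) for w in range(n_workers)]
    heapq.heapify(heap)
    assignment = {w: [] for w in range(n_workers)}
    by_size = sorted(job_sizes.items(), key=lambda kv: kv[1], reverse=True)
    for job, size in by_size:
        load, worker = heapq.heappop(heap)
        assignment[worker].append(job)
        heapq.heappush(heap, (load + size, worker))
    return assignment


def loads(assignment, job_sizes):
    """Total work assigned to each worker."""
    return {w: sum(job_sizes[j] for j in jobs) for w, jobs in assignment.items()}


coarse = {"whole-dataset": 30}                 # one indivisible job
fine = {f"part-{i}": 3 for i in range(10)}     # same work, ten small jobs

print(loads(assign_jobs(coarse, 2), coarse))   # {0: 30, 1: 0}
print(loads(assign_jobs(fine, 2), fine))       # {0: 15, 1: 15}
```

With a single coarse job, the second worker sits idle no matter how the job is assigned. Splitting the same work into finer pieces lets the scheduler balance the load.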

Parallelization in Practice

The theory is simple: many hands make light work. Hadoop and Kubernetes were both engineered to distribute workloads across networks of machines, for more efficient use of computing resources and shorter data processing times.

This makes your ML workflow more efficient: Pachyderm’s customers see a major reduction in the time required to process and train on new data thanks to parallelization. Check out how data-driven pipeline automation and parallel processing helped RTL Nederland!

A Tale of Two Data Approaches

Traditional data pipeline tools focus heavily on connecting pipelines to models, and are built from the perspective of maintaining code. This means data is treated as an afterthought: jobs are processed sequentially on whole datasets, with inadequate data versioning.

When you take the whole-dataset approach, jobs can be parallelized on different sets of data, or the set can be divided into smaller parts – but this can still be a manual process for many tools, and the diminishing returns problem remains. The results are slow processing, long waits for experiment and project results, and a backlog a mile long when the processing rate can’t keep up.

What’s the Alternative to Serial Processing Pipelines?

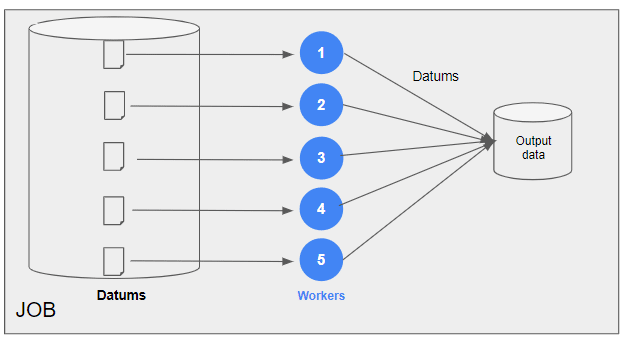

The alternative is a straightforward idea that still challenges many of the pipeline and data management tools on the market today: instead of processing datasets, process datums. ‘Datum’ refers to a single file within a dataset, and the code required to transform it.
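One way to picture the shift from datasets to datums is to treat a dataset as a list of file paths and choose how finely to split it. The sketch below is a hypothetical illustration, not Pachyderm's API: `partition_into_datums` and its `depth` parameter are invented for this example, and the file paths are made up.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def partition_into_datums(paths, depth):
    """Group relative file paths into datums by their first `depth` components.

    depth=0 treats the whole dataset as one datum; a larger depth
    produces finer-grained datums.
    """
    datums = defaultdict(list)
    for p in paths:
        parts = PurePosixPath(p).parts
        key = "/".join(parts[:depth]) if depth else "<whole dataset>"
        datums[key].append(p)
    return dict(datums)


paths = ["cats/001.png", "cats/002.png", "dogs/001.png"]

print(len(partition_into_datums(paths, 0)))  # 1  (the whole dataset)
print(len(partition_into_datums(paths, 1)))  # 2  (one datum per folder)
print(len(partition_into_datums(paths, 2)))  # 3  (one datum per file)
```

Each datum is a unit of work that can be handed to a separate worker, so the choice of granularity directly sets how much parallelism is available.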

Parallelization that can plan and control workloads at the datum level gives you greater control over your datasets. The following is from a recent blog post by our co-founder Joe Doliner about why the datum is one of Pachyderm’s foundational concepts:

“If you’re training an image classifier, then a single image is your datum. That’s the minimum amount of data you can run the training process on. If you’re training on videos, your datum is a video clip. No matter what you’re doing, some partitioning of the data will constitute a datum. Sometimes you need all of the data to do something useful, this is frequently the case if your data represents a genome. In that case, the whole thing is one big datum.

Once you understand what constitutes a datum in your workflow, what can you do with it? The first thing it gives you is a way to understand how your data hooks up to computation. You can ask questions like:

- How many datums do I have?

- How long does a datum take to run?

- How many new datums do we get every day?

This definition gives you a rigorous way to understand the relationship between your data and what you want to do with it. The magic of thinking about data this way is that it’s rigorous enough that the underlying system can execute it and, depending on how sophisticated it is, automatically optimize it.”

With the datum at the core of your approach to data transformation, scaling and optimizing your job speed and delivery times becomes a matter of definition: how fine-grained does incremental processing need to be for a given dataset? Once that is defined, you’re all set for your processing resources to scale up and down to match what’s needed.
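Incrementality at the datum level can be sketched as a cache keyed by content: a datum that has not changed since the last run does not need to be processed again. This is a simplified illustration under stated assumptions, not a description of Pachyderm's internals. `process_incrementally` is a hypothetical helper, and the cache here is a plain in-memory dictionary.

```python
import hashlib


def process_incrementally(datums, transform, cache):
    """Apply `transform` to each datum, skipping content that was seen before.

    `datums` maps a name to its bytes; `cache` maps a content hash to a result.
    Returns (results by name, names that actually had to be processed).
    """
    results, processed = {}, []
    for name, content in datums.items():
        key = hashlib.sha256(content).hexdigest()
        if key not in cache:        # new or changed datum: do the work
            cache[key] = transform(content)
            processed.append(name)
        results[name] = cache[key]  # either way, read the result from the cache
    return results, processed


cache = {}
day1 = {"a.txt": b"hello", "b.txt": b"world"}
_, ran = process_incrementally(day1, bytes.upper, cache)
print(ran)  # ['a.txt', 'b.txt']

day2 = {**day1, "c.txt": b"new"}
results, ran = process_incrementally(day2, bytes.upper, cache)
print(ran)  # ['c.txt']
```

On the second run only the new datum is processed, so the cost of an iteration tracks the size of the change rather than the size of the dataset. A fuller version would also include the transformation code's version in the cache key, so that changing the code invalidates earlier results.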

While parallelization is not always necessary for smaller projects, it can be incredibly helpful when working with large datasets. Once you implement parallel processing in your data pipelines, you will be able to speed up iteration and reduce processing workloads.

Digging Deeper: How Datum-Aware Architecture Impacts MLOps

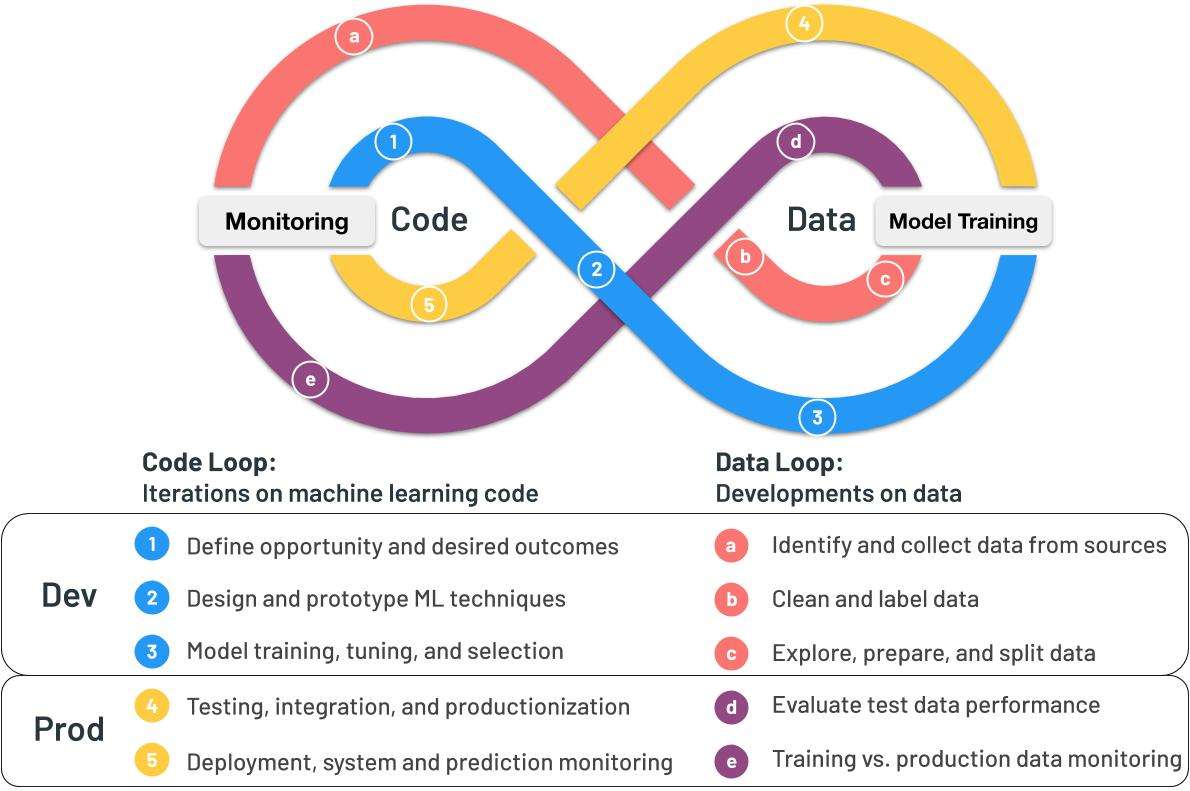

Looking back to before your data enters the pipeline, the datum-based foundation Pachyderm is built on enables teams to deeply understand their data operations at every stage of the machine learning lifecycle.

Building a strong culture of machine learning operations requires reproducibility, flexibility, and scalability. A strong data foundation is essential for building all three: without a rigorous approach to your data, it will be hard to win trust in your models.

3 Benefits of the Datum Approach for MLOps:

Reproducibility: Once the datum has been defined, Pachyderm’s file system is able to divide your dataset accordingly and assign each datum its own global ID. This ID anchors a full revision history of a datum’s movement through the data and code stages of the machine learning loop, allowing you to see how your data has been changed, diagnose your code, and track the effectiveness of every model, labeling, and data iteration.

Flexibility: Because Pachyderm is code- and data-agnostic and integrates with a host of complementary machine learning software, your teams can process any data with the tooling that’s best for the job. This flexibility allows for more collaboration with data teams, especially if they like to use tools like Jupyter Notebooks, Label Studio, or Seldon.

Scalability: With global identifiers and fine-grained visibility into changes to your datasets, Pachyderm lets teams scale their effectiveness by automating pipelines to run whenever new data is added, and scale their organizational impact by autoscaling when high-volume workloads come their way.

With the tools to build trust in the outcomes of your processes, integrate with tools that other teams are familiar and comfortable with, and deliver fast and accurate results, machine learning operations teams know they can turn to Pachyderm.

Ready to learn more about how Pachyderm can help improve your business operations? Get in touch with our engineers to learn all about our custom-tailored solutions today.