Running data operations for a business is complex even when everything goes smoothly. If your data pipeline management tools aren't flexible, reliable, or reproducible, it can feel like an impossible job, even for seasoned data scientists.

Pipeline tools that can handle anything you throw at them, with automated data versioning and lineage, let your team iterate and experiment without worrying about reproducibility, duplicated data, or sky-high cloud computing bills.

That's why we built Pachyderm: a data-centric pipeline management system with built-in lineage for reproducible, data-driven pipeline workflows. In this article, we take a closer look at how to get your files into Pachyderm's data-driven pipelines and how you can use them to improve your processes. We will briefly cover:

- Manual and Automated Data Pipeline Flows in Pachyderm

- What Pachyderm Data Pipelines Look Like in Action

Manual and Automated Data Pipeline Flows in Pachyderm

Pachyderm can ingest any type of file from local, on-premises, cloud, and streaming sources to help automate your business processes. But what does that mean in practice? We designed Pachyderm with a range of users in mind, from individual data scientists running straightforward experiments to engineers building foundational data infrastructure.

Because your code runs in containers, you can transform structured and unstructured data in whatever language works best for your team and project. Our ingestion options range from manual to fully automated, so you can choose the right fit for your data needs.
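As a small illustration of the container model described above: inside a pipeline, your code reads its input from `/pfs/<input name>` and writes results to `/pfs/out`, which Pachyderm commits to the pipeline's output repo. The sketch below is a hypothetical Python transform that assumes an input named `raw`; it counts newline characters in every file and writes one `.count` file per input. The function name and the `raw` input are illustrative only, and the directories are parameters so the logic can be tried outside a container.

```python
from pathlib import Path


def count_lines(input_dir: str = "/pfs/raw", output_dir: str = "/pfs/out") -> dict:
    """Write `<name>.count` under output_dir for every file under input_dir.

    Hypothetical transform: `/pfs/raw` assumes a pipeline input named "raw".
    Returns a mapping from relative input path to its newline count.
    """
    results = {}
    in_root = Path(input_dir)
    out_root = Path(output_dir)
    for path in sorted(in_root.rglob("*")):
        if not path.is_file():
            continue  # skip directories; only regular files are processed
        rel = path.relative_to(in_root)
        # Read raw bytes so the transform does not depend on the file format.
        n_lines = path.read_bytes().count(b"\n")
        results[rel.as_posix()] = n_lines
        # Mirror the input layout under the output directory.
        target = out_root / rel.parent / (rel.name + ".count")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(f"{n_lines}\n")
    return results
```

In a real pipeline, the container's entrypoint would simply call `count_lines()` with its defaults; swapping Python for R, Go, or a shell script changes nothing about the `/pfs` contract.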

Let's look at each option in more detail:

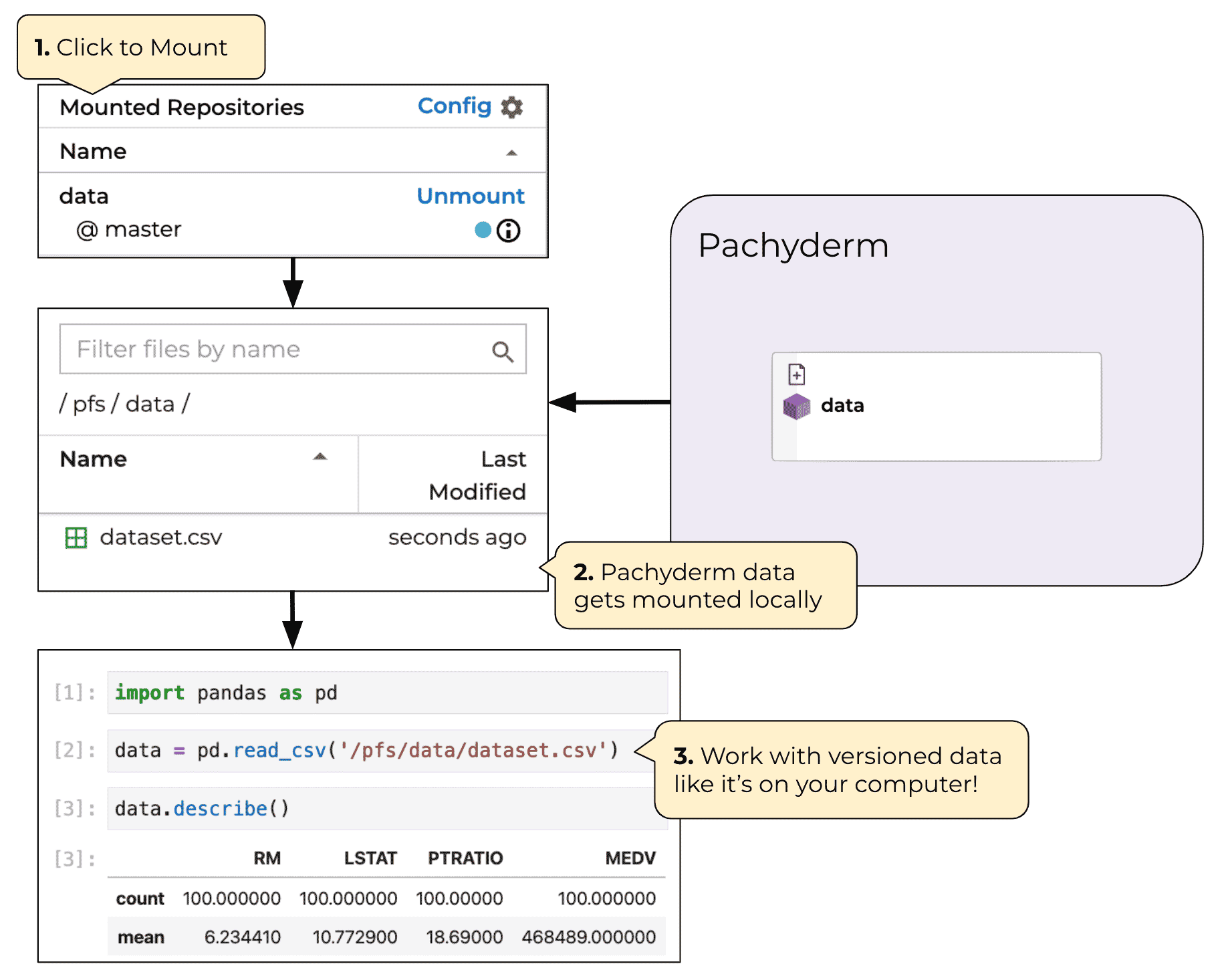

- Mount Repositories: Pachyderm mounts repositories as local filesystems through FUSE, which is useful when you want to pull data locally, review data pipeline results, or modify files directly. You can mount a repo in one of two modes: read-only, which lets you read the mounted files, or read-write, which also lets you modify them.

- pachctl: Pachyderm's command-line tool lets you put files into a repo directly from a local path, a URL, or an object store. You can load all of your data or only part of it, depending on what your pipelines need.

- Pachyderm Client: Pachyderm's pre-built Python, Go, and JavaScript SDKs let you interact with file storage through an API. If you need another language, you can build your own client against our protocol buffer API.

- S3 Gateway: The Pachyderm S3 Gateway exposes a repo through an S3-compatible API, so object storage tooling such as the MinIO client, the AWS S3 CLI, and boto3 can work with it. This is helpful when you want to retrieve data with, or expose data to, tools that already speak S3.
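To make the `pachctl` route a little more concrete: `pachctl put file` takes a destination of the form `repo@branch:/path` and a source passed with `-f`, which can be a local file or a URL, and `-r` uploads a directory recursively. The helper below is a minimal sketch that only builds the argument list and never runs it; `put_file_argv` is a hypothetical name, and the repo, branch, and URL in the usage line are placeholders.

```python
import shlex
from typing import List


def put_file_argv(repo: str, branch: str, dest_path: str, source: str,
                  recursive: bool = False) -> List[str]:
    """Build argv for `pachctl put file repo@branch:dest_path -f source`.

    Hypothetical helper: it does not execute anything.
    """
    if not dest_path.startswith("/"):
        dest_path = "/" + dest_path  # normalize to an absolute path inside the repo
    argv = ["pachctl", "put", "file", f"{repo}@{branch}:{dest_path}", "-f", source]
    if recursive:
        argv.append("-r")  # upload a whole directory tree
    return argv


# Placeholder repo, branch, and URL, for illustration only.
cmd = put_file_argv("images", "master", "liberty.png",
                    "https://example.com/data/liberty.png")
print(shlex.join(cmd))
```

On a machine where `pachctl` is installed and pointed at a cluster, the list could be passed to `subprocess.run(cmd, check=True)`; building it as a list rather than a string avoids shell-quoting surprises in paths.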

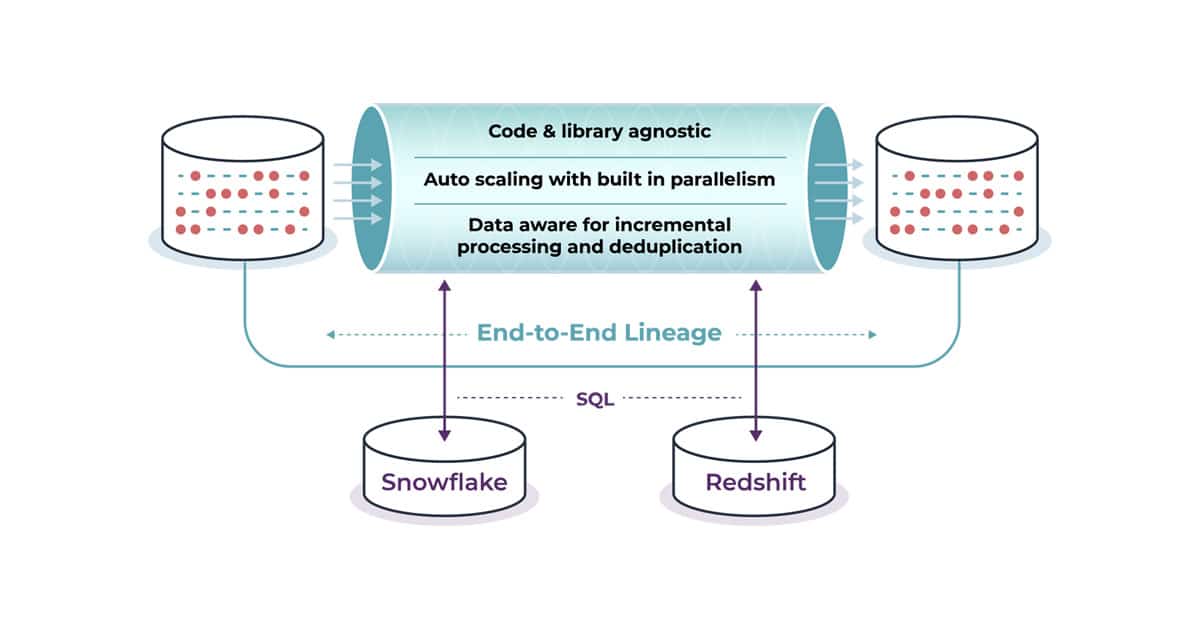

- SQL Ingest: SQL Ingest pulls the results of your SQL queries into Pachyderm and saves them as CSV or JSON files. This lets you connect to a structured data warehouse, retrieve data on a regular schedule, and then process it with Pachyderm.

- Spouts: Spouts are pipelines that ingest streaming data from an outside source, such as event notifications, database transaction logs, or message queues. They are ideal when new data arrives sporadically rather than on a schedule.

- Cron Pipelines: These pipelines run on a fixed time interval instead of every time new data is committed to their input. This makes them a good fit for periodic collection tasks such as scraping websites, making API calls, or retrieving files from locations accessible through S3 or FTP.
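Cron and spout pipelines are declared in a pipeline spec like any other pipeline. The sketch below builds both kinds of spec as Python dictionaries and serializes one to JSON, which you could save to a file and pass to `pachctl create pipeline -f`. It assumes the spec layout as we understand it: a `cron` input with a `name` and a `spec` interval, and a top-level `spout` field for the streaming case. The helper names, pipeline names, images, and commands are hypothetical placeholders.

```python
import json


def cron_pipeline_spec(name: str, image: str, cmd: list,
                       interval: str = "@every 1h") -> dict:
    """Hypothetical helper: spec for a pipeline triggered on a schedule."""
    return {
        "pipeline": {"name": name},
        "transform": {"image": image, "cmd": cmd},
        # Each tick of the cron input (every `interval`) triggers a new job.
        "input": {"cron": {"name": "tick", "spec": interval}},
    }


def spout_pipeline_spec(name: str, image: str, cmd: list) -> dict:
    """Hypothetical helper: spec for a long-running pipeline fed by an outside stream."""
    return {
        "pipeline": {"name": name},
        "spout": {},  # no `input`: the container itself pulls from the outside source
        "transform": {"image": image, "cmd": cmd},
    }


scraper = cron_pipeline_spec(
    "scrape-prices",              # hypothetical pipeline name
    "example.com/scraper:1.0",    # hypothetical container image
    ["python3", "/app/scrape.py"],
    interval="@every 6h",
)
print(json.dumps(scraper, indent=2))
```

Keeping the specs as plain data makes it easy to template them per environment, for example generating one scraper per target site from the same helper.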

With this variety of options, containerized code, and Pachyderm's format-agnostic approach to file ingest, our data-driven pipelines give data engineering teams the flexibility and power to perform complex data transformations. This means data science teams can iterate quickly, with tooling that won't get in the way.

What Pachyderm Data Pipelines Look Like in Action

The examples section of our website shows Pachyderm's data pipelines in action in many different settings. The examples show how to get started building pipelines with different data sources, including how to combine batch and streaming data into a single DAG.
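To make the idea of a single DAG that mixes batch and streaming data more concrete, here is one way a downstream pipeline spec could look, sketched in Python. It assumes a spout pipeline writing to an output repo named `events` (a spout's output repo takes the pipeline's name) and a batch repo named `customers`; both names, the helpers, the image, and the command are hypothetical. It uses a `cross` input, which pairs every datum of one input with every datum of the other; this is an illustrative choice, not necessarily how our published examples wire things up.

```python
import json


def pfs_input(repo: str, glob: str, branch: str = "master") -> dict:
    """A single PFS input; `glob` controls how the repo is split into datums."""
    return {"pfs": {"repo": repo, "branch": branch, "glob": glob}}


def enrich_pipeline_spec(stream_repo: str, batch_repo: str,
                         image: str, cmd: list) -> dict:
    """Hypothetical downstream pipeline combining streaming and batch data.

    With glob "/*" on the stream repo, each top-level file is its own datum;
    with glob "/" on the batch repo, the whole repo is a single datum. The
    `cross` therefore pairs each stream datum with the full batch dataset.
    """
    return {
        "pipeline": {"name": f"enrich-{stream_repo}"},
        "transform": {"image": image, "cmd": cmd},
        "input": {"cross": [pfs_input(stream_repo, "/*"),
                            pfs_input(batch_repo, "/")]},
    }


# "events": assumed output repo of a spout; "customers": assumed batch repo
# loaded with pachctl or SQL Ingest.
spec = enrich_pipeline_spec("events", "customers", "example.com/enrich:1.0",
                            ["python3", "/app/enrich.py"])
print(json.dumps(spec, indent=2))
```

Because the stream side uses a fine-grained glob, only new event files should create new datums, while the batch side is reused as a whole.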

- Connect Pachyderm to your Snowflake Data Warehouse to speed up pipeline development. Snowflake separates structured storage from the compute used for complex queries, which makes it well suited to building and using scalable ML models.

- You can also easily combine and transform data: read about SeerAI and how it delivers spatiotemporal data and analytics with Pachyderm.

- Pachyderm’s flexibility and data-first approach to pipelines also make it a great tool for edge computing AI applications, like the examples mentioned in our recent webinar with GTRI.

Want to see it live? Register for our October 19 webinar to walk through the basics of Pachyderm pipelines with Customer Support Engineer Brody Osterhur!

Leveraging Data Pipelines for Your Business

When it comes to using ML data pipelines to improve processes within your organization, it's important to know what you need and to understand what platforms like Pachyderm offer. That's why we wanted to explain how you can assemble data-driven pipeline flows in Pachyderm and what that means for your business.

If you're ready to learn more, reach out to our team to discuss your business needs. You can also request a demo for a technical deep dive into using data versioning for your business today.