We’re pleased to announce the release of Pachyderm 1.11! This latest release of our powerful AI/ML development platform delivers strong new features and major improvements in performance:

- A new writable mount command for easy notebook development

- Higher job throughput and better utilization of Kubernetes resources

- Support for OIDC Auth

- 60+ bug fixes and minor improvements (full release notes)

Writable Data Mount for Improved Development Workflow

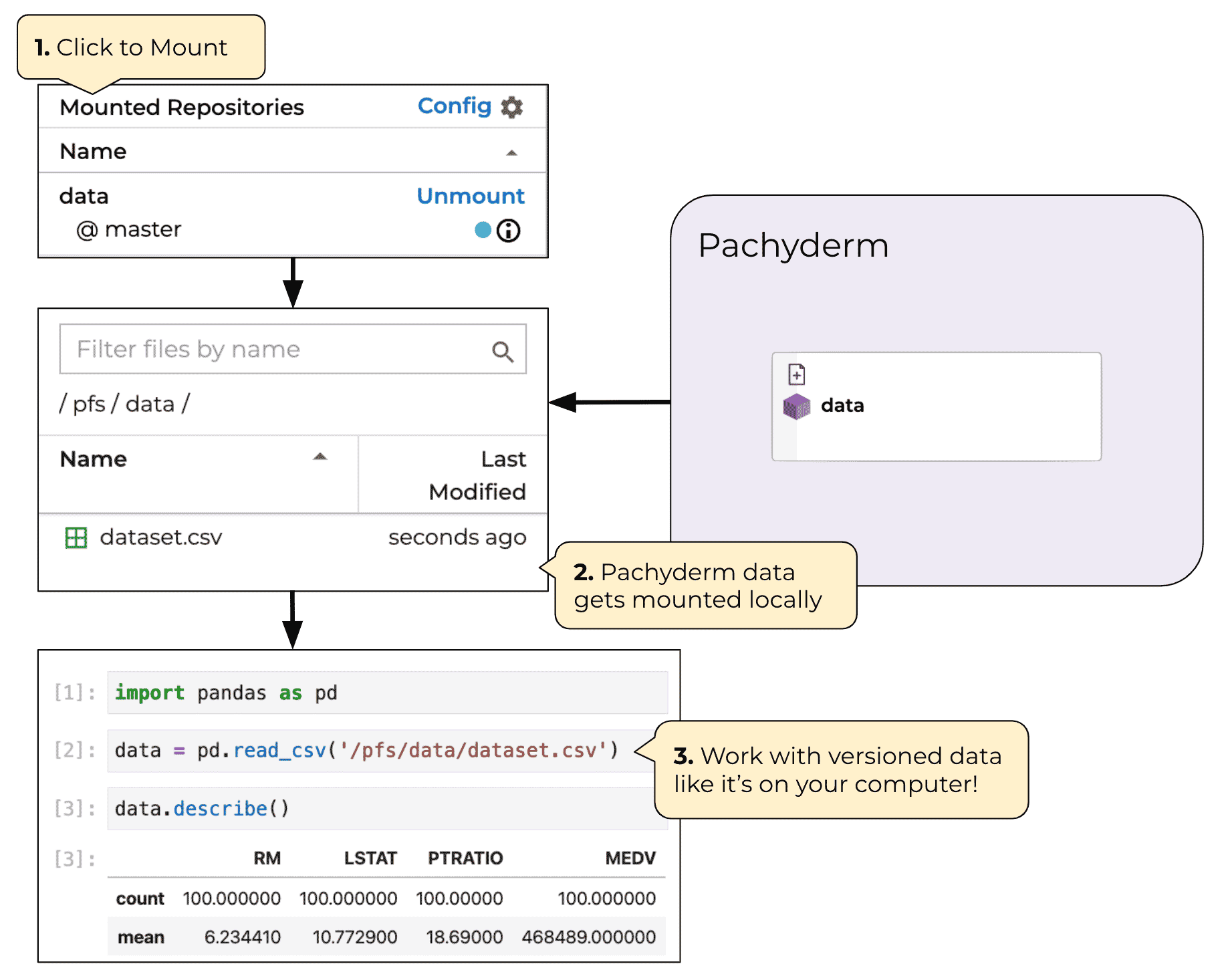

Pachyderm’s strength comes from seamless automation of data science workflows. But before getting to production, data scientists need to quickly iterate on their initial analysis steps. Pachyderm 1.10’s tighter integration with JupyterHub and notebooks was the beginning of our journey to significantly improve the early experimentation workflow for data scientists who want the infrastructure abstracted away. The data mounting improvements in Pachyderm 1.11 deliver the next step.

Pachyderm 1.11 dramatically expands the read-only functionality of pachctl mount to give you a fully writable mount that automatically versions your data in Pachyderm.

As a quick refresher, you use the pachctl mount command to create a local file system copy of your repos, branches and commits. That works great when you want to “check out” your data to experiment with it locally. It’s particularly well-suited to developing inside notebooks. Data is downloaded on demand, so you can mirror an entire repo while your code only fetches the files it actually reads. But in Pachyderm 1.10 and earlier, mount was read-only. Read-only mount works amazingly well for developing code against a fixed dataset, but it’s not designed for developing data.

Pachyderm 1.11’s new, advanced mount adds a --write flag that allows you to dynamically develop a dataset in a commit and have that automatically pushed back to Pachyderm when you’re done. This gives you a similar workflow to Git, but for data. Pull data locally with pachctl mount --write, make changes to that dataset in place, and then push those changes as a new commit back to the central repository when you unmount.

Many ML workflows need people to do the first steps, like labeling data, annotating pictures or creating bounding boxes. In earlier versions of Pachyderm you did these steps locally or in a shared file system and then pushed that data to a Pachyderm repo. Now you can create your repo and do these critical human-in-the-loop steps while maintaining versioning and data lineage. It also makes it easy to check the data out, do some additional work to correct mislabeled data and then quickly iterate on a new model.

You can even automate these mounts and unmounts so that a notebook requests a dataset in the background. The data shows up as needed and when you close your session it creates a commit to Pachyderm automatically. This allows you to have robust data versioning without needing to directly interface with Pachyderm pipelines.
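One way to picture that automation is a small Python helper that keeps a writable mount open for the duration of a `with` block. This is only a sketch under stated assumptions: `writable_mount` is a hypothetical helper name, `pachctl` is assumed to be on your PATH, `pachctl mount` is assumed to stay in the foreground while the mount is active, and `pachctl unmount` is assumed to be what ends the session and triggers the commit described above. The `popen` and `run` parameters exist only so the helper can be exercised without a cluster.

```python
import subprocess
from contextlib import contextmanager


@contextmanager
def writable_mount(mount_point, popen=subprocess.Popen, run=subprocess.run):
    """Hold a writable Pachyderm mount open for the body of a `with` block.

    Hypothetical helper, not part of Pachyderm. It only shells out to the
    `pachctl mount --write` command described in this post.
    """
    # Assumption: `pachctl mount` blocks while mounted, so start it in the
    # background. A real script would also wait until the mount is ready.
    mount_proc = popen(["pachctl", "mount", mount_point, "--write"])
    try:
        yield mount_point
    finally:
        # Unmounting is the step that pushes local changes back as a commit.
        run(["pachctl", "unmount", mount_point], check=True)
        mount_proc.wait()


# Usage (commented out because it needs a running Pachyderm cluster):
# with writable_mount("/tmp/pfs") as root:
#     ...  # edit files under `root`; they are committed on exit
```

Wrapping the mount in a context manager means the unmount, and therefore the commit, still happens if the notebook code in the block raises an exception.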

Higher Job Throughput

Pachyderm 1.11 delivers much better resource utilization so you get decreased time-to-results with zero code changes.

Data pipelines now automatically process data from more than one job at a time, while simultaneously preserving data lineage and integrity. This spreads load across your Kubernetes cluster, allowing jobs to finish faster and more efficiently.

As an example, let’s say that you have a pipeline with parallelism set to a constant of 10. That means up to 10 datums can get processed at once. Let’s assume each datum takes 10 seconds to process and you choose to commit 6 datums for the first job, 3 datums for the second, and then 1 datum for the third.

In earlier versions of Pachyderm, a single job could run highly parallelized, but only one job per pipeline could run at a time. In our example, you’d have had to wait for the 6 datums in the first job to finish before scheduling the second job of 3 datums. Similarly, the second job would need to complete before the third job with 1 datum would get scheduled. Because the jobs ran serially, unused workers would stay idle and the total processing time would stretch to 30 seconds: three jobs at 10 seconds each.

In Pachyderm 1.11, multiple jobs can run at the same time to utilize all available resources. The pipeline accepts the first commit and starts processing it, using up to 6 of the 10 pods available in the pipeline. Since there are 4 pods still available, Pachyderm will now grab the second job’s 3 datums and schedule those, and then the 1 remaining datum goes in the last available pod. All three jobs will get processed at the same time, finishing in about 10 seconds total. No pods stay idle, just waiting around for data. Your time-to-results is 20 seconds faster, a 67% improvement!
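The arithmetic in this example can be checked with a few lines of Python. This is a deliberately simplified model, not Pachyderm's actual scheduler: it assumes all three commits are already waiting, every datum takes exactly 10 seconds, and any idle worker can pick up any datum. The function names are illustrative only.

```python
import math


def serial_seconds(job_datums, workers, seconds_per_datum):
    """One job at a time: each job runs in waves of up to `workers` datums."""
    return sum(math.ceil(n / workers) * seconds_per_datum for n in job_datums)


def concurrent_seconds(job_datums, workers, seconds_per_datum):
    """Jobs share the worker pool: all datums are packed into common waves."""
    return math.ceil(sum(job_datums) / workers) * seconds_per_datum


jobs = [6, 3, 1]  # datums committed for the first, second, and third job
serial = serial_seconds(jobs, workers=10, seconds_per_datum=10)
concurrent = concurrent_seconds(jobs, workers=10, seconds_per_datum=10)
improvement = (serial - concurrent) / serial

print(serial, concurrent)    # 30 10
print(f"{improvement:.0%}")  # 67%
```

The same two functions make it easy to try other job sizes. The gap narrows as individual jobs grow large enough to keep all workers busy on their own.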

In addition, 1.11’s new job model would even allow later jobs to finish before earlier ones in specific scenarios where they have no datums in common. Best of all, this increased throughput doesn’t require a single code change on your part. It all happens automatically. Here’s John Karabaic from our Customer Success team to show you more:

Support for OpenID Connect

In addition to the Security Assertion Markup Language (SAML) standard, Pachyderm now supports OpenID Connect, a simple identity layer that sits on top of the OAuth 2.0 protocol. OpenID Connect delivers on its design philosophy of “making simple things simple and complicated things possible” by using straightforward REST/JSON message flows that are much easier for developers to integrate than other identity protocols.
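To give a feel for how lightweight those message flows are, here is a sketch of the first step of the standard OpenID Connect authorization code flow: building the URL that sends a user to the identity provider. It is generic OIDC, not Pachyderm's own configuration or code. The endpoint, client ID, and redirect URI are placeholder values, and `build_auth_request` is a hypothetical helper name.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def build_auth_request(authorization_endpoint, client_id, redirect_uri):
    """Return (url, state) for an OIDC authorization code flow request."""
    # `state` is a random value echoed back by the provider; the client
    # compares it on return to guard against cross-site request forgery.
    state = secrets.token_urlsafe(16)
    params = {
        "response_type": "code",   # ask for an authorization code
        "scope": "openid email",   # "openid" marks this as an OIDC request
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "state": state,
    }
    return f"{authorization_endpoint}?{urlencode(params)}", state


# Placeholder values for illustration only.
url, state = build_auth_request(
    "https://idp.example.com/authorize",
    "pachyderm-demo",
    "http://localhost:8080/callback",
)
query = parse_qs(urlparse(url).query)
print(query["scope"])  # ['openid email']
```

After the user signs in, the provider redirects back with a short-lived code that the application exchanges for a JSON response containing an ID token, so the application never handles the user's password.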

It lets you connect with major certified OpenID Connect providers like Google, Microsoft, Salesforce, and PayPal, as well as anyone else that uses the standard. Most importantly, it lets developers get out of the dangerous business of storing and managing other people’s passwords. With data breaches growing dramatically every year, every little bit of extra security helps keep your applications and their integrations with Pachyderm safe.

Support for the OIDC protocol expands the Pachyderm Enterprise Edition feature set, so organizations that prefer federated authentication can now choose between two popular standards, SAML and OIDC. OIDC support has been successfully tested with popular identity providers like Keycloak and Okta.

Beta Features and the Road Ahead

There are tons of exciting new features and products coming soon in 2020. A few highlights:

- Pachyderm Hub, our fully hosted Pachyderm offering, is hitting General Availability next month. Pachyderm Hub takes care of all the Kubernetes and cloud infrastructure management for you, while giving you a slick new interface to manage your Pachyderm deployments. It even includes fully managed autoscaling and GPU support. Check out the beta version now for free!

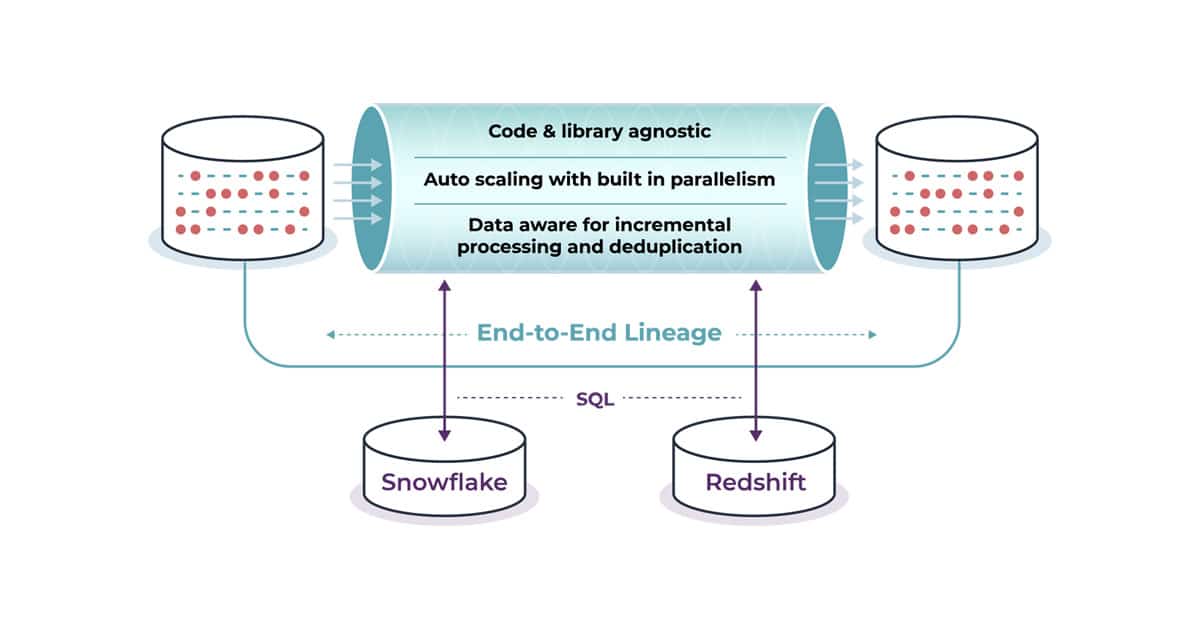

- Pachyderm 1.11 includes an exciting new feature in beta: build pipelines. Having to build a Docker image every time you change your code, push it to a registry, and then tell Pachyderm pipelines to use that new image can be cumbersome. Build pipelines streamline that process by letting you define transform.build to automate it all directly in Pachyderm. Check out the PR directly on GitHub. Full documentation will be added soon.

- Keep an eye out for Pachyderm 2.0 coming in late 2020 or early 2021. It’ll include a fully revamped storage engine, massive scalability improvements, automatic garbage collection, data retention policies, and much more!