This content refers to an old product release. For the latest integration, please read Introducing Pachyderm’s JupyterLab Mount Extension.

Pachyderm 1.10 just hit our Git repo and we’re super excited to announce support for JupyterHub. We love the way JupyterHub makes it simple for teams of data scientists to collaborate while also offering individual, sandboxed environments for development.

This is a big deal for teams of data scientists who frequently face the challenge of trying to collaborate at scale on different projects.

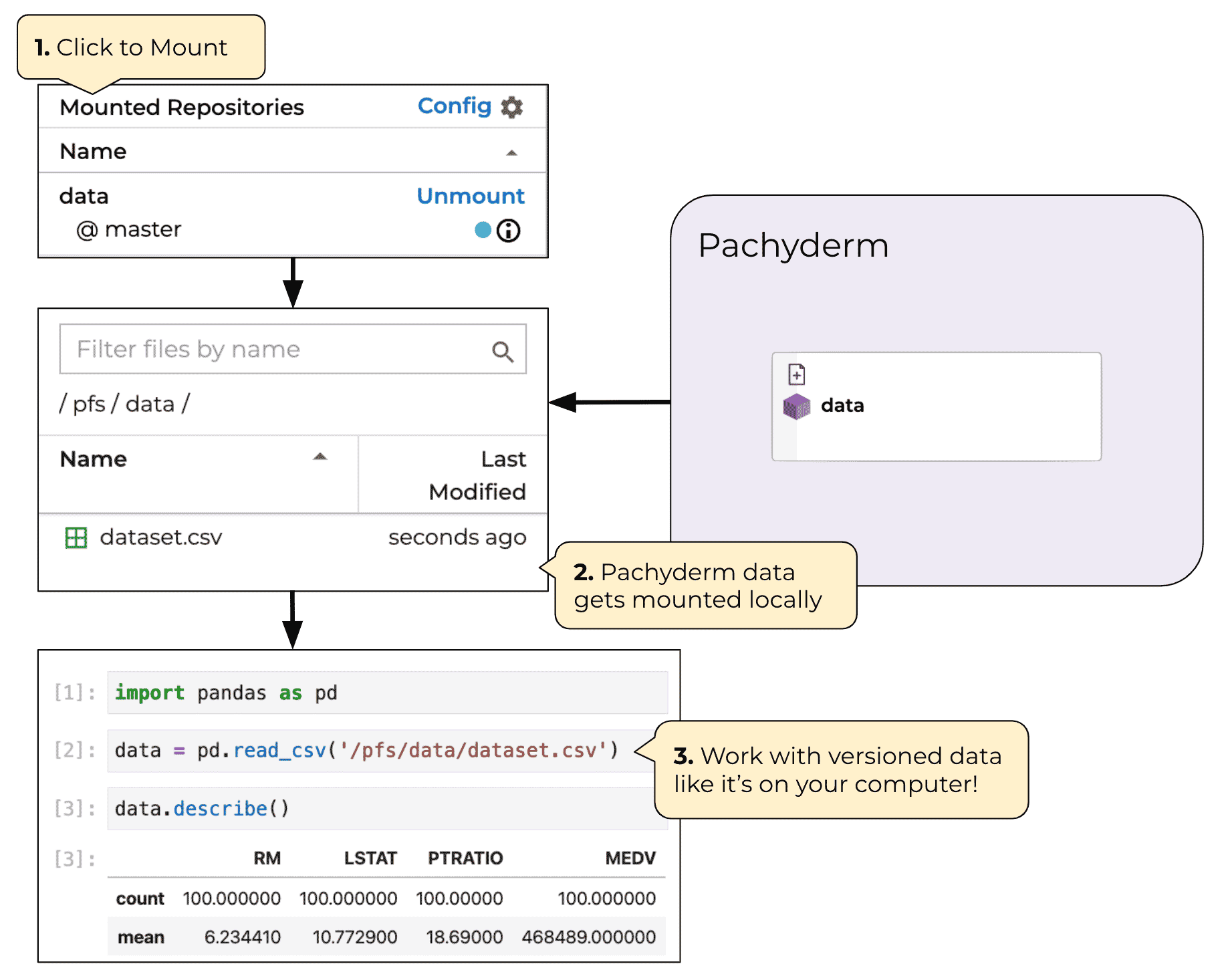

Most data scientists need no introduction to JupyterHub. You’re already using it all the time, so let’s dig right in and take a look at how you can use it with the Pachyderm data lineage platform.

Implementing Pachyderm and JupyterHub together

In this example, you’re going to use Pachyderm: Enterprise, and you can get a FREE 30-day trial just by registering via the Pachyderm Dashboard. While spinning up a local cluster on Minikube or Docker Desktop is fast and relatively painless, Pachyderm Hub makes it easy to spin up a cluster in seconds.

We’ll leverage Pachyderm authentication and the Pachyderm Python client library to connect the two systems together. Once that’s done, Pachyderm authentication will take care of all the rest and you’ll be able to log into JupyterHub with your Pachyderm credentials.

From there, you and your team can run code in JupyterHub and use the python-pachyderm API to create your pipelines and version your data in Pachyderm from within the JupyterHub UI.

Prerequisites

Confirm everything with a simple:

pachctl enterprise get-state

Deploying JupyterHub

The first thing you need to do is set up Pachyderm Auth:

pachctl auth activate

Follow the instructions provided, and with just a few clicks you’ll have a working Pachyderm Auth system using the GitHub OAuth flow. This can easily be customized if you want to use another auth system such as SAML; head over to https://docs.pachyderm.com/latest/reference/pachctl/pachctl_auth/ for more information. You can confirm everything is set up correctly by running:

pachctl auth whoami
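If you’d rather script the two checks above than run them by hand, here is a minimal sketch. It only shells out to the two commands already shown in this post (pachctl enterprise get-state and pachctl auth whoami); the helper names pachctl_ok and failed_checks and the injectable runner parameter are hypothetical and exist purely for illustration. It assumes a non-zero exit status signals a problem. An exit status of 0 does not by itself prove that Enterprise is active, so still read the command output.

```python
import subprocess


def pachctl_ok(args, runner=subprocess.run):
    """Return True if `pachctl <args>` exits with status 0.

    `runner` is injectable (hypothetical convenience) so the helper can be
    exercised without a real cluster.
    """
    try:
        result = runner(["pachctl", *args], capture_output=True, text=True)
    except FileNotFoundError:
        # pachctl is not installed or not on PATH
        return False
    return result.returncode == 0


def failed_checks(runner=subprocess.run):
    """Run both checks from this post and return the names of those that failed."""
    checks = {
        "enterprise": ["enterprise", "get-state"],
        "auth": ["auth", "whoami"],
    }
    return [name for name, args in checks.items() if not pachctl_ok(args, runner)]
```

Calling failed_checks() on a machine where both commands succeed returns an empty list; anything else tells you which step to revisit.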

Next, deploy JupyterHub. We provide a helper script (init.py) to ease JupyterHub deployments. It sets reasonable defaults for JupyterHub and has a few options for debugging and configuration (see ./init.py --help for details).

Run ./init.py

If there’s a firewall between you and the Kubernetes cluster, make sure to punch a hole so you can connect to port 80 on it. See cloud-specific instructions here. If you need to customize your JupyterHub deployment more than what init.py offers, see our setup guide.

Using JupyterHub

Once deployed, navigate to your JupyterHub instance:

By default, it should be reachable on port 80 of your cluster’s hostname. On Minikube, navigate to one of the URLs printed out when you run minikube service proxy-public --url.

You should reach a login page; if you don’t, see the troubleshooting section.
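Before opening a browser, you can probe the address from a script. The sketch below uses only the Python standard library; the function name hub_is_reachable is hypothetical, and the URL you pass in is whatever address your deployment exposes. It treats any HTTP response, including an error status, as proof that something is listening, so it cannot tell you whether the page served is actually the JupyterHub login page.

```python
import urllib.error
import urllib.request


def hub_is_reachable(url, timeout=5.0):
    """Return True if an HTTP request to `url` receives any HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just not with a success status
        return True
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, timeout, and similar
        return False
```

A False result usually points at the firewall or service exposure rather than at JupyterHub itself.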

To log in to JupyterHub, run pachctl auth get-otp to get a fresh one-time password (OTP). Leave the username blank, and enter the OTP, including the otp/ prefix, as the password.

pachctl auth get-otp

otp/f3fe5f550bc6449abc07db0faac6c265
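A common stumbling block is pasting the token without its otp/ prefix. As a small sanity check, here is a sketch that tests whether a string has the same shape as the example above. The pattern (otp/ followed by 32 lowercase hex characters) is inferred from that single example and is an assumption, not a documented format, and the name looks_like_otp is hypothetical.

```python
import re

# Assumption: shape inferred from the one example token shown in this post.
OTP_PATTERN = re.compile(r"otp/[0-9a-f]{32}")


def looks_like_otp(value):
    """Return True if `value` (ignoring surrounding whitespace) matches the example's shape."""
    return OTP_PATTERN.fullmatch(value.strip()) is not None
```

If this returns False for a token you copied, check first that the otp/ prefix came along with it.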

Once you’re logged in, you should be able to connect to the Pachyderm cluster from within a JupyterHub notebook; e.g., if Pachyderm auth is enabled, try this:

import python_pachyderm

client = python_pachyderm.Client.new_in_cluster()

print(client.who_am_i())

And there you have it! JupyterHub and Pachyderm are both deployed and ready for large-scale collaboration.

Building a basic Pachyderm pipeline in JupyterHub

Now that JupyterHub and Pachyderm are up and running, you’ll walk through creating a basic Pachyderm pipeline using our simple opencv demo.

In JupyterHub, create a directory called edges and add these files to it:

- A main.py with these contents. Make sure that it’s a Python file rather than a notebook.

- A requirements.txt with these contents.

Together, these files represent the edges pipeline that we’ll create from JupyterHub.
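To make the shape of such a main.py concrete, here is a stand-in sketch. It is not the actual file from the opencv demo: the real one performs edge detection, whereas the placeholder process_file below just copies each input file. What it does illustrate is the usual Pachyderm convention that a pipeline reads its input repo under /pfs/images and writes results to /pfs/out. The function names and the directory parameters are hypothetical, added so the logic can be tried outside a cluster.

```python
import os
import shutil


def process_file(src_path, out_dir):
    """Placeholder transform: copy the input file into the output directory.

    The real edges pipeline would run edge detection here instead.
    """
    os.makedirs(out_dir, exist_ok=True)
    dst_path = os.path.join(out_dir, os.path.basename(src_path))
    shutil.copyfile(src_path, dst_path)
    return dst_path


def run(input_dir="/pfs/images", out_dir="/pfs/out"):
    """Process every file under input_dir and return the paths that were written."""
    written = []
    for dirpath, _dirs, files in os.walk(input_dir):
        for name in sorted(files):
            written.append(process_file(os.path.join(dirpath, name), out_dir))
    return written


# Only run automatically when the Pachyderm input mount is present.
if __name__ == "__main__" and os.path.isdir("/pfs/images"):
    run()
```

Keeping the directories as parameters means you can point run() at a scratch folder in a notebook before handing the file to Pachyderm.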

Now, run this in a notebook in the directory above edges:

import os

import python_pachyderm

client = python_pachyderm.Client.new_in_cluster()

# Create a repo called images

client.create_repo("images")

# Create a pipeline specifically designed for executing python code. This

# is equivalent to the edges pipeline in the standard opencv example.

python_pachyderm.create_python_pipeline(

client,

"./edges",

python_pachyderm.Input(pfs=python_pachyderm.PFSInput(glob="/*", repo="images")),

)

# Create the montage pipeline

client.create_pipeline(

"montage",

transform=python_pachyderm.Transform(cmd=["sh"], image="v4tech/imagemagick", stdin=["montage -shadow -background SkyBlue -geometry 300x300+2+2 $(find /pfs -type f | sort) /pfs/out/montage.png"]),

input=python_pachyderm.Input(cross=[

python_pachyderm.Input(pfs=python_pachyderm.PFSInput(glob="/", repo="images")),

python_pachyderm.Input(pfs=python_pachyderm.PFSInput(glob="/", repo="edges")),

])

)

client.put_file_url("images/master", "46Q8nDz.jpg", "http://imgur.com/46Q8nDz.jpg")

with client.commit("images", "master") as commit:

client.put_file_url(commit, "g2QnNqa.jpg", "http://imgur.com/g2QnNqa.jpg")

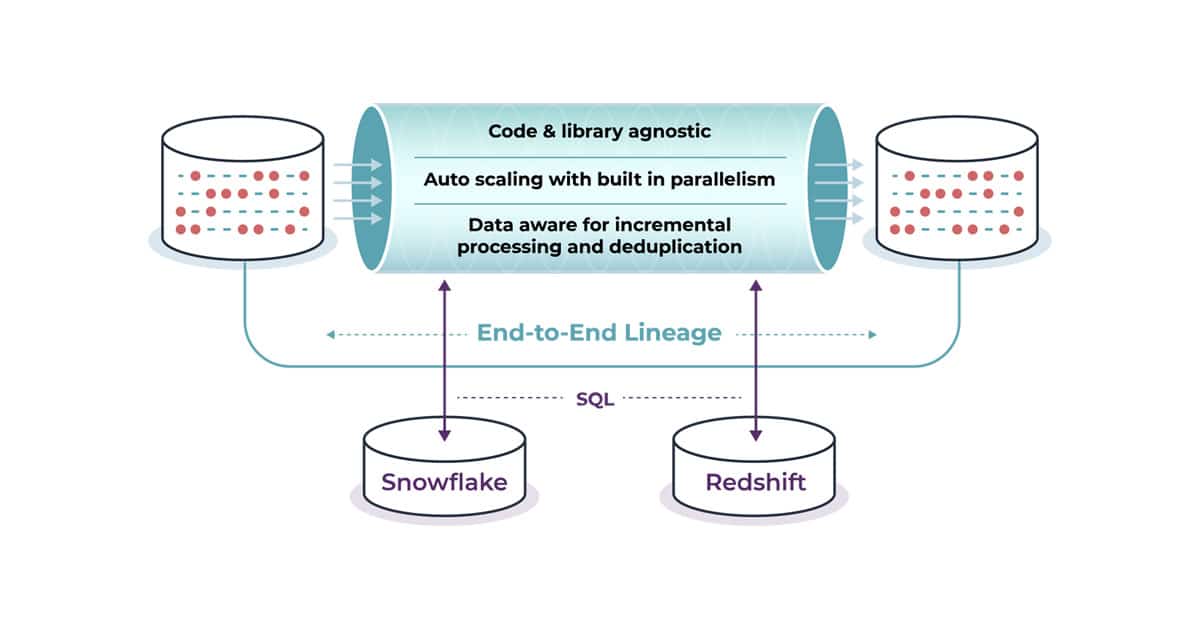

client.put_file_url(commit, "8MN9Kg0.jpg", "http://imgur.com/8MN9Kg0.jpg")The code here is pretty straight-forward and as you can see, we leverage the python-pachyderm to declare everything we need to build an end-to-end data science pipeline. Now you and your data science team can focus on what matters, and Pachyderm/JupyterHub will take care of the rest.

If you and your team want a private demo of what we’ve covered, just head over to pachyderm.com/see-pachyderm-in-action-demo and someone from our customer success team will be in touch shortly.