In our last blog, we talked about version control and how it focuses on tracking and managing changes in software development.

But what about version control in data science?

In many industries, such as healthcare and finance, reproducibility and model compliance are requirements for machine learning. But version control can do a lot more for data science: it can make your experimentation faster and more reliable, and even help coordinate changes when working with a team.

Version Control for Machine Learning

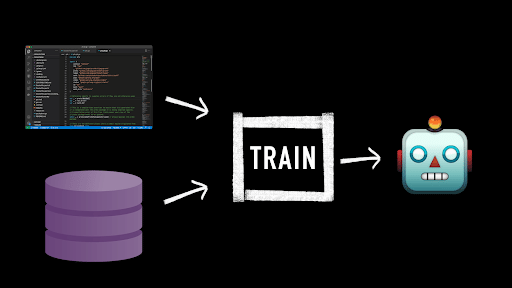

The main difference between version control for machine learning and version control for software development is that there are more moving pieces – the two main ones being machine learning code and data.

When applying machine learning in the real world, it’s common to see development happening on both sides of the machine learning loop. We constantly incorporate new observations into our dataset to keep our model relevant, while also applying new techniques and training methods in our model development.

So what we end up with is a symbiotic relationship between our code and our data.

Manually managing the interactions of our code with our data can become unwieldy. For example, if our model is making an incorrect prediction, it could be an issue with our code or with our data. To investigate, we need to be able to trace the source of the error through the history of changes to our code and configuration, as well as any changes to our data.

This is especially important when outputs need to be reproducible, because we must be able to recover the correct versions of each component that contributed to the outcome.

In data science, this version control requires a history of all the different parts that came together to create our model. This includes code, configuration (such as hyperparameters), and data. Even the versions of the libraries we’re using can have a huge impact on the outcome of our model.
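To make this concrete, here is a minimal sketch of what recording that history for a single training run could look like. Everything in it is an assumption for illustration: the `build_manifest` helper, the manifest field names, and the commit id `abc1234` are hypothetical, and in practice the code version would come from your version control system rather than a hard-coded string.

```python
import hashlib
import json
import platform
from importlib import metadata


def sha256_bytes(payload: bytes) -> str:
    """Return a hex digest that identifies the exact bytes we were given."""
    return hashlib.sha256(payload).hexdigest()


def library_versions(names):
    """Look up installed versions; None means the package is not installed."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def build_manifest(code_version, hyperparameters, data_bytes, libraries=()):
    """Collect the parts that came together to create a model (hypothetical format)."""
    # Sorting keys makes the config hash independent of dictionary ordering.
    config_json = json.dumps(hyperparameters, sort_keys=True)
    return {
        "code_version": code_version,
        "config": hyperparameters,
        "config_hash": sha256_bytes(config_json.encode("utf-8")),
        "data_hash": sha256_bytes(data_bytes),
        "python": platform.python_version(),
        "libraries": library_versions(libraries),
    }


manifest = build_manifest(
    code_version="abc1234",  # hypothetical commit id
    hyperparameters={"learning_rate": 0.01, "epochs": 10},
    data_bytes=b"feature,label\n1.0,0\n2.0,1\n",  # stand-in for a real dataset
    libraries=["numpy"],
)
print(json.dumps(manifest, indent=2))
```

If two runs produce different models, comparing their manifests shows at a glance whether the code, the configuration, the data, or a library version changed.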

Simply put, there are 3 areas that need to be considered when applying version control tools to data science.

- Code

- Data

- Pipelines (Code + Data)

Version Control for Code

As we saw in our previous video, version control for code is largely solved with tools like Git and GitHub. We can reliably version our code and collaborate on it with a team.

Version Control for Data

In the case of data, we also need something that can track and version our changes, but this is a very different problem. If we take a look at Git, it is specifically designed for code in the form of text files. So when it comes to data, there’s really a mismatch.

And this mismatch occurs for a few reasons. Machine learning datasets are typically:

- Bigger in size – datasets tend to be larger than code files.

- Growing and changing at an exponential rate.

- Drawn from sources with many different types and formats – from text to IoT, structured data to video.

- Processed in many stages – for instance, we may have a raw interaction from a user, but this usually undergoes a host of processing before it gets to our model training.

- Subject to privacy challenges (GDPR, CCPA, or HIPAA).

In order to find tooling that can version and manage our data, we need something that satisfies all of these requirements.

Most tooling tends to rely on object storage at some level, but when evaluating tooling we must also consider the other components of version control, such as storage costs, workflow, and collaboration.
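One common way to build data versioning on top of object storage is content addressing: each file is stored under the hash of its contents, and a version is just a mapping from file names to hashes. The sketch below illustrates the idea only; the `DatasetStore` class is hypothetical, a local temporary directory stands in for an object store, and it is not how any particular tool is implemented.

```python
import hashlib
import tempfile
from pathlib import Path


class DatasetStore:
    """Toy content-addressed store; a local directory stands in for object storage."""

    def __init__(self, root):
        self.objects = Path(root) / "objects"
        self.objects.mkdir(parents=True, exist_ok=True)
        self.versions = {}  # version name -> {file name: content hash}

    def put(self, content: bytes) -> str:
        """Store content under its hash; identical content is stored only once."""
        digest = hashlib.sha256(content).hexdigest()
        target = self.objects / digest
        if not target.exists():
            target.write_bytes(content)
        return digest

    def commit(self, version, files):
        """Record a named version as a mapping of file names to content hashes."""
        self.versions[version] = {name: self.put(data) for name, data in files.items()}
        return self.versions[version]

    def checkout(self, version):
        """Recover the exact bytes of every file in a recorded version."""
        return {
            name: (self.objects / digest).read_bytes()
            for name, digest in self.versions[version].items()
        }


with tempfile.TemporaryDirectory() as root:
    store = DatasetStore(root)
    store.commit("v1", {"train.csv": b"a,b\n1,2\n", "labels.csv": b"0\n"})
    # v2 adds a row to train.csv; labels.csv is unchanged and is not stored again.
    store.commit("v2", {"train.csv": b"a,b\n1,2\n3,4\n", "labels.csv": b"0\n"})
    stored_objects = len(list(store.objects.iterdir()))
    v1_train = store.checkout("v1")["train.csv"]
```

Because unchanged files share the same hash across versions, they are stored once, which is one reason storage cost is worth weighing when comparing approaches.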

Version Control for Machine Learning Pipelines

The third piece of the puzzle for reproducibility is tracking how we’re combining our data with our code. The simplest example of this is the model training process, but there are usually many stages before we get there, where we’re preprocessing or formatting our datasets to be ready for training.

These pipelines need to contain all of our code and configuration along with any library or system requirements that may be needed to transform data into the expected output.
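As a rough sketch of what tracking these pieces together might look like, the example below fingerprints each pipeline stage from its code, configuration, requirements, and input, then chains the fingerprints so that a change anywhere upstream shows up in every downstream stage. The `PipelineStage` class, the `lineage` function, and the stage contents are all hypothetical and chosen purely for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PipelineStage:
    """One step of a pipeline: its code, configuration, and library requirements."""
    name: str
    code: str  # source text of the step (hypothetical stand-in for a script)
    config: dict = field(default_factory=dict)
    requirements: tuple = ()

    def fingerprint(self, input_hash: str) -> str:
        """Hash everything that can influence this stage's output."""
        payload = json.dumps(
            {
                "code": hashlib.sha256(self.code.encode("utf-8")).hexdigest(),
                "config": self.config,
                "requirements": sorted(self.requirements),
                "input": input_hash,
            },
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def lineage(stages, data_hash):
    """Chain stage fingerprints so each output id depends on everything upstream."""
    records = []
    current = data_hash
    for stage in stages:
        current = stage.fingerprint(current)
        records.append((stage.name, current))
    return records


raw_data_hash = hashlib.sha256(b"raw user interactions").hexdigest()
stages = [
    PipelineStage("preprocess", "def clean(rows): ...", {"drop_nulls": True}),
    PipelineStage("train", "def fit(data): ...", {"epochs": 10}, ("numpy==1.26.4",)),
]
for name, output_id in lineage(stages, raw_data_hash):
    print(name, output_id[:12])
```

Re-running with the same code, configuration, requirements, and data yields the same ids, while changing any one of them produces new ids from that stage onward, which gives a simple way to tell which outputs need to be recomputed.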

Tracking each of these components is where the complexity begins to creep in. Usually, you have to sacrifice one or more of them with most tools, or commit to building glue software to manage the interactions yourself.

As we saw with Git, it is a great tool for managing our code locally, but it requires something more to manage collaboration across a team.

So the real question is: how do we version our data while also ensuring that, when code is applied to it, we can track what’s happened in a reliable and reproducible way?

Read the next post in this series: What is Data Lineage? We’ll talk about how we’re trying to solve these problems from the bottom up with Pachyderm: how we version and manage data, and how you can create reproducible pipelines that give you a full lineage of everything that’s happened.

Check out our corresponding video to learn more about Data Versioning for Data Science.