A data pipeline is essential to a data science team’s success. It helps standardize how machine learning models are created and run at scale, so that everyone is on the same page.

Data pipelines can benefit an organization in many ways, but it’s important to understand their purpose and the elements they include.

What are data pipelines?

Data pipelines capture and deliver the information that’s being used in a machine learning model. It’s how a team determines what data they want to process, what needs to happen, and where it needs to go next.

In other words, a data pipeline is a set of tools that are used to extract, transform, and load data from one or more sources into a target system. It’s broken down into three steps: data sourcing, data processing, and data delivery. Here’s a breakdown of how it all works:

| Data Sources ➡️ | Data Processing ➡️ | Data Delivery |
| --- | --- | --- |
| Cloud storage | Labeling | Next pipeline |
| Streaming data | Cleanup | Data warehouse |
| Data warehouse | Filtering | Data lake |
| On-premises server | Transformation | Analytics connector |
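
The three steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: the record fields (`text`, `label`), the in-memory list standing in for a data source, and the function names are all assumptions made for the example.

```python
from typing import Iterable

# Hypothetical in-memory "source"; a real pipeline would read from cloud
# storage, a stream, a data warehouse, or an on-premises server.
RAW_RECORDS = [
    {"text": "  Great product ", "label": "positive"},
    {"text": "", "label": "negative"},  # empty text, filtered out below
    {"text": "Not for me", "label": "NEGATIVE"},
]


def source(records: Iterable[dict]) -> Iterable[dict]:
    """Data sourcing: yield raw records one at a time."""
    yield from records


def process(records: Iterable[dict]) -> Iterable[dict]:
    """Data processing: clean up, filter, and transform each record."""
    for record in records:
        text = record["text"].strip()
        if not text:
            continue  # filtering: drop records with no usable text
        yield {"text": text.lower(), "label": record["label"].lower()}


def deliver(records: Iterable[dict], target: list) -> list:
    """Data delivery: load processed records into a target."""
    target.extend(records)
    return target


warehouse: list = []  # stands in for a warehouse, lake, or next pipeline
deliver(process(source(RAW_RECORDS)), warehouse)
print(warehouse)
```

Because each step only consumes and yields records, any one of them can be swapped out (a different source, an extra transformation) without touching the others.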

How are data pipelines used in machine learning?

We know that machine learning models cannot function without data. Data pipelines are the lifeblood of machine learning. They are responsible for collecting, processing, and storing data that will be used to train and test models.

For your machine learning project to succeed, your pipeline needs to be well maintained from start to finish. Data pipelines are the steps that connect your data to your model, and they govern all of the cleaning, labeling and processing steps along the way.

What can a data pipeline mean in ML architecture?

Functionally, a pipeline can refer to a single step or the fully connected network of pipelines needed for machine learning. A singular pipeline is a function moving data between two points in a machine learning process.

A connected pipeline, more accurately known as a directed acyclic graph (DAG) or microservice graph, typically starts with a raw input, usually a text file or some type of structured data. This input goes through one or more transformations, which often involve cleaning, preparing and processing it to extract the features that are relevant for the model. These features are then fed into an algorithm to train the model, which then generates an output prediction.
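
To make the DAG idea concrete, here is a small sketch that wires four toy steps together and runs them in dependency order using `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+). The step names, the toy "model" (an average word count), and the `run` helper are assumptions for illustration only; they are not how any particular tool defines its pipelines.

```python
from graphlib import TopologicalSorter


def clean(raw):
    """Strip whitespace, lowercase, and drop empty lines."""
    return [line.strip().lower() for line in raw if line.strip()]


def extract_features(cleaned):
    """Toy feature: number of words per line."""
    return [len(line.split()) for line in cleaned]


def train(features):
    """Toy "model": the mean word count."""
    return sum(features) / len(features)


def predict(model, features):
    """Toy prediction: is each line longer than average?"""
    return [count > model for count in features]


# Each step maps to (function, names of the steps it depends on).
STEPS = {
    "clean": (clean, ["raw"]),
    "features": (extract_features, ["clean"]),
    "model": (train, ["features"]),
    "predictions": (predict, ["model", "features"]),
}


def run(raw_input):
    """Run every step once, after all of its dependencies have run."""
    results = {"raw": raw_input}
    graph = {name: set(deps) for name, (_, deps) in STEPS.items()}
    for name in TopologicalSorter(graph).static_order():
        if name in results:  # "raw" is supplied by the caller
            continue
        func, deps = STEPS[name]
        results[name] = func(*(results[dep] for dep in deps))
    return results


results = run(["  Hello world ", "", "One two three four"])
print(results["predictions"])
```

`TopologicalSorter` raises `CycleError` if the steps depend on each other in a loop, which is exactly the "acyclic" guarantee a DAG is meant to provide.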

Data vs Datum

It’s also important to distinguish between data and datum. A datum is a single entry, element or instance in a data set, which may itself be very large.

Think about it this way: if you’re training an image classifier, then a single image is your datum. That’s the minimum amount of data you can run the training process on. If you’re training on videos, your datum is a video clip. No matter what you’re doing, some partitioning of the data will constitute a datum.

Keep in mind that sometimes you need all of the data to do something useful. This is frequently the case if your data represents a genome. In that case, the whole thing is one big datum.
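
One way to picture this is as a choice of how to partition the same set of files. The sketch below shows three partitionings: one datum per file, one datum per directory, and everything as a single datum. The helper names and file paths are hypothetical and exist only for this example; they are not part of any library's API.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def datums_per_file(paths):
    """Each file is its own datum (e.g. one image per datum)."""
    return [[path] for path in sorted(paths)]


def datums_per_directory(paths):
    """All files that share a parent directory form one datum."""
    groups = defaultdict(list)
    for path in sorted(paths):
        groups[str(PurePosixPath(path).parent)].append(path)
    return [groups[key] for key in sorted(groups)]


def single_datum(paths):
    """Everything is one big datum (e.g. a whole genome)."""
    return [sorted(paths)]


# Hypothetical file listing used only for illustration.
paths = ["images/dog.png", "images/cat.png", "videos/clip1.mp4"]

print(len(datums_per_file(paths)))       # one datum per file
print(len(datums_per_directory(paths)))  # one datum per directory
print(len(single_datum(paths)))          # one datum in total
```

The finer the partitioning, the more independently (and in parallel) the pieces can be processed, as long as each datum still contains everything a step needs to do useful work.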

Read more about how datum-oriented pipelines enable faster, more effective machine learning.

What are data pipelines used for?

Data pipelines move your data through the different processes involved in machine learning. When planning your ML stack, the type of data you will be processing is key. With the proliferation of both business and consumer data, companies are faced with an ever-increasing need to store, retrieve, and analyze data in many file formats and storage locations like a data warehouse, on-premises database, data lake, etc.

The Types of Data used by Pipelines:

- Streaming Data is live data ingested for transformation, labeling and processing in use cases like sentiment analysis, financial forecasting, or weather prediction. It differs from traditional data because it is often not stored for future analysis, but rather processed and analyzed as it streams live.

- Structured data is tabular data, found in a database or data warehouse. It is commonly known for being highly organized so that teams can easily search, make needed changes or analyze the data.

- Unstructured data is typically rich media like long-form text, audio and video. It is commonly estimated to account for around 80% of data in enterprises. This type of data has traditionally been difficult to manage, store and analyze because it doesn’t have a predefined format or structure. Today, however, technologies such as artificial intelligence and machine learning are being used to tackle this issue by automatically organizing information.

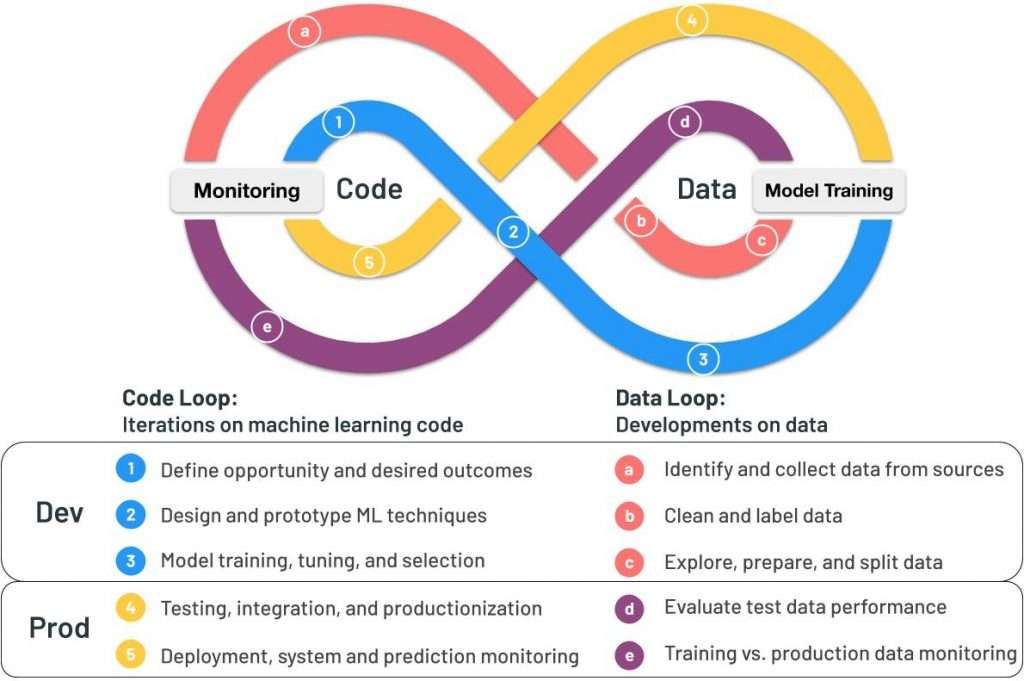

Data Processing

Every step your data takes across the code-data loop can be managed by your data pipeline. To understand your data’s path through the machine learning loop, it helps to know the different phases in which your data can be processed.

- Data Ingestion – The pipeline connects to your data storage source and retrieves data based on your parameters.

- Data Transformation (ETL) – This is the phase between ingestion and delivery, where data must be prepared for your model – cleaned, standardized, labeled, tagged and transformed.

- Model Processing – This is where training, testing, and running your model in production happens.

- Model Management – This refers to storing and versioning the code used to build your ML model.

- Model Output – These are model results with lineage and versioning.
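
As a rough sketch of what "model results with lineage and versioning" can mean in practice, the example below stores a model output together with a fingerprint of the input data and the version of the code that produced it. The record layout, the `fingerprint` and `run_with_lineage` helpers, and the version string are assumptions for illustration; real systems track lineage in their own ways.

```python
import hashlib
import json


def fingerprint(obj) -> str:
    """Return a stable SHA-256 hash of any JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_with_lineage(data, code_version, model_fn):
    """Run model_fn on data and record what produced the result."""
    output = model_fn(data)
    return {
        "output": output,
        "lineage": {
            "input_hash": fingerprint(data),  # which data went in
            "code_version": code_version,     # which code ran
            "output_hash": fingerprint(output),
        },
    }


def mean(rows):
    """Toy stand-in for a model."""
    return sum(rows) / len(rows)


# "v1.0.0" is a hypothetical version label, e.g. a git tag or commit.
record = run_with_lineage([1, 2, 3], "v1.0.0", mean)
print(json.dumps(record, indent=2))
```

With a record like this, two results can be compared by their input hash and code version to tell whether a difference came from the data, the code, or both.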

Learn more with this on-demand webinar:

Pachyderm 101: Installation and Core Concepts

How do data pipelines impact your MLOps workflow?

Data pipelines are the backbone of machine learning operations (MLOps). They are used to store and process data, and they play a key role in the development of ML models. Here are just a few ways data pipelines have an impact on your MLOps workflow.

Processing and Delivery

Data pipelines reduce processing time by making better use of available resources and unifying data from multiple sources in real time.

Pachyderm’s Spouts can ingest live streaming data for pipelines.

Cross-functional Dependencies

Containerization improves collaboration between scientists and engineers on pipeline creation. Pipelines can also be structured to send alerts to specific users when a problem needs immediate attention.

Pachyderm simplifies some of these dependencies with simplified DAG creation in Pachyderm Console.

Data-driven Pipelines

Automated data-driven pipelines that use global IDs can process new data as it arrives, unlike a more static DAG that must send all data through all steps. This helps teams make the best possible decisions without waiting on stale information.
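
The difference is easy to see in a toy example. The sketch below keeps a result for every datum it has already processed, keyed by a content hash, so that a second run only does work for datums it has not seen before. The `IncrementalPipeline` class is hypothetical, and the content hash merely stands in for whatever identifier a real system uses; this is not a description of Pachyderm's implementation.

```python
import hashlib


class IncrementalPipeline:
    """Run a step only on datums that have not been processed before."""

    def __init__(self, step):
        self.step = step
        self.results = {}  # datum content hash -> cached result
        self.calls = 0     # how many times the step actually ran

    def process(self, datums):
        outputs = []
        for datum in datums:
            key = hashlib.sha256(datum.encode("utf-8")).hexdigest()
            if key not in self.results:  # new datum: do the work
                self.results[key] = self.step(datum)
                self.calls += 1
            outputs.append(self.results[key])
        return outputs


pipeline = IncrementalPipeline(str.upper)  # str.upper is a toy step
pipeline.process(["a", "b"])               # both datums are new
pipeline.process(["a", "b", "c"])          # only "c" is new
print(pipeline.calls)
```

A static approach would have run the step five times across the two calls; here the step runs once per distinct datum.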

Lineage and Analysis

Reproducibility is the key to building internal trust and buy-in for machine learning programs. Without lineage, data hygiene is a manual and error-prone process, which is why data-driven pipelines are critical.

Data Flows Must be the Backbone of your MLOps stack

As the vehicle for moving your data, your pipelines are a critical part of your MLOps infrastructure. Building your tech stack for interoperability is key to reproducibility, and unlocks the power of parallelism for your processing.

Pachyderm automates and scales the machine learning lifecycle while guaranteeing reproducibility.