As machine learning revolutionizes the way companies do business, leaders are increasingly turning to it to guide strategic decision making, from fraud detection to customer retention. Unfortunately, as with many new and complex technology implementations, success with machine learning can be elusive.

Gartner reports that up to 85 percent of AI projects cannot deliver as promised.

Gartner, 2018

Many factors contribute to why machine learning projects fail, stall, or never come to fruition. Common pitfalls include overly ambitious objectives, wrong or insufficient data, and poor collaboration between business and project teams.

Proper management is crucial to ensure your machine learning initiative delivers valuable results to its stakeholders—a task that goes beyond simply knowing why a machine learning project has failed. This is a team-level challenge, and the true heart of MLOps: What does it take to align teams and technology to help your organization meet and surpass its goals with technology as complex as machine learning?

Get your models to market faster and drive machine learning value with the following MLOps strategies and process improvements.

Roll Back to Stable Builds

It is common for machine learning teams to start with a seed dataset and make iterative improvements over time. Starting with the simplest workable model can, in some cases, get a first version deployed very quickly.

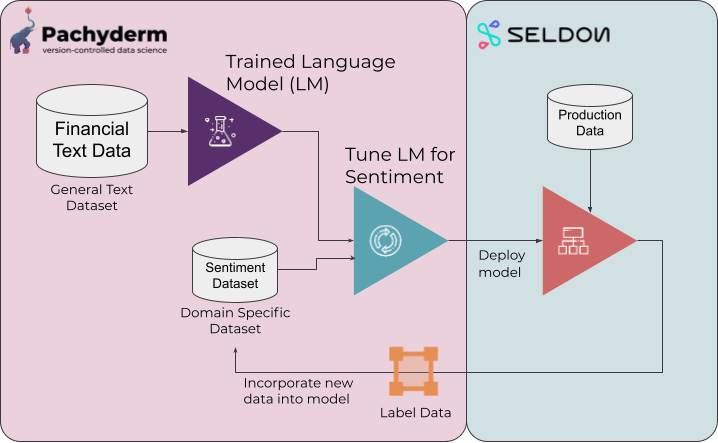

As complexity increases, the potential for bugs and other incidents rises. In a traditional codebase, version control is applied to the application so that changes can be rolled back if they cause downtime. When it comes to machine learning, there are two sides to the story: code and data. The Code-Data Loop represents the continuous relationship between your machine learning model and the datasets used for training and production.

What if you could manage your data the way you manage your code? When something breaks, you could return to a stable state by restoring the previously used version of the data. Pachyderm’s automated data versioning lets your project team identify the exact point where things went downhill and return to stability.

MLOps Process Improvement: Maintain versioned data history for superior model management

With the right approach to data hygiene, it doesn’t matter if your data moves or changes: the right versioning tool records every point at which the data differs, so your ML model’s results stay reproducible. Pachyderm automates the process of identifying and tracking changes to dynamic datasets for enterprise machine learning teams.
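To make the idea of rolling data back concrete, here is a minimal sketch in Python. It is not Pachyderm's API; the `DatasetHistory` class and its `commit`, `checkout`, and `rollback` methods are hypothetical names invented for illustration, and the whole history lives in memory. It only shows the general pattern: every change to the data becomes an immutable, content-addressed snapshot, and returning to stability means pointing back at a known-good snapshot.

```python
import hashlib
import json


class DatasetHistory:
    """Toy append-only version history for a dataset (illustrative only)."""

    def __init__(self):
        self._snapshots = {}  # commit id -> stored snapshot of the records
        self._log = []        # commit ids, oldest first
        self.head = None      # commit id currently served to training

    def commit(self, records):
        # Derive the commit id from the content, so identical data
        # always maps to the same id.
        payload = json.dumps(records, sort_keys=True).encode("utf-8")
        commit_id = hashlib.sha256(payload).hexdigest()[:12]
        self._snapshots[commit_id] = json.loads(payload)
        self._log.append(commit_id)
        self.head = commit_id
        return commit_id

    def checkout(self, commit_id=None):
        # Return a copy so callers cannot mutate stored history.
        snapshot = self._snapshots[commit_id or self.head]
        return json.loads(json.dumps(snapshot))

    def rollback(self, commit_id):
        # Move head back to a known-good commit; later commits stay in the log.
        if commit_id not in self._snapshots:
            raise KeyError(f"unknown commit: {commit_id}")
        self.head = commit_id


history = DatasetHistory()
good = history.commit([{"id": 1, "label": "fraud"}, {"id": 2, "label": "ok"}])
bad = history.commit([{"id": 1, "label": None}, {"id": 2, "label": "ok"}])

history.rollback(good)         # suppose the second commit broke training
restored = history.checkout()  # data as of the known-good commit
```

Because the bad commit stays in the log after the rollback, the team can still inspect it later to understand what went wrong.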

Use DataOps to Protect Engineering Time

Data engineers are highly sought-after professionals, a fact that becomes undeniable as AI/ML initiatives take center stage at many enterprises. So if you want your engineers to stay when they’re offered enticing opportunities, learn to manage their workflow rather than placing every machine learning-related burden on them.

Sometimes, machine learning projects fail because the entire program relies on a single engineer. New offers can and will cause your personnel to change over the lifecycle of your projects, and failing to account for this in the planning stages can send a new hire back to the drawing board.

Whether your machine learning program is a one-engineer show or you already have a team of specialists, maintain project continuity by equipping your teams, large and small, with the best tools.

Data engineers have to balance their time and attention across both sides of the Code-Data Loop. Machine learning operations require data to be fetched, ingested, and processed before it can be used for experimentation and training, or fed to your model to generate results in production. Once this process is running on a repeating cycle, monitoring and training drive iteration through continuous delivery.

Powering the loop requires maintaining development momentum. Time spent repairing brittle data pipelines and investigating inconsistent results comes at the expense of engineers focusing their energy on building features and models that drive value.

MLOps Process Improvement: Automate data versioning to simplify bug hunts

Pachyderm’s automated data versioning makes it easy for teams to monitor all data changes, simplifying bug diagnosis and resolution. Manual processes are time-consuming and error-prone, further adding to engineers’ workload. But with automated data versioning, your data engineers can spend more time tackling new challenges and solving problems instead of wasting energy on responding to pager incidents to resolve the same old issues.

This approach gives your engineers true ownership of machine learning projects and leaves them free to respond when unexpected downtime does occur.
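As a rough illustration of why a versioned history shortens a bug hunt, the sketch below compares two versions of a dataset and reports which records were added, removed, or changed. The `diff_versions` function, the `id` key, and the sample records are all assumptions made up for this example; a real versioning tool would track changes at the file or commit level rather than through a helper like this.

```python
def diff_versions(old, new, key="id"):
    """Summarize what changed between two versions of a dataset."""
    old_by_key = {row[key]: row for row in old}
    new_by_key = {row[key]: row for row in new}
    return {
        "added": sorted(new_by_key.keys() - old_by_key.keys()),
        "removed": sorted(old_by_key.keys() - new_by_key.keys()),
        "changed": sorted(
            k for k in old_by_key.keys() & new_by_key.keys()
            if old_by_key[k] != new_by_key[k]
        ),
    }


# Hypothetical data: version 2 flips the sign of one amount and adds a record.
v1 = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 25.0}]
v2 = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": -25.0}, {"id": 3, "amount": 7.5}]

report = diff_versions(v1, v2)
```

With a report like this in hand, an engineer investigating a sudden drop in model quality can go straight to the records that changed instead of re-auditing the whole dataset.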

Invest in Momentum with Continuous Delivery

Proper model management involves balancing the investment of engineering time into upkeep and feature development. The same is true for the teams on the other end of the Code-Data Loop: your data stakeholders.

The collection and maintenance of data for business intelligence is the purview of data scientists, statisticians, and many more contributors. Restricting their ability to integrate and manage data pipelines puts these teams on the back foot and leaves them less room to experiment and innovate, driving down the potential value your machine learning projects can provide to product and business outcomes.

With containerized pipelines and immutable lineage, data ingestion and delivery are more accessible to data scientists and researchers, reducing their reliance on engineering time to build and manage datasets and delivery. This is another element of MLOps: coordinating code and data management between stakeholder teams.

MLOps Process Improvement: Break down the barriers between scientists and their data

Experiments turn into products that have a tangible impact on the business. Give scientists and analysts a solid data foundation for their ML projects, no matter what language they’re used to working with. By building data pipelines with Docker containers, Pachyderm’s Enterprise Pipelines provide a code-agnostic experience for data and analysis teams. Our visual, modular approach simplifies rapid pipeline iteration for data scientists contributing to machine learning projects.
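The sketch below gives a simplified picture of what lineage means in a pipeline: each step records which input, which code version, and which output belong together. It does not run containers or call Pachyderm; `run_step`, `fingerprint`, and the image tags `example/clean:1.0` and `example/sum:1.0` are hypothetical names used only to illustrate the bookkeeping.

```python
import hashlib
import json


def fingerprint(obj):
    """Stable short hash of any JSON-serializable value."""
    data = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(data).hexdigest()[:12]


def run_step(name, image, transform, inputs, lineage):
    """Run one pipeline step and append a provenance record for its output."""
    output = transform(inputs)
    lineage.append({
        "step": name,
        "image": image,  # hypothetical container image tag, not actually run
        "input": fingerprint(inputs),
        "output": fingerprint(output),
    })
    return output


def parse_amounts(rows):
    """Keep only rows whose amount parses as a number."""
    parsed = []
    for row in rows:
        try:
            parsed.append({**row, "amount": float(row["amount"])})
        except ValueError:
            continue  # drop rows with unparseable amounts
    return parsed


def total_amount(rows):
    return sum(row["amount"] for row in rows)


lineage = []
raw = [{"id": 1, "amount": "10.5"}, {"id": 2, "amount": "n/a"}]

clean = run_step("clean", "example/clean:1.0", parse_amounts, raw, lineage)
total = run_step("sum", "example/sum:1.0", total_amount, clean, lineage)
```

Because the output fingerprint of the first step matches the input fingerprint of the second, any result can be traced back through the chain to the exact data and code version that produced it.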

Maintain an Uptime-First Approach with Pachyderm

Many factors contribute to why machine learning projects fail, and knowing them should be your cue to take a more proactive approach to addressing downtime. An uptime-first strategy works well, particularly when you have the right talent and tools.

Pachyderm offers best-in-class data solutions for successful MLOps. Read our whitepaper to learn how it can scale your machine learning lifecycle and reduce time to value.