What is data lineage?

At its most basic, it’s the history of your data.

It tells us where that data comes from, where it lives, and how it’s transformed over time. As AI teams pore over data looking for patterns, or process and massage it into shape for training, each of those changes could mean the difference between a powerful new algorithm and one that joins the 87% of models that never make it to production.

In data science we use many of the same advanced tools that software developers rely on every day. Data science teams are already adept at using well-known tools like Git and GitHub to tame their ever-growing sprawl of Python and R code, but as their research and projects grow, they quickly discover they need version-controlled data too.

Why track lineage? The reasons are simple. Changing data changes your experiments.

If your data changes after you’ve run an experiment, you can’t reproduce that experiment. Reproducibility is utterly essential in every data science project. For continually updated models, new data can change the performance of an algorithm as it retrains. Perhaps that new influx of information has outliers, inconsistencies, or corruptions that your team couldn’t see at the outset. Suddenly a production fraud detection model is showing too many false positives and customers are calling in upset as their accounts get suspended.

Even a simple change to the underlying data can wreak havoc on reproducible data science. If all your audio files are stored in a lossless format like FLAC and someone on the team converts them all to a lossy format like MP3, will your algorithms suffer? Worse, what if someone converted your MIDI files, which record the notes themselves along with their velocity and timing, into an audio format that carries none of that symbolic information? Now your LSTMs or attention-based models like Magenta’s Music Transformer have nothing to work from, and you have to go back and recreate the original dataset from backup.

Version control for data differs in key ways from version control in traditional software development workflows. Git excels at handling source code text, but the venerable platform was never built to handle gigabytes or petabytes of binary data like video and images. That means version control for data looks a little different.

A Short Glossary for Data Lineage

To understand how it’s different, we should first define a few terms used in managing data:

- Version control

- Data lineage

- Data provenance

In practice, they’re often used interchangeably. But they do have subtle differences that are worth thinking about.

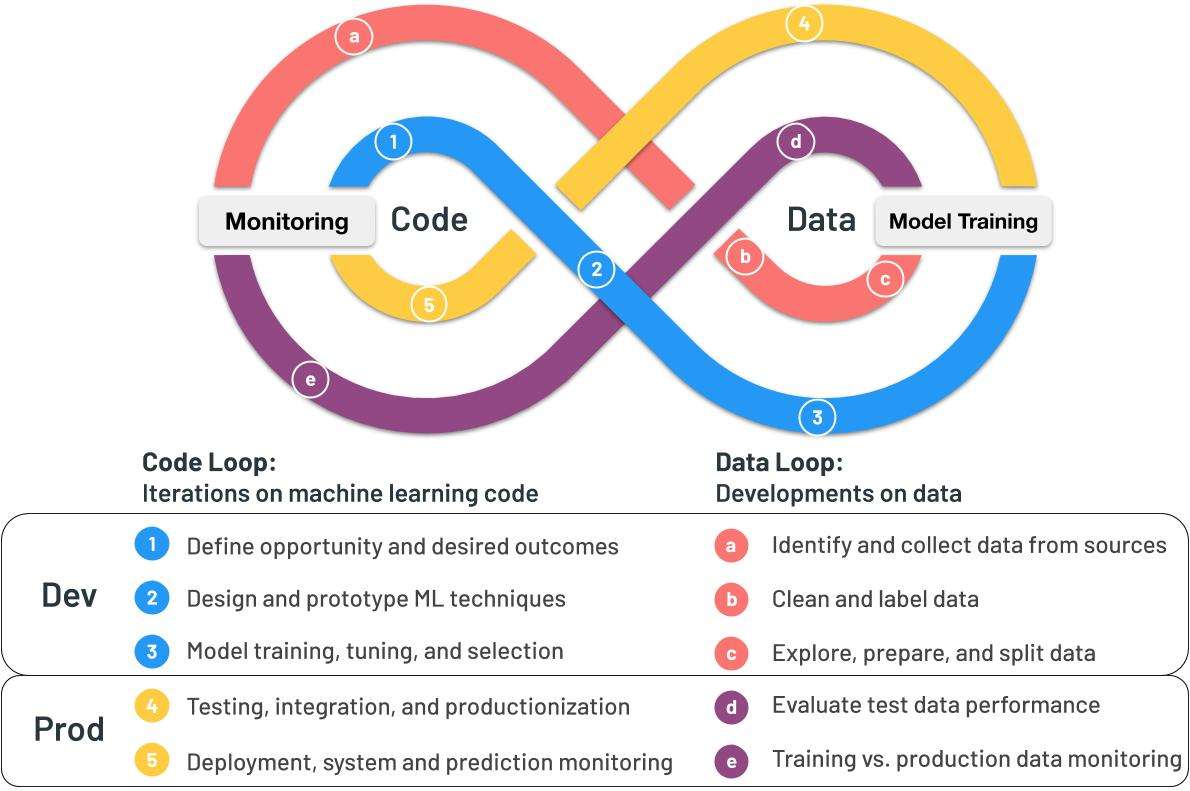

Data lineage is an umbrella term, in the same way that AI is an umbrella term that encompasses “machine learning” and “deep learning.” It describes the entire data life cycle from start to finish: the origins of the data, how it’s processed, and where it moves, along with what happens to it as it passes through diverse processes and transformations. Understanding the history of your data gives you valuable insight into your analytics pipelines and makes training and reproducing experiments much easier, because you can trace any problem back to its root.

The word “provenance” means “the origin or earliest known history of something.” In data science, data provenance is a record of the data’s origins. As your data goes through complex transformations and workflows, it’s crucial to know where it all began. Where did it come from? Knowing which database, IoT device, warehouse, or web scraper produced the data tells scientists something about its quality. It also lets them trace errors back to their source. If a database owner changed fields or changed a sort order in the database, that can affect the outcome of training. Data scientists need to find the root of those changes, working across business divisions to either roll back to an earlier state or incorporate the changes into their analysis.
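To make the idea of tracing errors back to their source concrete, here is a minimal Python sketch of a lineage graph. The `Dataset` class, its fields, and the example names (`orders_db`, `clickstream_scraper`, and so on) are hypothetical illustrations, not Pachyderm’s API: each derived dataset simply records its parents and the transformation that produced it, and walking those links backwards recovers the original sources.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    """A node in a hypothetical lineage graph (illustrative, not a real API)."""
    name: str
    transform: str = "ingest"            # how this dataset was produced
    parents: tuple[Dataset, ...] = ()    # datasets it was derived from


def trace_to_sources(ds: Dataset) -> list[str]:
    """Walk parent links back to the root datasets (those with no parents)."""
    if not ds.parents:
        return [ds.name]
    sources: list[str] = []
    for parent in ds.parents:
        for name in trace_to_sources(parent):
            if name not in sources:      # keep first-seen order, drop duplicates
                sources.append(name)
    return sources


def history(ds: Dataset, depth: int = 0) -> list[str]:
    """Render the lineage as indented lines, starting from the newest dataset."""
    lines = ["  " * depth + f"{ds.name} <- {ds.transform}"]
    for parent in ds.parents:
        lines.extend(history(parent, depth + 1))
    return lines


# Hypothetical pipeline: two raw sources feed a cleaned, joined training set.
orders = Dataset("orders_db")
clicks = Dataset("clickstream_scraper")
clean_orders = Dataset("clean_orders", "drop null rows", (orders,))
training = Dataset("training_set", "join on customer_id", (clean_orders, clicks))

print(trace_to_sources(training))  # ['orders_db', 'clickstream_scraper']
print("\n".join(history(training)))
```

If the training set suddenly looks wrong, `trace_to_sources` narrows the search to the two systems that fed it, and `history` shows every transformation in between.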

Version-controlled data means precisely what you’d expect: with a complete history of the original data and all the deltas of changes made to it between then and now, we can roll datasets backwards and forwards in time.
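The idea of rolling a dataset backwards and forwards from a base snapshot plus deltas can be sketched in a few lines of Python. This is a toy model under simplifying assumptions: a dataset is a dict mapping file paths to contents, and each delta lists the files added or changed and the files deleted. The `VersionedDataset` class is hypothetical, and real systems store and compress changes very differently.

```python
from typing import Optional

# A delta maps a path to its new contents, or to None if the file was deleted.
Delta = dict[str, Optional[bytes]]


class VersionedDataset:
    """Toy version store: one base snapshot plus an ordered list of deltas."""

    def __init__(self, base: dict[str, bytes]) -> None:
        self.base = dict(base)
        self.deltas: list[Delta] = []

    def commit(self, delta: Delta) -> int:
        """Record a delta and return the new version number."""
        self.deltas.append(dict(delta))
        return len(self.deltas)

    def checkout(self, version: int) -> dict[str, bytes]:
        """Rebuild the dataset as it was at `version` (0 = the base)."""
        if not 0 <= version <= len(self.deltas):
            raise ValueError(f"unknown version {version}")
        state = dict(self.base)
        for delta in self.deltas[:version]:
            for path, contents in delta.items():
                if contents is None:
                    state.pop(path, None)   # deletion
                else:
                    state[path] = contents  # addition or modification
        return state


ds = VersionedDataset({"a.csv": b"1,2", "b.csv": b"3,4"})
ds.commit({"a.csv": b"1,2,5"})             # version 1: modify a.csv
ds.commit({"b.csv": None, "c.csv": b"6"})  # version 2: delete b.csv, add c.csv

print(ds.checkout(2))  # {'a.csv': b'1,2,5', 'c.csv': b'6'}
print(ds.checkout(0))  # {'a.csv': b'1,2', 'b.csv': b'3,4'}
```

Because every delta is kept, any version can be rebuilt: rolling back means applying fewer deltas, and rolling forward means applying more.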

Example: Maintaining Data Quality With Automated Version Control

Perhaps a scientist shrinks a million images down to a smaller size to see if she can get results of the same quality with reduced training time. But as she retrains the model, she winds up with plummeting accuracy scores because those images just don’t have enough detail for robust feature extraction.

She resizes them again, this time to a higher resolution, and starts training again with equally poor results. Maybe she runs dozens or even a hundred different experiments with lots of different versions of the files.

After running these tests and making these transformations… which version of the files are her training runs pointed at now? Can she remember? How many copies of the data does she now have, clogging up her storage?

In the end, our scientist needs to roll back to the original file sizes to reproduce earlier results. Without strong version control it’s easy to mix up which training runs point to which set of files, and it’s easy to balloon your storage bill with lots of duplicate copies of your data. And amid all of this noise, the original results can be lost because the original data is lost.
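One way to avoid losing track of which training run used which files is to fingerprint the exact dataset version and record it alongside each run. The sketch below is hypothetical: the `dataset_fingerprint` and `record_run` helpers and the in-memory run log are illustrations, not part of any particular tool, and the file contents are placeholders. It hashes file paths and contents in sorted order with SHA-256, so identical data always yields the same fingerprint and any change yields a different one.

```python
import hashlib


def dataset_fingerprint(files: dict[str, bytes]) -> str:
    """Hash paths and contents in a stable order into one SHA-256 digest."""
    h = hashlib.sha256()
    for path in sorted(files):
        # Length-prefix each field so different splits of the bytes can't collide.
        for part in (path.encode(), files[path]):
            h.update(len(part).to_bytes(8, "big"))
            h.update(part)
    return h.hexdigest()


# Hypothetical in-memory run log; a real setup would persist this somewhere.
run_log: list[dict[str, str]] = []


def record_run(run_id: str, files: dict[str, bytes]) -> None:
    """Store a training run together with the fingerprint of its input data."""
    run_log.append({"run_id": run_id, "data": dataset_fingerprint(files)})


# Placeholder bytes standing in for full-size and shrunk images.
original = {"img_001.jpg": b"<full-res 1>", "img_002.jpg": b"<full-res 2>"}
shrunk = {"img_001.jpg": b"<small 1>", "img_002.jpg": b"<small 2>"}

record_run("baseline", original)
record_run("shrunk-images", shrunk)

# Later: which runs actually used the original files?
target = dataset_fingerprint(original)
print([r["run_id"] for r in run_log if r["data"] == target])  # ['baseline']
```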

Versioning Without Duplication for Data Science

Software versioning developed out of necessity as teams and the software they created grew bigger and bigger. The Linux kernel, which powers everything from cloud servers to IoT devices to the machines of the deep learning era, has over 15 million lines of code, with 15,000 developers at 1,400 companies contributing to it. There is simply no way to work on a project that massive without a tool like Git to manage it all.

But it wasn’t always that way. Early programming teams started small. Sometimes a single coder could complete an entire project. A team might have five or ten coders on it, each working on a discrete part of the code base. But as software got more and more complex, version control systems evolved to meet that complexity challenge.

We’ve seen three major generations of version control systems that mirror the evolution of programming and how programmers collaborate today.

- The first generation of version control was primitive. It had no networking capabilities and it was handled entirely with locks, which meant only one person could work on a set of files at a time.

- The second generation allowed multiple people to collaborate on the same codebase over a local network. Most programming teams worked entirely under one roof at the time, behind the same corporate firewall, so centralized client/server systems like Microsoft Visual SourceSafe and CVS (Concurrent Versions System) dominated the field. The biggest downside of the second generation was that it required coders to merge all of their changes before committing them. If some of your files failed to merge cleanly, you couldn’t commit anything to the repository until you fixed them, which left code in a broken or unfinished state that wouldn’t compile while a developer struggled to make it all work.

- The third generation of platforms emerged as highly distributed teams, in different parts of the world and on different networks, needed to work together. Modern distributed version control systems such as Git let developers commit their work first and merge differences with other versions later. This made the platforms far more scalable.

Data lineage platforms like Pachyderm mark the fourth generation of version control systems. They’re designed to deal with the growing challenge of versioning datasets in AI development pipelines.

The New Generation of Data Versioning for ML

Fourth-generation systems handle binary data much more easily than third-generation systems. On the back end, Git stores data in its own object database of blobs, trees, commits, and annotated tags, but that database was not designed for massive amounts of video, audio, images, and text, the unstructured data that makes up 80% of the data being collected by modern organizations. There are a few key reasons for its struggles with larger datasets.
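Git names every blob by a SHA-1 hash of a short header (the word `blob`, the size in bytes, and a NUL byte) followed by the file’s full contents. The Python sketch below reproduces that naming scheme for Git’s default SHA-1 object format, so it should match what `git hash-object` prints for the same bytes. It illustrates one consequence for binary data: change a single byte of a large video and Git records a brand-new blob for the whole file. Git’s packfiles can delta-compress objects later, but already-compressed media such as JPEG or MP4 typically deltas poorly.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Return the object ID Git assigns to `content` as a blob (SHA-1 format)."""
    header = b"blob %d\x00" % len(content)
    return hashlib.sha1(header + content).hexdigest()


print(git_blob_id(b""))  # e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

# Flipping one byte in a simulated large binary yields an unrelated object ID,
# so Git stores a second full blob rather than a small patch.
video_v1 = bytes(10_000_000)          # 10 MB of zeros standing in for a video
video_v2 = b"\x01" + video_v1[1:]
print(git_blob_id(video_v1) == git_blob_id(video_v2))  # False
```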

The first reason is that Git replicates a repository locally to a developer’s workstation or laptop, pulling it down from the Git server or a cloud service like GitHub. Local replication works well for most use cases, because it lets developers keep working when they lose network connectivity and it reduces lag, but it’s utterly unrealistic to try to replicate terabytes or petabytes of data locally. The network couldn’t handle it, and there simply wouldn’t be enough space on a laptop to store it all.
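A quick back-of-the-envelope calculation shows why local replication breaks down at this scale. The `transfer_days` helper below is a hypothetical illustration that assumes an ideal link running at its full rated speed with no protocol overhead, so real transfers would take longer. The sizes use decimal units and the 1 Gbps link speed is an illustrative choice.

```python
def transfer_days(size_bytes: float, link_bits_per_sec: float) -> float:
    """Days needed to move `size_bytes` over an ideal link (no overhead)."""
    seconds = size_bytes * 8 / link_bits_per_sec
    return seconds / 86_400


TB = 10**12   # decimal terabyte
PB = 10**15   # decimal petabyte
GBPS = 10**9  # 1 gigabit per second

print(f"{transfer_days(1 * TB, GBPS):.2f} days")  # 0.09 days (about 2.2 hours)
print(f"{transfer_days(1 * PB, GBPS):.1f} days")  # 92.6 days
```

Even under these generous assumptions, copying a single petabyte to one workstation would tie up a dedicated gigabit link for about three months.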

Pachyderm’s Approach to Unstructured Data Versioning

Pachyderm uses a central repository, backed by a high-performance object store like MinIO, Amazon S3, or Google Cloud Storage, with its own specialized file system called the Pachyderm File System, or PFS for short. Instead of mirroring all the content locally, you ingest your data once into PFS and then work directly on the central version. You can continuously update the master branch of your repository while experimenting with other branches.
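The core idea behind a central, deduplicating repository can be sketched with a content-addressed store: each file’s bytes are stored once under their hash, and a commit is just a mapping from paths to those hashes. This is a simplified illustration, not how PFS is implemented internally. The `ObjectStore` class and `ingest` function are hypothetical, and a Python dict stands in for an object store such as S3 or MinIO.

```python
import hashlib


class ObjectStore:
    """Hypothetical content-addressed store; a dict stands in for S3/MinIO."""

    def __init__(self) -> None:
        self.objects: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        key = hashlib.sha256(data).hexdigest()
        self.objects.setdefault(key, data)  # identical bytes are stored once
        return key


def ingest(store: ObjectStore, files: dict[str, bytes]) -> dict[str, str]:
    """Upload files once and return a commit: path -> object key."""
    return {path: store.put(data) for path, data in files.items()}


store = ObjectStore()
commit1 = ingest(store, {"cat.jpg": b"<cat>", "dog.jpg": b"<dog>"})
# A later commit that changes one file reuses the unchanged object.
commit2 = ingest(store, {"cat.jpg": b"<cat>", "dog.jpg": b"<dog, cropped>"})

print(len(store.objects))                        # 3, not 4
print(commit1["cat.jpg"] == commit2["cat.jpg"])  # True
```

Because commits hold only keys, anyone working against the central store can read a given version without first copying the whole dataset to their machine.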

The concept of a merge disappears in this fourth generation system. There are ways to merge binary files, but with a thousand videos or a million images that quickly becomes untenable. A human needs to step in to resolve a merge conflict in Git. They do what humans do best, resolve differences using human intuition and ingenuity. But having a human go through a thousand different images to see the differences would take days or weeks or even months. It just doesn’t scale.

There is simply no way to merge different binary files in the way you could merge text files filled with source code. If you have two digital images, one a high-resolution JPG and the other reduced by 75%, it’s also nearly impossible to create a new file from the delta between them in any way that would be meaningful. Without merges, you don’t have to worry about merge conflicts and trying to resolve them later. Instead, you can branch the data, run experiments, and switch between branches as needed. You can make one branch staging, another production, and another experimental. If the experimental branch becomes the key branch, you promote it to master by “copying” it onto master, which copies only the pointers to its files and doesn’t make a second copy of the data.
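Promoting a branch by copying pointers rather than data can be modeled as reassigning a name. In this hypothetical sketch, a branch is a name that points at a commit ID and each commit maps paths to object keys in a shared store, so making `experimental` the new `master` moves a single reference and copies no file contents. The structures and the `promote` and `read` helpers are illustrative assumptions, not Pachyderm’s actual API or storage layout.

```python
# Shared object store: object key -> file bytes (contents written once).
objects = {
    "k1": b"<full-res image>",
    "k2": b"<cropped image>",
}

# Commits map file paths to object keys; they never hold the bytes themselves.
commits = {
    "c1": {"img.jpg": "k1"},
    "c2": {"img.jpg": "k2"},
}

# Branches are just names pointing at commits.
branches = {"master": "c1", "staging": "c1", "experimental": "c2"}


def promote(source: str, target: str) -> None:
    """Point `target` at the same commit as `source`; no data is copied."""
    branches[target] = branches[source]


def read(branch: str, path: str) -> bytes:
    """Resolve branch -> commit -> object key -> bytes."""
    return objects[commits[branches[branch]][path]]


promote("experimental", "master")
print(branches["master"])         # c2
print(read("master", "img.jpg"))  # b'<cropped image>'
print(len(objects))               # 2: nothing was duplicated
```

The old master commit `c1` is still intact, so undoing the promotion is just another pointer reassignment.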

Immutable Lineage for Mission-Critical Data

The second major advantage of a fourth-generation version control platform is that it enforces immutability at the source of your data. Your object store becomes a single source of truth. As new data flows into the system, it’s tracked from the moment it hits the Pachyderm File System. If you have telematics data coming in from sensors in your fleet of delivery trucks, that data gets committed. Whatever change a data scientist makes, whether that’s converting to a different file format, deleting or merging rows in a text file, or running a color change operation on a video to highlight certain features, Pachyderm keeps track of every moment of that history.
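Immutability can be illustrated with an append-only chain of frozen commit records: a committed record cannot be edited in place, so every change, such as a file-format conversion, has to become a new commit that points back at its parent. This Python sketch is a hypothetical model of the idea, not Pachyderm’s data model; the `Commit` fields, the placeholder content hashes, and the example messages are made up for illustration.

```python
from __future__ import annotations

import dataclasses
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commit:
    """A hypothetical immutable record of one change to a dataset."""
    parent: Optional[Commit]
    message: str
    files: tuple[tuple[str, str], ...]  # (path, placeholder content hash) pairs

    @property
    def id(self) -> str:
        """Derive an ID from the parent's ID and this commit's contents."""
        parent_id = self.parent.id if self.parent else ""
        payload = repr((parent_id, self.message, self.files)).encode()
        return hashlib.sha256(payload).hexdigest()[:12]


def log(head: Commit) -> list[str]:
    """Walk from the newest commit back to the first one."""
    out: list[str] = []
    node: Optional[Commit] = head
    while node is not None:
        out.append(node.message)
        node = node.parent
    return out


c1 = Commit(None, "ingest telematics batch", (("trucks.csv", "a1"),))
c2 = Commit(c1, "convert CSV to Parquet", (("trucks.parquet", "b2"),))

try:
    c1.message = "rewrite history"  # type: ignore[misc]
except dataclasses.FrozenInstanceError:
    print("committed data cannot be modified in place")

print(log(c2))  # ['convert CSV to Parquet', 'ingest telematics batch']
```

Because each ID folds in its parent’s ID, silently altering an old commit would also change every ID after it, which makes tampering easy to detect.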

Automated and Efficient Version Control

The third advantage is efficiency. Pachyderm stores only the changes to data, not separate copies of each file every time it changes. That allows for snapshotting and rollback to a known good state. While developers are used to snapshots of code and the ability to return to an earlier version if something goes wrong, many of today’s AI workflow systems completely lack that ability for data. The data is stored outside of the AI development pipeline in scattered databases or file systems that don’t enforce ironclad immutability. That means if an administrator changes or moves that data, early experiments are lost. Even worse, if data scientists have to keep lots of different copies of their data, instead of just the changes between versions, they are left with a quickly ballooning storage bill because redundant copies are scattered everywhere.
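A rough calculation shows why storing only changes matters for the storage bill. The scenario is hypothetical: a 1 TB dataset, 100 experiments, and each experiment rewriting 1% of the files. The two illustrative helpers compare keeping a full copy per experiment with keeping the original plus only the changed files; compression and finer-grained deduplication, which real systems may also use, are ignored.

```python
def full_copy_tb(base_tb: float, experiments: int) -> float:
    """Original plus one complete copy of the dataset per experiment."""
    return base_tb * (1 + experiments)


def changes_only_tb(base_tb: float, experiments: int, changed_frac: float) -> float:
    """Original plus only the changed portion for each experiment."""
    return base_tb + experiments * base_tb * changed_frac


print(full_copy_tb(1.0, 100))           # 101.0
print(changes_only_tb(1.0, 100, 0.01))  # 2.0
```

In this scenario, keeping only the changes needs about 50 times less storage than keeping full copies.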

All of this adds up to a robust way to keep track of the entire history of your data from start to finish – and that’s the essence of true data lineage.

Today’s AI development teams mirror the early days of software development. Many AI teams are relatively small, with a few data scientists trading files and doing ad-hoc data version control. AI R&D teams often lack the basics we’ve come to expect in an enterprise environment, from role-based access control to snapshots, version control, logging, and dashboards.

Data lineage is one of those problems an AI research team doesn’t know it has until it starts to scale. When a team is only working on one or two projects, it’s easy to keep track of things with spreadsheets, word of mouth, and Slack. But as the team grows and they take on more projects, that kind of ad-hoc system breaks down fast.

To really take control of your data and your AI development workflows, you need a strong, fourth-generation version control system like Pachyderm. Building on that platform lets you build for tomorrow’s challenges today.